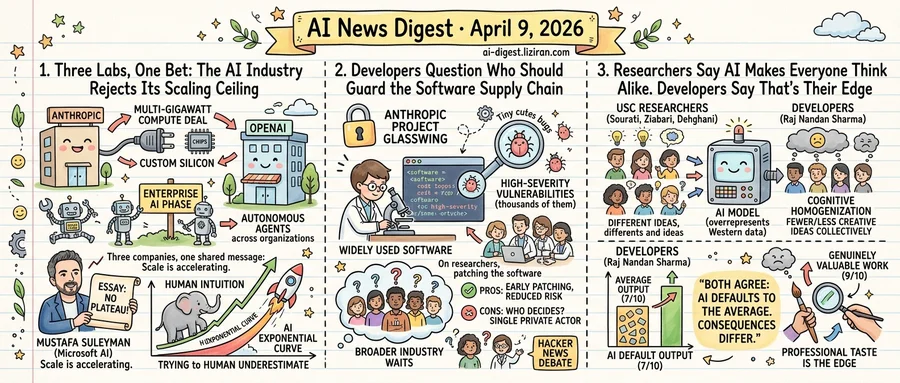

01 Three Labs, One Bet: The AI Industry Rejects Its Scaling Ceiling

Anthropic signed a multi-gigawatt compute deal. Within the same week, OpenAI declared a new phase for enterprise AI. Microsoft's head of AI published an essay arguing the technology won't plateau. Three companies, three audiences, one shared message: scale is accelerating.

Each signal arrived through a different channel. Together they rebut the persistent claim that AI scaling has hit diminishing returns.

Anthropic's move is the most concrete. The company expanded its partnership with Google Cloud and Broadcom to secure multiple gigawatts of next-generation compute. Broadcom's involvement signals a custom silicon strategy, not generic GPU procurement. Designing application-specific chips requires years of lead time and billions in upfront capital. No company places that bet expecting diminishing returns from larger models.

OpenAI's signal came through its enterprise playbook. The company outlined what it calls "the next phase of enterprise AI," describing adoption as accelerating across industries. It positioned ChatGPT Enterprise, Codex, and company-wide autonomous agents as a unified product surface. Six months ago, this stack was primarily a chatbot interface. Now OpenAI is building toward agent-driven workflows that span entire organizations.

Mustafa Suleyman's MIT Technology Review essay completed the trifecta. His core argument: human intuition evolved for linear progress and systematically underestimates exponential curves. The piece reads less as philosophy than as strategic positioning. Microsoft's head of AI chose this moment to publicly reject the plateau thesis, and he wrote not for researchers but for the executives and investors who direct capital.

The timing matters because of what preceded it. For roughly a year, a counter-narrative gained traction: scaling laws were weakening and next-generation models were delayed. Bigger training runs weren't producing proportional capability gains. Industry observers questioned whether more compute would yield meaningful jumps. None of these three announcements directly addresses those critiques. They don't need to. Companies don't lock in gigawatts of custom silicon and expand into autonomous agents while publishing scaling manifestos if they believe the ceiling is near.

The skeptics may still prove right. But the capital allocation says the labs are betting they won't.

02 Developers Question Who Should Guard the Software Supply Chain

The argument that consumed Hacker News wasn't about whether Claude Mythos is dangerous. It was about who gets to decide.

Anthropic's Project Glasswing restricts Mythos, a general-purpose model the company says has exceptional cybersecurity research abilities, to a small group of vetted security researchers. The stated rationale: Mythos Preview has already found thousands of high-severity vulnerabilities in widely used software, and the broader industry needs time to patch before a general release.

Simon Willison, whose technical assessments carry weight across the open-source community, called the restriction "necessary." He cited Anthropic's published system card, which details Mythos's capabilities, as proof of good faith. But his endorsement carried a qualifier that captured the wider unease: the arrangement depends entirely on trusting Anthropic's own evaluation of its own model.

That trust question generated 791 comments and 1,475 upvotes on Hacker News. The fault line was structural, not technical. Few disputed that a model finding thousands of production vulnerabilities poses real risks. The debate was over who controls the response.

The pragmatic case writes itself. Anthropic built the model, understands its capabilities, and moved preemptively rather than waiting for damage. Glasswing gives security teams early access to find and fix vulnerabilities before a broader release.

The objection is equally straightforward. An AI company deciding which vulnerabilities get found, by whom, and on what timeline places control over critical infrastructure security in a single private actor. No external body validated Anthropic's threat assessment or confirmed that restricted access is the right threshold. The company serves as both manufacturer of the risk and its self-appointed regulator.

Willison considered published transparency sufficient. For many in the thread, transparency without independent verification is disclosure, not accountability.

Glasswing sets a template. If the next frontier model with offensive capabilities follows the same playbook, the company that built it will identify the risk, restrict access, and pick the auditors. Software security then becomes a function of corporate judgment with no external check.

03 Researchers Say AI Makes Everyone Think Alike. Developers Say That's Their Edge

Individuals using AI chatbots generate more ideas on their own. Groups using those same tools produce fewer and less creative ideas collectively. That paradox anchors an opinion paper published March 11 in Trends in Cognitive Sciences by USC researchers Zhivar Sourati, Alireza Ziabari, and Morteza Dehghani.

Their argument: large language models train on data that overrepresents Western, educated, industrialized societies. When millions of people route their writing and reasoning through the same models, distinct linguistic styles and reasoning strategies converge. LLMs favor linear chain-of-thought reasoning, the team writes, crowding out intuitive and abstract approaches. Rather than crafting original responses, users defer to model suggestions. Agency shifts from person to machine.

"The concern is not just that LLMs shape how people write or speak," the paper states, "but that they subtly redefine what counts as credible speech."

A contrasting read gained traction in developer communities. Software engineer Raj Nandan Sharma argued that taste — the ability to distinguish generic output from genuinely valuable work — is now the scarcest professional skill. His logic inverts the researchers' warning. Because AI produces competent output on demand, the person who can reject the merely adequate becomes more valuable, not less. AI creates what Sharma calls a "crowded 7 out of 10 world." The edge belongs to those who see the difference between 7 and 9.

Both sides share a premise: AI defaults to the average. They split on consequences. Dehghani's team sees a force pulling everyone toward that mean, eroding the cognitive diversity that fuels collective problem-solving. For Sharma, the same averaging serves as a filter, revealing who has real judgment and who was always producing median work.

The fracture goes deeper than optimism versus pessimism. If homogenization degrades reasoning itself, the capacity for taste may be precisely what erodes first. Sharma concedes the risk: passive selection without authorship reduces humans to "a discriminator in a mostly machine-driven loop." The USC team argues that more diverse training data could preserve cognitive variety. Neither side has evidence the other is wrong.

OpenAI Publishes Child Safety Blueprint for AI Developers

OpenAI released a framework for building age-appropriate safeguards into AI products, covering content filtering, age verification, and reporting mechanisms. The blueprint is aimed at third-party developers building on its APIs. (openai.com)

Claw-Eval Targets Blind Spots in AI Agent Benchmarks

A new evaluation suite exposes three gaps in how autonomous agents are tested: opaque grading that ignores intermediate steps, weak safety checks, and narrow interaction coverage. Claw-Eval includes 300 human-verified tasks across real software environments. (huggingface.co)

ThinkTwice Trains LLMs to Solve and Self-Correct in Two Phases

Researchers propose a training framework that alternates between solving reasoning problems and refining answers, using the same binary reward signal for both phases. The method improved accuracy across five math benchmarks without requiring external critique or correctness annotations. (huggingface.co)

Retrieval Systems Trained on Agent Behavior Outperform Human-Click Models

A study retrains information retrieval models using LLM agent interaction logs instead of human click data. Agent-optimized retrieval performed better when embedded in multi-turn reasoning loops, where traditional ranking signals break down. (huggingface.co)

ACES Proposes Ranking-Based Selection for LLM-Generated Code and Tests

When both candidate code and candidate tests may be wrong, counting test passes is unreliable. ACES uses a leave-one-out AUC method to rank code by test agreement patterns, sidestepping the circular dependency between code correctness and test correctness. (huggingface.co)
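To make the leave-one-out idea concrete, here is a minimal sketch of one way such a ranking could work. It is an illustration under our own assumptions, not the ACES authors' implementation: the function names `auc` and `rank_candidates`, the weighting rule (AUC above chance, floored at zero), and the toy pass matrix are all hypothetical. Each test is scored by how well it agrees with the ranking implied by every other test, and candidates are then ranked by the weighted sum of the tests they pass.

```python
from typing import List


def auc(scores: List[float], labels: List[bool]) -> float:
    """Chance that a random positive outscores a random negative (ties count half)."""
    pos = [s for s, lab in zip(scores, labels) if lab]
    neg = [s for s, lab in zip(scores, labels) if not lab]
    if not pos or not neg:
        return 0.5  # a test that everything passes (or fails) is uninformative
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def rank_candidates(passes: List[List[bool]]) -> List[int]:
    """passes[i][j] is True when candidate i passes test j. Returns indices, best first."""
    n_codes, n_tests = len(passes), len(passes[0])
    weights = []
    for j in range(n_tests):
        # Leave test j out: score each candidate by its passes on the other tests.
        held_out_scores = [
            sum(row[k] for k in range(n_tests) if k != j) for row in passes
        ]
        labels = [row[j] for row in passes]
        # Assumed weighting: how far above chance test j agrees with the rest.
        weights.append(max(0.0, auc(held_out_scores, labels) - 0.5))
    totals = [sum(w for w, p in zip(weights, row) if p) for row in passes]
    return sorted(range(n_codes), key=lambda i: totals[i], reverse=True)


# Toy pass matrix (hypothetical data): three candidates, four tests.
# Test 3 is passed only by the candidate that fails everything else.
passes = [
    [True, True, True, False],
    [True, True, False, False],
    [False, False, False, True],
]
ranking = rank_candidates(passes)
```

In this toy case the fourth test contradicts the other three, so it receives zero weight and the candidate that passes only that test earns no credit for it. A sketch like this still inherits the majority's view of correctness, which is exactly the kind of circularity the paper says it is trying to sidestep.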

Video-MME-v2 Benchmark Exposes Gap Between Leaderboard Scores and Real Video Understanding

Existing video understanding benchmarks are saturating, inflating model scores beyond actual capability. Video-MME-v2 introduces a three-tier evaluation hierarchy designed to test robustness and faithfulness rather than pattern matching. (huggingface.co)

Game Bug Benchmark Tests Whether LLMs Can Do QA Work

GBQA ships 30 playable games containing 124 human-verified bugs at three difficulty levels. Current LLMs detected bugs in controlled settings but struggled with complex runtime interactions that require extended gameplay. (huggingface.co)

Memory Intelligence Agent Cuts Storage Costs for Long-Running Research Agents

Deep research agents that store full reasoning trajectories face ballooning retrieval and storage overhead. MIA compresses past experiences into reusable patterns instead of raw trajectories, reducing memory costs while preserving reasoning quality. (huggingface.co)

LIBERO-Para Reveals VLA Robots Break When Instructions Are Rephrased

Vision-language-action models fine-tuned on limited robotic data overfit to specific instruction wording. LIBERO-Para systematically varies action expressions and object references to measure brittleness, finding significant performance drops from simple paraphrases. (huggingface.co)