01 Hackers Turned the Claude Code Leak Into a Malware Trap

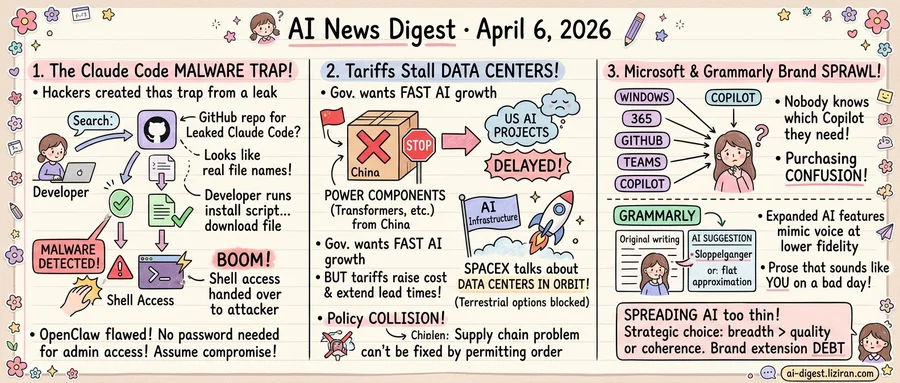

A developer finds a GitHub repository promising the leaked Claude Code source. The README looks right. File names match what security researchers described in public posts. So the developer clones it, runs the install script, and hands shell access to an attacker.

That sequence played out repeatedly in the days after Anthropic's Claude Code source surfaced online, according to Wired. Hackers repackaged the leaked files with embedded malware and distributed them across GitHub, Telegram, and developer forums. The poisoned bundles targeted the kind of person most likely to download them: developers curious enough about AI tooling internals to pull unverified code from strangers.

The social engineering is unusually precise. AI developers already run opaque agent tools that request broad system permissions. Downloading and executing source code from unofficial channels isn't a behavioral leap. It's a slight extension of what they do every workday. Attackers exploited that muscle memory. The bundled malware varied across distributions, Wired reported, but the vector stayed constant: clone a repo, run a script, hand over access.

The original leak didn't cause this damage directly. It created a distribution channel that attackers colonized faster than the security community could flag it. Each new blog post analyzing the source code drove more search traffic toward the poisoned repositories. Curiosity generated the audience. Attackers supplied the payload.

The Claude Code incident isn't isolated. OpenClaw, a popular open-source AI agent tool, separately disclosed a flaw that let unauthenticated attackers gain admin access to running instances. Ars Technica reported that exploitation required no credentials and no user interaction. Anyone who exposed an OpenClaw instance to the network gave remote attackers full control over the agent's execution environment. The publication advised affected users to assume compromise.

Two tools, two attack surfaces, one structural weakness: AI development software that assumes a trusted environment. The Claude Code leak created a poisoned supply chain. OpenClaw's flaw opened a runtime backdoor. Both show how fast the perimeter around AI developer tooling is dissolving.

The developer who cloned that repo wasn't careless. They were doing what developers do when interesting tools appear. Attackers now treat that curiosity as a reliable entry point, and the tooling ecosystem hasn't built the verification infrastructure to make caution easy.

02 Nearly Half of US AI Data Center Projects Stall Under the Administration's Own Tariffs

Nearly half of planned AI data center projects in the US now face delays, according to Ars Technica. The bottleneck: power infrastructure components that overwhelmingly come from China, the same country the Trump administration's tariff regime targets.

The White House has framed AI infrastructure as a national security priority. Executive orders have fast-tracked permitting. Officials have cast the buildout as essential to staying ahead of China in the AI race. But the transformers, switchgear, and other electrical equipment needed to power these facilities depend on Chinese manufacturing. Tariffs on those imports have driven up costs and extended lead times, stalling the very projects the administration wants to accelerate.

The result is a policy collision with no obvious resolution. One arm of the government demands speed. The other raises the price of the materials that speed requires. Domestic alternatives exist in theory but not at the scale or timeline the AI boom demands. US transformer production was already backlogged before the tariffs; adding trade barriers to the primary external supplier has deepened the shortage.

How blocked is the ground path? Blocked enough that SpaceX has been seriously discussing putting data centers in orbit. TechCrunch reported that the company is exploring whether orbital compute infrastructure could help justify part of its valuation. The concept is technically speculative and years from viability. But a company with SpaceX's engineering resources treating it as a real conversation signals how constrained terrestrial options have become.

The contradiction is structural. The administration's AI ambitions require a physical buildout. That buildout requires components. Those components come from the country the administration is trying to economically isolate. No executive order on permitting fixes a supply chain problem created by a different executive order on trade.

Domestic manufacturing could eventually close the gap. But "eventually" operates on a timeline measured in years, not quarters. The AI companies writing billion-dollar checks for compute capacity are not waiting years. They are delaying projects, revising timelines, and, apparently, looking up.

03 Microsoft and Grammarly Hit the Limits of AI Brand Sprawl

Microsoft now applies the "Copilot" label to products spanning Windows, Microsoft 365, GitHub, Dynamics 365, Power Platform, Azure, Edge, and Teams. A recent inventory by strategist Tey Bannerman attempted to catalog the full list and found the name attached to offerings with different pricing, different underlying models, and different target users. The connective tissue between them is a word, not a platform.

The inventory collected 784 points and 367 comments on Hacker News. Developers in the thread described a recurring client problem: nobody knows which Copilot they need, because Microsoft hasn't given them a way to tell. A brand designed to unify the company's AI efforts has instead become a source of purchasing confusion.

Grammarly is producing a different symptom from the same underlying condition. Stevie Bonifield at The Verge documented what she calls the "sloppelganger" effect: the tool's expanded AI features generate text that mimics a user's voice at lower fidelity. Grammarly built its reputation on catching errors. Now it offers to rewrite entire paragraphs, producing flat approximations of the user's own style. Users who accept the suggestions end up with prose that sounds like them on a bad day.

The surface problems differ. One company has a naming crisis; the other has a quality crisis. Both trace back to the same strategic choice: spreading AI assistance across every product surface before defining what each instance should do well. Microsoft chased breadth of branding; Grammarly chased breadth of capability. In both cases, per-touchpoint value thinned as the count grew.

Consumer goods companies named this pattern decades ago: brand extension debt. When one label covers too many products with too little differentiation, each new entry cheapens the rest. The AI industry is compressing that cycle into quarters instead of years. Boards expect AI presence across full product lines. Companies respond by stamping the label on everything and sorting out coherence later. The sorting-out phase has arrived.

Apple Approves Driver Enabling Nvidia eGPUs on Arm Macs: Apple signed off on a driver from the tinygrad project that allows Nvidia external GPUs to work with Apple Silicon Macs. The move reopens a hardware path that had been closed since Apple ended Nvidia support on macOS and shipped Apple Silicon Macs without eGPU support. (twitter.com)

Japan Deploys Physical AI Robots to Fill Chronic Labor Shortages: Japan is moving experimental robotics from pilot programs into production roles across industries that can't attract human workers. The country's aging population and shrinking workforce have made it a testing ground for physical AI at scale. (techcrunch.com)

Suno's Copyright Filters Fail to Block Protected Music: The Verge found that Suno's content moderation system, which is supposed to prevent users from generating copyrighted songs, fails repeatedly. Users can reproduce recognizable versions of protected tracks despite the platform's stated policy against copyrighted material. (theverge.com)

Google Adds Gemini-Powered Trip Planning to Maps: Google Maps now uses Gemini to generate full-day itineraries from natural-language prompts. The feature assembles stops, routes, and timing into a single plan within the Maps interface. (theverge.com)