01 Anthropic Built Its Most Powerful Model, Then Locked It Away

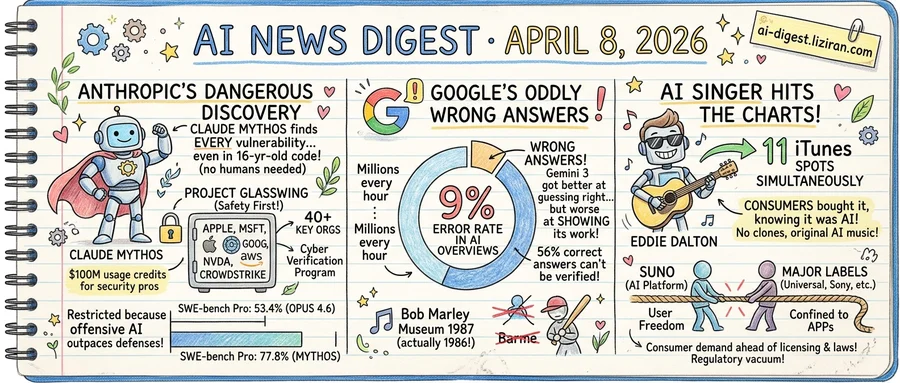

A bug in FFmpeg's video encoding code survived sixteen years and five million automated test runs. Claude Mythos, Anthropic's unreleased frontier model, found it without human guidance.

That discovery was one of thousands. During internal testing, Mythos identified zero-day vulnerabilities in every major operating system and web browser. The model chained Linux kernel flaws into full privilege escalation and found a 27-year-old OpenBSD TCP bug enabling remote crashes. On Firefox alone, it produced 181 working JavaScript exploits. Claude Opus 4.6, Anthropic's current flagship, managed two.

Anthropic's response was not to ship it.

Instead, the company announced Project Glasswing on Monday. The framework restricts Mythos to twelve partners: Apple, Microsoft, Google, Nvidia, AWS, and CrowdStrike, among others. Roughly forty additional organizations building critical infrastructure also get access. Security professionals can apply through a new Cyber Verification Program. Anthropic committed $100 million in usage credits for participants and $4 million to open-source security organizations including the Linux Foundation and Apache Software Foundation.

The decision carries real cost. Mythos scores 77.8% on SWE-bench Pro versus 53.4% for Opus 4.6, and 93.9% on SWE-bench Verified versus 80.8%. Those gains span general-purpose coding, not just security. Partners pay $25 per million input tokens and $125 per million output tokens. By restricting access, Anthropic forfeits the broader market for what would be its most capable commercial model.
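To make the pricing concrete, here is a back-of-envelope cost function at the quoted partner rates. Only the $25 and $125 per-million-token rates come from the announcement as reported here; the function name and the token counts in the example are hypothetical.

```python
# Back-of-envelope cost at the quoted Mythos partner rates.
# Rates are from the article; the example token counts are hypothetical.
INPUT_USD_PER_MILLION = 25.0
OUTPUT_USD_PER_MILLION = 125.0


def request_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Return the dollar cost of one request at per-million-token rates."""
    return (input_tokens * INPUT_USD_PER_MILLION
            + output_tokens * OUTPUT_USD_PER_MILLION) / 1_000_000


# Hypothetical audit pass: read 200,000 tokens of source, write 20,000 tokens.
print(f"${request_cost_usd(200_000, 20_000):.2f}")
```

Because output tokens cost five times as much as input tokens, workloads that generate long reports or exploit code would be dominated by the output term.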

The company's reasoning: offensive capabilities outpace current defenses. Mythos operates largely autonomously, finding and exploiting vulnerabilities without human steering. Releasing it before the software industry patches what it finds would arm attackers first.

Greg Kroah-Hartman, a senior Linux kernel maintainer, described the shift bluntly: "Something happened a month ago, and the world switched. Now we have real reports." Curl creator Daniel Stenberg confirmed receiving reports that were "really good." Simon Willison, the developer and AI commentator, said the restriction "sounds necessary to me."

Not everyone agrees. The Hacker News threads drew over 800 comments across two posts. Critics questioned whether Anthropic was manufacturing scarcity and whether restricted access creates a two-tier security system. The company published Mythos's full system card alongside the announcement, detailing capabilities and benchmarks. Some read that transparency as strategic: a way to prove the threat justifies the lockdown.

Anthropic says it plans safeguards for eventual broader deployment. It has not set a date.

02 Google's AI Overviews Deliver Millions of Wrong Answers Per Hour, Testing Shows

Google's AI Overviews answer questions incorrectly 9% of the time, according to an analysis of 4,326 searches conducted by AI startup Oumi on behalf of the New York Times. Google processes over 5 trillion searches per year. If AI Overviews appeared on every one of them, a 9% failure rate would produce tens of millions of wrong answers every hour.
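The hourly figure follows from simple division. This sketch treats the result as an upper bound: it assumes every search shows an AI Overview and that the 9% error rate from the test sample holds across real queries. Neither assumption comes from the analysis itself.

```python
# Back-of-envelope check of the "tens of millions per hour" figure.
# Assumptions (not from the analysis): every search shows an AI Overview,
# and the 9% sample error rate applies to all real queries.
SEARCHES_PER_YEAR = 5e12   # "over 5 trillion" per year
ERROR_RATE = 0.09          # 9% incorrect in Oumi's test
HOURS_PER_YEAR = 365 * 24  # 8,760 hours

searches_per_hour = SEARCHES_PER_YEAR / HOURS_PER_YEAR
wrong_per_hour = searches_per_hour * ERROR_RATE
print(f"{searches_per_hour:,.0f} searches/hour -> {wrong_per_hour:,.0f} wrong answers/hour")
```

Under those assumptions it works out to about 571 million searches and roughly 51 million wrong answers per hour; if only a fraction of searches surface an Overview, the total scales down in proportion.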

The testing used SimpleQA, an industry-standard factual accuracy benchmark, and ran in two rounds. In October 2025, with Gemini 2 powering AI Overviews, accuracy sat at 85%. By February, after an upgrade to Gemini 3, it reached 91%. That six-point gain came with a tradeoff: 56% of correct answers could not be verified through the sources Google cited, up from 37% under Gemini 2. The model got better at guessing right while getting worse at showing its work.

The errors are concrete. AI Overviews listed the Bob Marley Museum as opening in 1987 instead of 1986. For Yo-Yo Ma, it linked to the correct Classical Music Hall of Fame page, then stated no induction record existed. Dick Drago's death entry carried the right age but the wrong date. None were adversarial prompts or trick questions.

Google disputes the findings. Spokesperson Ned Adriance called the methodology flawed, saying SimpleQA "contains incorrect information" and doesn't reflect typical search patterns. Google has not published its own accuracy benchmarks.

The accuracy question runs alongside a subtler concern. Research published in March 2026 in Trends in Cognitive Sciences, led by USC Dornsife psychologist Morteza Dehghani, argues that LLM outputs show less variation than human writing and disproportionately reflect the language and values of Western, educated, industrialized societies. "The concern is not just that LLMs shape how people write or speak, but that they subtly redefine what counts as credible speech, correct perspective, or even good reasoning," said co-author Zhivar Sourati.

A search engine that is wrong 9% of the time and uniform all of the time poses a problem that accuracy gains alone cannot solve.

03 AI Singer Holds Eleven iTunes Spots as Label Licensing Talks Stall

Apple's iTunes singles chart now features an openly artificial artist. Eddie Dalton, whose creators disclosed that every track is AI-generated, holds eleven positions simultaneously. No cloned vocals, no impersonation of existing musicians. Consumers bought the songs knowing what they were, pushing them past human competition on a major storefront.

The Financial Times reported that Suno, the leading AI music generation platform, has reached an impasse in licensing talks with Universal Music Group and Sony Music Entertainment. Universal wants AI-generated tracks confined inside apps, never distributed on streaming or download platforms. Suno wants users to share what they create wherever they choose. The talks have produced no resolution.

The two signals point to one structural gap. Consumer demand and distribution infrastructure accepted AI music before the commercial framework caught up. iTunes makes no distinction between human and AI-generated tracks in its chart rankings. No statute governs royalties on AI-original compositions, and no license template covers the chain from generation to storefront. The product shipped into a regulatory vacuum, and the vacuum is filling with revenue.

Dalton's chart presence is legally distinct from earlier incidents involving AI-cloned vocals of real musicians. Those cases triggered right-of-publicity claims because they involved deception and unauthorized likeness use. Dalton competes transparently, which places it in territory where no existing legal framework applies. Impersonation has a remedy. Original AI creation does not.

The stalemate carries outsize consequences. Universal and Sony together control roughly 60% of global recorded music revenue, giving them effective veto power over any industry-wide AI licensing deal. Without their agreement, Suno operates under ongoing legal risk. Every AI track that reaches a public chart sharpens the conflict: labels see distribution they cannot govern, while Suno sees demand it cannot fully monetize. The longer negotiations drag, the more the market moves without rules.

Anthropic Partners with Google and Broadcom for Multi-Gigawatt Compute Buildout

Anthropic expanded its partnership with Google Cloud and Broadcom to secure multiple gigawatts of compute capacity. The deal spans custom chip development and large-scale infrastructure deployment. (anthropic.com)

Ars Technica Publishes New Sam Altman Profile

Ars Technica released a profile of OpenAI CEO Sam Altman framed as an examination of AI industry leadership at large. The piece uses Altman as a case study for broader questions about how major AI companies and their founders operate. (arstechnica.com)

GrandCode AI System Reaches Grandmaster Level in Competitive Programming

Researchers released GrandCode, a multi-agent reinforcement learning system for competitive programming contests. It claims to surpass Google's Gemini 3 Deep Think, the previous best AI result, which placed 8th without live competition constraints. (huggingface.co)

Gary Marcus Scrutinizes Medvi, the Self-Described "$1.8 Billion AI Company"

Gary Marcus published an investigation into Medvi, which bills itself as the first $1.8 billion AI company. The piece argues that AI plays a smaller role in the business than its branding suggests. (garymarcus.substack.com)

Spotify Extends AI-Powered Playlists to Podcast Discovery

Spotify expanded its Prompted Playlists feature to podcast recommendations for Premium subscribers. The tool, in beta since December for music, generates custom discovery feeds from natural-language prompts. (theverge.com)

TriAttention Shrinks KV Cache Memory for Long Reasoning Chains

TriAttention compresses key-value caches using trigonometric properties of rotary position embeddings. The method works in pre-RoPE space, avoiding position-dependent distortions that degrade top-key selection during extended reasoning. (huggingface.co)
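The summary does not spell out TriAttention's scoring rule. As a minimal sketch of the trigonometric property it is described as building on, and not the method itself, the snippet below applies a rotary position embedding (RoPE) rotation to a single 2-D query/key feature pair. It shows that the attention score depends only on the position offset and can be computed from the pre-RoPE vectors using a cosine and sine of that offset. The vectors, positions, and frequency `theta` are hypothetical values; real RoPE applies many such rotations at different frequencies across the head dimension.

```python
import math


def rotate(pair, pos, theta):
    """Apply a 2-D RoPE rotation by angle pos * theta to one (x, y) feature pair."""
    x, y = pair
    c, s = math.cos(pos * theta), math.sin(pos * theta)
    return (x * c - y * s, x * s + y * c)


def dot(a, b):
    return a[0] * b[0] + a[1] * b[1]


q = (0.8, -0.3)  # hypothetical pre-RoPE query pair
k = (0.5, 0.9)   # hypothetical pre-RoPE key pair
theta = 0.1      # hypothetical rotation frequency for this pair

# Query at position m, key at position n: the rotated score depends only on m - n.
m, n = 10, 4
s1 = dot(rotate(q, m, theta), rotate(k, n, theta))
s2 = dot(rotate(q, m + 96, theta), rotate(k, n + 96, theta))  # same offset, shifted

# Closed form from pre-RoPE vectors: cos/sin of the offset angle, no per-position rotation.
delta = (m - n) * theta
closed_form = math.cos(delta) * dot(q, k) + math.sin(delta) * (q[0] * k[1] - q[1] * k[0])
print(s1, s2, closed_form)
```

All three values agree up to floating-point rounding, which is why reasoning in pre-RoPE space can sidestep the position-dependent rotation applied to cached keys.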

MinerU2.5-Pro Shows Document Parsing Bottleneck Is Data, Not Architecture

The MinerU2.5-Pro team found that top document parsers across different architectures fail on identical hard samples. Their fix: systematic training data engineering rather than model design changes. (huggingface.co)

InCoder-32B-Thinking Targets Chip Design and Embedded Systems Code

InCoder-32B-Thinking is a 32B model trained with error-driven chain-of-thought synthesis for industrial code domains. It generates reasoning traces for chip design, GPU optimization, and embedded systems where expert training data is scarce. (huggingface.co)

MIT Technology Review Argues for Agent-First Workflow Design

MIT Technology Review published a case for redesigning business processes around AI agents from the start. The piece contends that grafting agents onto legacy workflows limits their ability to execute end-to-end autonomously. (technologyreview.com)

AURA System Enables Real-Time Question Answering Over Live Video

Researchers introduced AURA, a video language model built for continuous observation and open-ended Q&A on live streams. Current video LLMs handle offline clips but struggle with ongoing feeds that require persistent attention and timely responses. (huggingface.co)