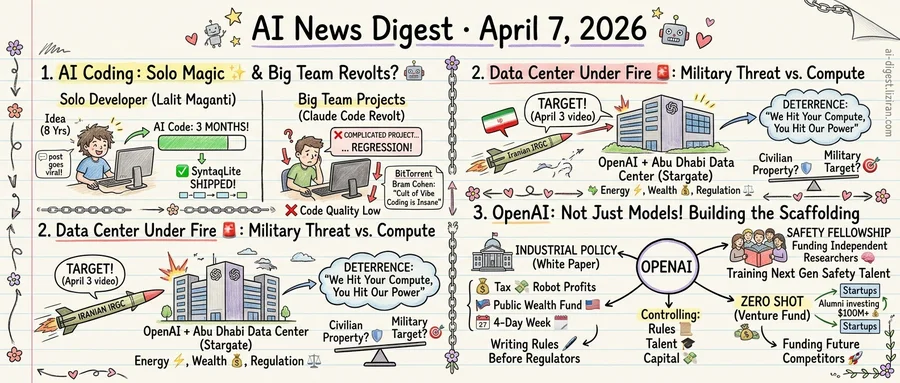

01 A Developer Shipped His Eight-Year Project in Three Months with AI. That Week, Claude Code Users Revolted.

Lalit Maganti spent eight years wanting to build SyntaqLite, a SQL query engine for SQLite databases. He had the domain expertise but lacked the full-stack skill and free time. Working with AI coding tools, he shipped it in three months and wrote about the experience. The post went viral, drawing hundreds of comments from developers on Hacker News.

The story resonated because it matched a feeling many developers share: AI as a force multiplier for solo builders tackling projects they'd otherwise abandon. Maganti's post read as proof of concept for anyone with domain expertise but limited bandwidth. Not production infrastructure. Just the thing he'd always wanted to build.

The same week, a different signal arrived. A GitHub issue filed against Anthropic's coding agent Claude Code accumulated 667 Hacker News points and 427 comments. Fewer than 2% of HN posts ever clear 500 points. Users reported that recent updates had degraded the tool for complex engineering tasks. Complaints centered on lower code quality, failure to follow existing project conventions, and outputs that took more effort to correct than the work would have taken to do by hand. Several users described the regression as severe enough to halt adoption on team projects.

Then Bram Cohen weighed in. The BitTorrent inventor published "The Cult of Vibe Coding is Insane," calling the practice dogfooding run amok. Cohen argued that developers who build AI coding tools test them by coding with AI, producing a closed feedback loop where enthusiasm substitutes for rigor. In his framing, the tools' weaknesses become invisible to the people with the most influence over fixing them.

Cohen's critique and the Claude Code complaints pointed at the same fault line. AI coding tools perform well on greenfield projects with limited scope. A solo developer can iterate quickly, accept rough edges, and course-correct by hand. But maintaining a large codebase requires the tool to understand existing architecture, follow constraints, and produce code that survives review. Those are different problems.

Maganti's success story and the Claude Code revolt aren't contradictory. They describe two use cases wearing one label. Both reached the top of Hacker News the same week, from the same community. What separated them wasn't optimism versus pessimism. It was project scope.

02 Iran's Military Names OpenAI's Abu Dhabi Data Center as a Missile Target

Iran's Islamic Revolutionary Guard Corps published a video on April 3 identifying OpenAI's planned Stargate data center in Abu Dhabi as a retaliatory strike target. The video, posted to an Iranian state-backed news outlet's X account, states the IRGC would hit the facility if the United States attacks Iranian power plants. A sovereign military force has now publicly designated a specific AI infrastructure project for potential destruction.

The video was specific. It did not gesture broadly at American interests in the Gulf: the IRGC named the Stargate project and the city of Abu Dhabi. According to TechCrunch, Iran says it will target US-linked data centers with new missile capabilities as the standoff between Washington and Tehran escalates.

The Stargate initiative represents one of the largest planned concentrations of AI compute outside US borders. OpenAI and its partners have positioned Abu Dhabi as a key node in their international buildout. That decision followed a logic familiar across the industry: Gulf states offer cheap energy, favorable regulation, and sovereign wealth capital. Hyperscale data centers consolidate where power is abundant and politics are friendly.

That logic carries a military corollary. A single facility housing billions of dollars in GPU clusters is both an efficiency optimization and a single point of failure. Power plants have been wartime targets for decades. Data centers have not. The IRGC video suggests that distinction is ending.

Iran frames the threat as deterrence: strike our power grid, we strike your compute. Washington and its partners treat Gulf AI buildouts as commercial ventures beyond the scope of military conflict. Both positions rest on boundaries that have not been tested.

GPU clusters, cooling systems, and fiber links have been treated as civilian commercial property. No country has struck a major data center in a military operation. That status hasn't changed. The conversation around it has.

03 OpenAI Pushes Into Policy, Talent, and Venture Capital in a Single Week

A robot tax. Public wealth funds. A four-day workweek. OpenAI's new industrial policy white paper reads less like a corporate blog post and more like a governing agenda. The company published it the same week it announced a safety research fellowship and its alumni revealed a venture fund targeting $100 million. Three moves, none of which ship a model.

The white paper proposes taxing AI-driven corporate profits to fund public wealth accounts for every American. It calls for expanded safety nets for displaced workers and a shorter standard workweek as automation absorbs labor hours. According to TechCrunch, the document blends redistribution with capitalism, positioning the proposals alongside active congressional debates. OpenAI frames these as "people-first" ideas. In practice, the company is writing regulatory architecture before regulators do.

The Safety Fellowship, also announced last week, funds independent alignment research outside OpenAI's own labs. Its pilot targets early-career researchers working on problems that OpenAI itself has defined as priorities. The company describes the program as building the next generation of safety talent. Researchers trained under that framing carry it into academia, government advisory roles, and competing organizations.

Then there's Zero Shot. The venture fund, staffed by OpenAI alumni, has already written checks to undisclosed startups. It aims to raise $100 million for its first fund, according to TechCrunch. Former employees directing early-stage capital means OpenAI's technical preferences propagate even when portfolio companies don't build on its APIs. The fund creates a financial orbit without requiring direct corporate investment.

Each initiative targets a different surface: who writes the rules, who trains the next safety cohort, who funds the next company. Regulation tends to favor the drafter. The funder of safety research defines what counts as safe. Alumni with venture capital pick which competitors get built.

The connecting thread is positional. OpenAI is constructing institutional scaffolding around the AI industry while its competitors focus on what goes inside it.

Generalist Robots GEN-1 Model Reaches 99% Task Reliability

GEN-1 handles tasks from folding boxes to repairing vacuums, responding to mid-task disruptions and improvising moves outside its training data. The model reaches production-grade success rates across diverse physical manipulation tasks. (arstechnica.com)

ChatGPT Adds Direct Integrations with DoorDash, Spotify, Uber, and Others

OpenAI opened ChatGPT to third-party app integrations, letting users order food, book rides, and control music within the chat interface. Canva, Figma, and Expedia are also among the launch partners. (techcrunch.com)

Spain's Xoople Raises $130M Series B to Build AI-Ready Earth Maps

Xoople will use the funding to map Earth's surface for AI applications using its own satellite constellation. The company signed a deal with L3Harris to build the sensors for its spacecraft. (techcrunch.com)

Google Releases Offline-First AI Dictation App on iOS

The app runs Gemma models locally, requiring no internet connection for transcription. Google is targeting the niche occupied by Wispr Flow and similar voice-to-text tools. (techcrunch.com)

Alibaba's Accio Reshapes Product Decisions for Small Online Sellers

Small e-commerce operators now use AI to identify which products to manufacture and sell. The tool analyzes market signals to guide sourcing and design decisions for independent brands. (technologyreview.com)

Self-Distilled RLVR Lets One Model Serve as Both Teacher and Student

A new training method combines on-policy self-distillation with reinforcement learning from verifiable rewards. The model generates its own dense training signals instead of relying on a larger teacher, matching or exceeding standard distillation results. (huggingface.co)

Sliding-Window Baseline Matches Complex Streaming Video Models

SimpleStream feeds only the most recent N frames to a standard vision-language model and matches or beats 13 published streaming video methods on OVO-Bench and StreamingBench. The finding challenges the assumption that long-video understanding requires specialized memory architectures. (huggingface.co)

MIT Technology Review Identifies Key Missing Metric in AI Jobs Debate

Most AI-and-employment analyses rely on surveys or economic models, not direct measurement. The piece argues that one specific data point, task-level time tracking across occupations, would ground the debate in observable change rather than speculation. (technologyreview.com)

Developer Open-Sources GuppyLM, a Minimal LLM Built for Learning

GuppyLM is a small, readable language model designed to teach how transformers work. The project strips away production complexity to expose core mechanics. (github.com)