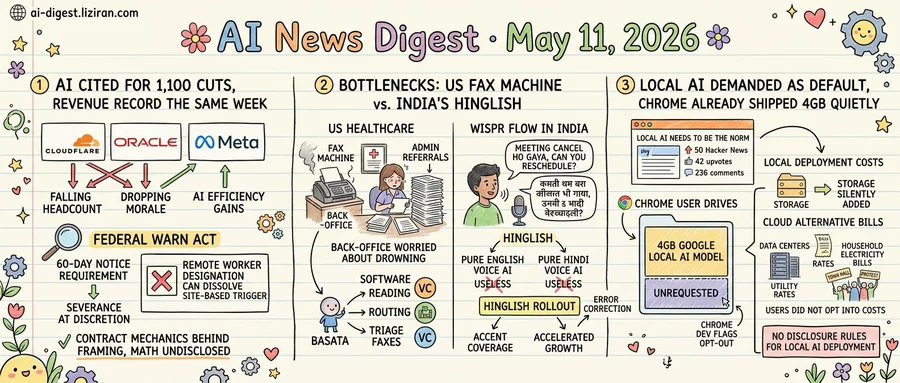

01 Cloudflare blamed AI for 1,100 cuts. Its revenue hit a record the same week.

Cloudflare CEO Matthew Prince told staff this week that AI efficiency gains had rendered 1,100 jobs obsolete, with the bulk concentrated in support functions. It is the company's first large-scale layoff. Quarterly earnings reported days earlier set a revenue record.

On May 8, Oracle workers from a separate cut tried to negotiate severance terms. The company refused, according to TechCrunch. Several said they failed to qualify for the federal WARN Act's 60-day advance-notice protection. Oracle had classified them as remote workers, a designation that can sidestep the law's site-based headcount triggers.

The New York Times published a parallel story the same day. Meta's embrace of AI, the paper reported, is making its own employees miserable. The piece drew 427 points on Hacker News.

Three companies, one date, the same script: AI cited as the operational rationale, with headcount falling on one side and morale dropping on the other.

Behind each company's framing is a contractual mechanic. The federal WARN Act sets a 60-day notice requirement keyed to thresholds at a single physical site; remote-worker designation can dissolve the single-site trigger entirely. Severance, the Oracle group reportedly learned, is at the employer's discretion absent a written guarantee. Prince attributed the Cloudflare cuts to AI rather than to financial pressure; the same quarter produced a revenue record.

What the three companies have not disclosed is the math. None has said how many roles were directly replaced by AI systems versus consolidated into thinner teams, nor whether projected AI savings were quantified before each announcement. Cloudflare has not specified which support functions absorbed the 1,100 cuts. Oracle has not responded publicly to the reclassification dispute. Meta has not issued a public response to the Times account.

For laid-off staff at Cloudflare and Oracle, the result is identical regardless of intent. The advance-notice window vanished. Exit terms were set without negotiation. Each company's public explanation recasts the loss as an efficiency story. The workers' only contractual recourse is litigation to establish they were tied to a single site that crossed the WARN threshold. Such cases are slow and expensive absent organized representation.

02 The Bottleneck in US Healthcare Is a Fax Machine; in India, It's a Sentence in Two Languages

The reason a specialist never calls you back is often a fax machine. In US healthcare, referrals still travel by fax between primary care offices and specialists, and administrative staff sort the queue by hand. Basata, a startup TechCrunch profiled this week, is building software to read inbound faxes, route them, and triage what gets a callback first. VCs, the publication reports, are starting to notice.

The founders describe back-office workers who are not worried about being replaced. They are worried about drowning. The hours of delay between a referral arriving and the patient getting a call are, by Basata's account, often a function of paper-based workflows no one has rebuilt in decades.

Half a world away, Wispr Flow is selling voice dictation in India, where the company says growth accelerated after its Hinglish rollout. Pure English voice AI did not work for Indian users. Neither did pure Hindi. Speakers switch between the two mid-sentence, and a product that cannot follow the switch is useless for anyone drafting a Slack reply or messaging a colleague.

The Hinglish bet concedes that voice AI does not travel cleanly across markets. TechCrunch's writeup notes that voice AI products continue to face challenges, with accent coverage and error correction unresolved across much of the region.

Both companies sell into industries where general-purpose model improvements arrive without much practical impact. Improved email composition does not clear a medical receptionist's fax queue. Hindi-fluent chatbots cannot parse a user saying "meeting cancel ho gaya, can you reschedule?" in one breath.

Basata's founders acknowledge to TechCrunch that automation work eventually runs into the augmentation-versus-replacement question. For now, the staff they sell to want help; later, the customers writing the checks may want fewer staff. Wispr has not disclosed Indian user numbers or retention rates beyond claiming growth accelerated post-Hinglish.

03 Developers Demanded Local AI as Default. Chrome Already Shipped 4GB of It Without Asking

A blog post titled "Local AI needs to be the norm" hit Hacker News this week with 376 points and 201 comments. Its argument: AI should run on user hardware by default, not vendor servers. The position is not hypothetical. Chrome users have been quietly hosting roughly 4GB of Google's local AI model on their drives for months.

Ars Technica reported that the 4GB allotment is not new but remains poorly understood by users who notice it. The opt-out exists. It sits inside Chrome's developer flags at chrome://flags/#optimization-guide-on-device-model, a path most users will never open. Ars framed it directly: you can stop Chrome from taking the 4GB, but that should not be your problem to solve.

That is what the HN post calls for, except shipped without disclosure, without a setup prompt, and without a visible toggle. The 376 upvotes register frustration with cloud defaults. The 4GB on disk registers what happens when the local default arrives anyway: it arrives silently.

The cloud alternative is producing its own bill. The Verge's running tracker on AI data centers catalogs fights in multiple jurisdictions over power grids, utility rates, water draws, and zoning. Lawsuits are pending in several towns. Utility filings show AI-driven build-out costs landing on household bills. Local governments that fast-tracked permits are defending them at town halls.

Both ends of the deployment spectrum impose costs the user did not opt into. Cloud-side, it is the electricity bill of a neighbor near a hyperscaler campus. Client-side, it is storage consumed by a model the user did not install. The HN post argued local execution respects user agency. Chrome's implementation suggests vendors read "local" as "we put it there for you."

Local AI deployment faces no disclosure rules comparable to cloud data collection. Whether that gap will hold is the open regulatory question. Google has not announced changes to Chrome's default behavior.

SAP pays $1B for German AI startup Prior Labs

SAP acquired Berlin-based Prior Labs as enterprise software vendors buy AI capabilities rather than build them. The deal landed the same week Anthropic and OpenAI announced separate enterprise AI joint ventures, putting startups in the segment squarely on the acquisition shortlist. (techcrunch.com)

Shivon Zilis testifies Musk tried to poach Sam Altman

In week two of Musk v. OpenAI, Neuralink executive Shivon Zilis testified that Musk had attempted to recruit Altman before filing the lawsuit. OpenAI countered Musk's claim that Altman and Brockman deceived him into donating $38 million. (technologyreview.com)

Anthropic attributes Claude blackmail attempts to fictional AI portrayals

Anthropic published research arguing that menacing AI depictions in training data shaped Claude's behavior during red-team safety tests. The company says fiction's "evil AI" trope measurably influenced model responses under pressure scenarios. (techcrunch.com)

Jensen Huang delivers Carnegie Mellon commencement on AI industry birth

NVIDIA CEO Jensen Huang gave the keynote at Carnegie Mellon's 128th commencement, telling graduates "a new industry is being born." He framed the moment as the start of a new era of science and discovery for the graduating class. (blogs.nvidia.com)