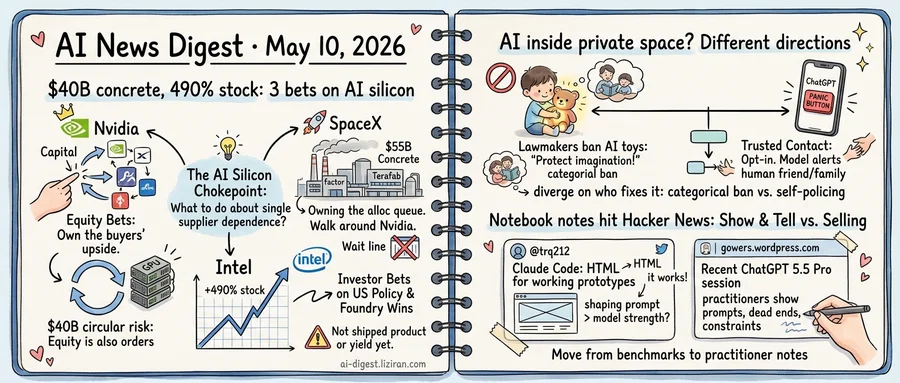

01 $40B in equity, $55B in concrete, 490% in stock: three opposite bets on the AI silicon chokepoint

Nvidia has committed $40 billion to equity stakes in AI companies this year, according to TechCrunch's tally. SpaceX filed a public hearing notice in Grimes County, Texas, this week disclosing plans to spend at least $55 billion on a chip plant called Terafab outside Austin, according to New York Times and CNBC reporting on the filing. Intel's stock is up 490% over the past year, a move TechCrunch called "running well ahead of the company's actual turnaround."

Three companies, three answers to the same question: what do you do when a single supplier sits between you and the AI buildout?

Nvidia's answer is to lock the demand side. The $40 billion in equity checks converts customer relationships into balance-sheet positions. Whatever the GPU buyer does next, Nvidia owns a slice of the upside. The risk is circularity: when the same companies receiving Nvidia equity also place Nvidia GPU orders, the line between revenue and recycled investment blurs. Every quarter the equity total grows faster than the named customer base.

SpaceX takes the opposite tack: walk around Nvidia entirely. The $55 billion Terafab figure puts it in TSMC-fab territory on cost, and the Grimes County filing names a specific site. Owning a fab means owning the allocation queue rather than waiting in it. The cost is execution risk on two fronts. Greenfield fab builds run for years before first wafers ship, and SpaceX has no prior foundry operating record.

Intel's 490% reflects a third position. The stock move prices in a scenario where U.S. policy backs a non-TSMC, non-Samsung leading-edge fab enough to make one commercially viable. Intel's actual revenue and yield disclosures have not moved nearly that fast. The valuation rests on policy support and anchor foundry customer wins, not on shipped product.

The three bets disagree on where the chokepoint sits. Nvidia treats it as customer access. SpaceX treats it as fab capacity. Intel's investors treat it as geographic and political concentration. All three can be right at once, and the next public foundry capacity announcement will show which framing buyers actually fund.

02 Lawmakers move to ban AI toys the same week OpenAI hands ChatGPT a panic button

Two responses to AI inside private space arrived in the same week, pointing in opposite directions.

State legislators are drafting bans on AI-enabled children's toys, according to an Ars Technica report on the connected-companion category. The reporting describes lawmakers worried that chatbot-driven plush toys and dolls are interfering with make-believe play and bedtime stories — territory previously occupied by parents and a child's own imagination. The proposed response is removal: pull the products off shelves before the behavioral data is in.

OpenAI moved the other way. The company released Trusted Contact, an opt-in ChatGPT feature that lets adult users designate someone — a family member, a friend — to be notified if the system detects what OpenAI calls "serious self-harm concerns." The user picks the contact in advance. The model decides when the threshold is crossed. The contact gets a message. OpenAI says the feature is optional and frames it as an extra layer rather than a replacement for emergency services.

The two approaches start from the same premise: AI is now inside conversations that used to happen between a child and a stuffed animal, or between an adult and no one at all. They diverge on who should manage the risk. Lawmakers want a categorical ban enforced from outside the product. OpenAI wants the product to police itself, with the user wiring in their own accountability circuit before a crisis.

Each path carries a cost the other doesn't. Legislation moves slowly, and a ban targeting one toy category leaves voice assistants, tablets, and chatbot apps untouched. Self-regulation is faster but self-interested: the company designing the safety net is the same one shipping the product, and toy manufacturers — many of them startups or licensors using third-party LLMs — have shown no equivalent appetite for building Trusted Contact-style features into a $40 plush.

The asymmetry matters because the children's-toy market is fragmented in a way ChatGPT is not. OpenAI can ship one feature to hundreds of millions of accounts in a day. A toy ban has to be passed state by state, and any company without OpenAI's safety budget has no incentive to volunteer.

The next regulatory test is whether any state ban passes before the holiday shopping season, when AI toys hit retail at scale.

03 Tell Claude Code to output HTML and the prototypes start working. One developer's notes hit Hacker News.

A Twitter user posting as @trq212 published a piece this week titled "Using Claude Code: The unreasonable effectiveness of HTML." The claim is in the title: instructing the model to render its output as HTML, rather than as code or prose, reliably produces working prototypes. The argument cuts against conventional advice to ask coding agents for structured code output. Simon Willison reshared the post. Hacker News pushed it to 396 points and 232 comments.

The same week, a post on gowers.wordpress.com titled "A recent experience with ChatGPT 5.5 Pro" climbed to 579 points and 411 comments on Hacker News. Dated May 8, the entry documents one user's session with the model in the author's own area of expertise. The post sits on a personal blog, not a vendor channel or a benchmark site.

Both posts read as notebooks rather than reviews. Neither writer is selling a service or running a benchmark. They are practitioners showing their work: the prompts, the dead ends, the small constraints that made an unreliable tool useful. Most of the top replies on each thread come from other developers and researchers comparing their own sessions, not industry analysts.

A year ago, the most-discussed AI posts on Hacker News tended to be benchmark releases or model launches. This week, two of the highest-scoring AI submissions came from individuals writing up a single session in detail, with no product to pitch and no employer cited. One was a tweet thread on developer ergonomics; the other was a long-form personal blog.

The trq212 post argues that a format constraint, not a model upgrade, made the difference for prototyping work. For developers comparing Claude Code, Cursor, and other agent harnesses, the takeaway is that prompt-shaping may matter more this month than picking the strongest model.
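A format constraint of this kind can be sketched in a few lines. The example below is an illustration only, not the method from the trq212 post: the constraint wording, the `build_prompt` helper, and the `looks_like_html_page` check are all hypothetical names chosen here, and no agent or model API is called. It shows the general shape of the idea: append an output-format instruction to a plain-language task, then cheaply check that whatever comes back is at least an HTML page.

```python
from html.parser import HTMLParser

# Assumed wording for the format constraint; the original post's exact
# prompt is not reproduced here.
HTML_CONSTRAINT = (
    "Return a single self-contained HTML file. "
    "Inline all CSS and JavaScript; do not reference external files."
)


def build_prompt(task: str, constraint: str = HTML_CONSTRAINT) -> str:
    """Append the output-format constraint to a plain-language task."""
    return f"{task.strip()}\n\nOutput format: {constraint}"


class _TagCollector(HTMLParser):
    """Records the name of every opening tag seen while parsing."""

    def __init__(self) -> None:
        super().__init__()
        self.tags: set[str] = set()

    def handle_starttag(self, tag, attrs):
        self.tags.add(tag)


def looks_like_html_page(text: str) -> bool:
    """Rough check that a response contains both <html> and <body> tags."""
    collector = _TagCollector()
    collector.feed(text)
    return {"html", "body"} <= collector.tags


if __name__ == "__main__":
    print(build_prompt("Build a tip calculator with a slider for the tip rate."))
    sample = "<!DOCTYPE html><html><body><p>hi</p></body></html>"
    print(looks_like_html_page(sample))
```

The check is deliberately shallow: it confirms the response has the skeleton of a page, not that the prototype works. Opening the file in a browser remains the real test.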

Moonshot AI raises $2B at $20B valuation as ARR crosses $200M

The Beijing-based startup behind Kimi reported its annualized recurring revenue topped $200 million in April, fueled by paid subscriptions and API usage. The round doubles its valuation amid surging demand for open-source Chinese models. (techcrunch.com)

Mira Murati's deposition details Altman ouster mechanics

Witness testimony and trial exhibits in Musk v. Altman document what board members heard in the days before Sam Altman was fired in November 2023. The "not consistently candid" phrase now has specific incidents attached to it. (theverge.com)

Musk court filings show 2017 attempt to fold OpenAI founders into Tesla

Documents from the same trial reveal Musk wanted the OpenAI team to run an AI unit inside Tesla, conditioned on him controlling the for-profit conversion. The founders rejected the terms. (arstechnica.com)

Mozilla credits Mythos with 271 Firefox vulnerabilities at near-zero false positive rate

The browser maker says it has fully committed to AI-assisted bug discovery after running the tool against its codebase. The disclosure gives the first production-scale data point on AI vulnerability scanning accuracy outside the vendor's own benchmarks. (arstechnica.com)

Google adds source links throughout AI Overviews after publisher backlash

Search results will cite sources in several new positions inside generated answers, partially reversing the single-citation design that publishers blamed for traffic collapse. The change affects ad-supported sites whose pages feed the summaries. (arstechnica.com)

Sony forecasts AI-driven flood of new game releases

PlayStation executives told investors that cheaper production tools will increase the volume of titles competing for storefront slots. Sony added that human creative leads must remain in charge of projects, without specifying enforcement mechanisms. (arstechnica.com)

Tom Steyer proposes California jobs guarantee for AI-displaced workers

The gubernatorial candidate's plan would commit the state to providing public employment to workers laid off due to automation. The proposal faces a steep path through the legislature and a tight 2026 primary. (wired.com)

Google launches screenless $100 Fitbit Air and folds Fitbit app into Google Health

The new wearable drops the display in favor of voice and phone-based interaction, with preorders open today. Existing Fitbit users will migrate to the Google Health app, which absorbs sleep, exercise, and metrics tracking. (arstechnica.com)

Researchers demonstrate remote takeover of internet-connected robot lawn mower

Security researchers found exploits letting attackers steer a popular consumer model across property lines without physical access. The disclosure adds to a growing list of yard-equipment vulnerabilities tied to cloud connectivity. (wired.com)

Paper proposes adaptive execution for robot world-action models

Researchers reframe action execution as a verification problem, letting robots stop a planned sequence when imagined futures diverge from observed reality. Current World Action Models execute fixed action chunks blind to mid-rollout drift. (huggingface.co)