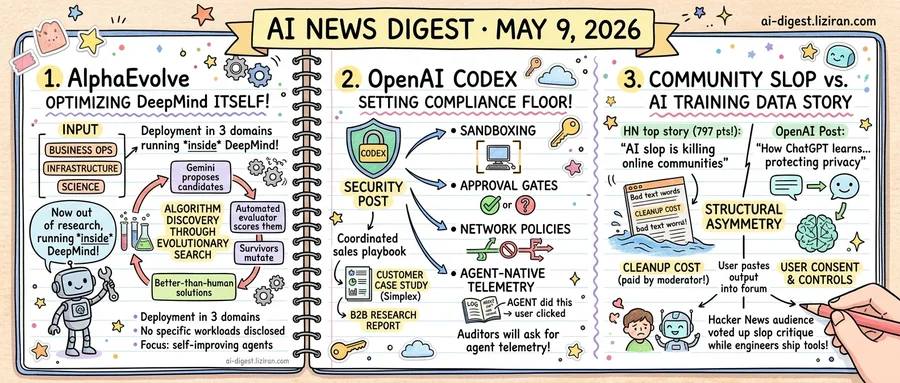

01 AlphaEvolve, DeepMind's research agent, is now optimizing DeepMind itself

AlphaEvolve started as a Gemini-powered coding agent that DeepMind presented as research. This week the lab published the agent's first multi-domain deployment ledger, and its answer to "where does it run now" is: inside DeepMind itself, on the three classes of problems the company is willing to name (business operations, infrastructure, and science).

The agent's job is algorithm discovery through evolutionary search. Gemini proposes candidate programs. An automated evaluator scores them. Survivors mutate. Over many rounds, the loop converges on solutions that outperform what human engineers wrote. That mechanism has not changed. The scope did.
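The propose, score, select, mutate loop described above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not DeepMind's implementation: the Gemini proposer is replaced by a hypothetical `gaussian_mutation` function that randomly perturbs a parameter vector, and the automated evaluator is a hypothetical `toy_score` that rewards closeness to a fixed target. Only the loop structure mirrors the description.

```python
import random
from typing import Callable, List, Tuple

# Toy stand-in for a candidate program: a vector of numeric parameters.
Program = List[float]


def evolve(
    seed: Program,
    evaluate: Callable[[Program], float],
    propose: Callable[[Program, random.Random], Program],
    population_size: int = 20,
    survivors: int = 5,
    rounds: int = 200,
    rng_seed: int = 0,
) -> Tuple[Program, float]:
    """Repeat: score every candidate, keep the top `survivors`, mutate them to refill."""
    rng = random.Random(rng_seed)
    population = [seed] + [propose(seed, rng) for _ in range(population_size - 1)]
    for _ in range(rounds):
        # Automated evaluator ranks candidates; higher score is better.
        ranked = sorted(population, key=evaluate, reverse=True)
        parents = ranked[:survivors]
        # Survivors carry over unchanged and also seed the mutated children.
        children = [
            propose(rng.choice(parents), rng)
            for _ in range(population_size - survivors)
        ]
        population = parents + children
    best = max(population, key=evaluate)
    return best, evaluate(best)


def gaussian_mutation(program: Program, rng: random.Random) -> Program:
    # Hypothetical stand-in for the LLM proposer: small random edits per parameter.
    return [x + rng.gauss(0.0, 0.1) for x in program]


def toy_score(program: Program) -> float:
    # Hypothetical evaluator: negative squared distance to a hidden target.
    target = [1.0, -2.0, 0.5]
    return -sum((x - t) ** 2 for x, t in zip(program, target))


best, score = evolve([0.0, 0.0, 0.0], toy_score, gaussian_mutation)
print(f"best={best} score={score:.4f}")
```

Because survivors are copied into each new population unchanged, the best score never gets worse from one round to the next. In the setup the paragraph describes, Gemini would propose edited program text instead of numeric noise.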

DeepMind says AlphaEvolve is no longer confined to demonstration problems. The agent now optimizes business processes inside the company, and internal infrastructure code paths sit in its rewrite queue. Scientific problems, the third bucket DeepMind cites, are being attacked with the same evolutionary pipeline that produced its earlier benchmark wins.

AlphaEvolve was previously framed as a research artifact. The new framing is a tool DeepMind already depends on, deployed against problems where a percentage point of efficiency translates into real spending. The company has not disclosed which workloads, or how much was saved on each.

DeepMind also did not name the engineering teams using the agent or the scientists running it on which problems. The post is structured as a coverage map rather than a results table, with the three domain buckets standing in for specifics.

The architectural direction is not unique to DeepMind. A separate paper this week, Skill1, proposes co-evolving an agent's skill selection, execution, and distillation under one reinforcement learning policy, on the premise that agents should accumulate reusable strategies rather than start cold on each task. AlphaEvolve operates on programs rather than skills, but both point toward agents that extend their own capabilities and keep their gains.

Without per-domain metrics or named workloads, there is no benchmark suite for "business operations optimized." The agent's wins inside Alphabet are not externally verifiable, and only the science track carries paper-grade rigor.

Whether AlphaEvolve becomes available to outside customers, through Google Cloud or the Gemini API, is the next disclosure to watch.

02 OpenAI's internal Codex security stack just became the compliance floor for coding agents

In one week, OpenAI published three documents that together function as a sales playbook for coding agents inside regulated companies: a security guide for running Codex internally, a customer case study, and a frontier-firms research report. The pieces were not labeled as a bundle, but read in sequence, the coordination is hard to miss.

The security post lays out what OpenAI itself bolts onto Codex before letting it touch internal code. Four controls are named: sandboxing, approval gates, network policies, and agent-native telemetry. The first three are familiar from human-developer environments. Agent-native telemetry is the new one.

Agent-native telemetry means logs that track what an agent did, not what a user clicked. That is the data shape audit teams need when an autonomous process commits code, opens a pull request, or pulls a dependency. By naming it, OpenAI is implicitly setting the minimum auditors will ask for. When Codex shows up in a customer's SOC 2 or ISO scope, agent telemetry will be on the checklist.
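To make "logs that track what an agent did" concrete, here is a minimal sketch of one audit record per agent action. The `AgentActionEvent` class, its field names, and action strings such as `pr.open` are illustrative assumptions, not OpenAI's telemetry schema. The point is the data shape: each record is keyed on the agent, the task, and the sandbox rather than on a user's clicks, and it serializes to one JSON object per line for ingestion into a log pipeline.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AgentActionEvent:
    """One audit record per action the agent performed (hypothetical schema)."""

    agent_id: str    # which agent instance acted
    task_id: str     # the task or session the action belongs to
    action: str      # e.g. "git.commit", "pr.open", "dependency.fetch"
    target: str      # repo, branch, URL, or package the action touched
    sandbox_id: str  # sandbox the action ran in
    approved_by: Optional[str] = None  # human approver, if an approval gate fired
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_jsonl(event: AgentActionEvent) -> str:
    # One JSON object per line (JSON Lines), a format many log pipelines ingest.
    return json.dumps(asdict(event), sort_keys=True)


# Example: the agent opened a pull request after a human approved it.
line = to_jsonl(
    AgentActionEvent(
        agent_id="codex-worker-7",  # hypothetical identifiers throughout
        task_id="task-123",
        action="pr.open",
        target="repo:payments/main",
        sandbox_id="sbx-42",
        approved_by="alice",
    )
)
print(line)
```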

The Simplex case study supplies the social-proof half of the package. OpenAI describes Simplex compressing design, build, and test cycles using ChatGPT Enterprise and Codex. The numbers in the post are self-reported by the customer. Specifics on incident rate, rollback frequency, or human review load are not disclosed.

The B2B Signals research adds the market frame. OpenAI says frontier enterprises are scaling agentic workflows faster than the median and presents Codex as the lever. The methodology, sample size, and definition of "frontier" are not detailed in the public post.

What enterprise security and procurement teams should take from this: OpenAI is now defining the floor for coding-agent compliance. That floor includes per-task sandboxes, network egress controls, human approval steps, and agent activity logs piped into existing SIEM pipelines. Buyers without those four controls in place cannot meaningfully evaluate Codex against alternatives. Buyers who have them face a follow-up question: does OpenAI's telemetry schema map onto an existing zero-trust audit chain, or is it a parallel, vendor-defined log format?
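Two of the four controls, network egress rules and human approval steps, reduce to policy checks evaluated before each agent action. The sketch below assumes a hypothetical per-task `TaskPolicy` holding a hostname allowlist and a set of action types that require sign-off. It illustrates the idea only; it is not how Codex or any particular sandbox enforces these controls.

```python
from dataclasses import dataclass
from enum import Enum
from typing import FrozenSet
from urllib.parse import urlparse


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


@dataclass(frozen=True)
class TaskPolicy:
    """Hypothetical per-task policy attached to one agent sandbox."""

    egress_allowlist: FrozenSet[str]   # hostnames the sandbox may reach
    approval_required: FrozenSet[str]  # action types that need a human sign-off


def check_network(url: str, policy: TaskPolicy) -> Decision:
    # Egress control: exact hostname match against the allowlist, deny otherwise.
    host = urlparse(url).hostname or ""
    return Decision.ALLOW if host in policy.egress_allowlist else Decision.DENY


def check_action(action: str, policy: TaskPolicy) -> Decision:
    # Approval gate: listed action types pause until a human approves them.
    if action in policy.approval_required:
        return Decision.NEEDS_APPROVAL
    return Decision.ALLOW


policy = TaskPolicy(
    egress_allowlist=frozenset({"pypi.org", "files.pythonhosted.org"}),
    approval_required=frozenset({"git.push", "pr.merge"}),
)

print(check_network("https://pypi.org/simple/requests/", policy))  # → Decision.ALLOW
print(check_network("https://example.com/upload", policy))         # → Decision.DENY
print(check_action("pr.merge", policy))                            # → Decision.NEEDS_APPROVAL
```

Hosts are matched exactly rather than by suffix, so `sub.pypi.org` is denied unless listed. That is a conservative default for egress rules, at the cost of longer allowlists.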

03 "AI slop is killing online communities" hit 797 points on Hacker News the same week OpenAI explained how ChatGPT learns from your conversations

A blog post titled "AI slop is killing online communities" collected 797 points and 692 comments on Hacker News this week. The post sits on a personal blog at rmoff.net, with no paywall and no push promotion. Developers ranked it among the site's top stories of the day.

The same week, OpenAI published "How ChatGPT learns about the world while protecting privacy." The post describes a system that ingests user conversations to improve its models, and frames the arrangement as a privacy story: data minimization, opt-outs, user controls. OpenAI says users can decide whether their chats are used to train future models.

The two posts sit on opposite ends of the same pipe. Online communities — forums, comment threads, technical Q&A boards — say they are drowning in AI-generated content of falling quality. AI companies, describing the same content stream from the input side, frame the relationship as one governed by user consent.

OpenAI's post does not address the community-side problem. Its frame stops at the user's chat window: which data the model takes in, under what controls, with what retention. Users own their conversations, set their own training opt-outs, and inherit the account's retention rules. What happens after a user pastes the model's output into a public thread is a data flow the post does not cover.

The asymmetry is structural. To the user, the chat was a private, low-stakes session. The AI vendor sees a training-data question. The forum moderator sees generated text dumped into a thread, and only the moderator pays the cleanup cost.

Hacker News voting patterns are not neutral data. The post got upvoted by an audience overlapping with the engineers who build, deploy, and pay for the tools that produce slop. The same crowd that ships these systems pushed an essay critical of them to the top of its own industry forum.

Cloudflare cuts 1,100 jobs and credits AI as the reason
Cloudflare announced its first large-scale layoff while reporting record revenue. CEO Matthew Prince said AI efficiency gains removed the need for many support roles. techcrunch.com

Court filings show Microsoft feared OpenAI would defect to Amazon
Documents from the Musk v. Altman trial reveal that Satya Nadella and other Microsoft executives worried OpenAI might leave Azure for AWS during early partnership talks. The exhibits include private messages about Amazon courting Sam Altman. theverge.com

Intel stock jumps 490% as Wall Street prices in an AI comeback
Intel shares have risen 490% over the past year on bets that its turnaround is real. The report argues the rally has run well ahead of the actual operational recovery. techcrunch.com

Anthropic's Mythos finds high-severity bugs in Firefox
Mozilla security researchers said Anthropic's Mythos surfaced a wave of high-severity vulnerabilities in Firefox. The browser team has restructured how it triages and prioritizes security reports as a result. techcrunch.com

Sony tells investors AI is now part of how PlayStation makes games
Sony's earnings presentation laid out specific points where it is evaluating generative AI in PlayStation game development. The framing matches a broader industry split between AAA studios adopting AI and indie developers refusing it. theverge.com

Oracle refuses to negotiate severance with laid-off workers
Oracle declined severance talks with laid-off employees. Several found they had been classified as remote workers and excluded from WARN Act protections such as 60-day advance notice. techcrunch.com

Basata builds AI agents to call medical specialists' fax machines
Basata raised funding to automate the fax-based scheduling that bottlenecks US specialist referrals. Founders say the back-office staff they work alongside are too overwhelmed to worry about being displaced. techcrunch.com

Nanoleaf shifts product roadmap to robots, red light therapy, and AI
Smart-lighting maker Nanoleaf explained its two-year quiet stretch as a pivot away from conventional lights. The company is now pursuing home robotics and wellness hardware with AI features. theverge.com

Google launches The Small Brief with three veteran ad creatives
Google's new program pairs three ad industry veterans with small businesses to produce AI-assisted campaigns. The initiative positions Google's generative ad tools directly against major creative agencies. blog.google

OpenAI names 26 students to its ChatGPT Futures Class of 2026
OpenAI selected 26 students for its ChatGPT Futures program covering research projects and product work. The cohort anchors OpenAI's push to embed ChatGPT in education and early-career talent pipelines. openai.com