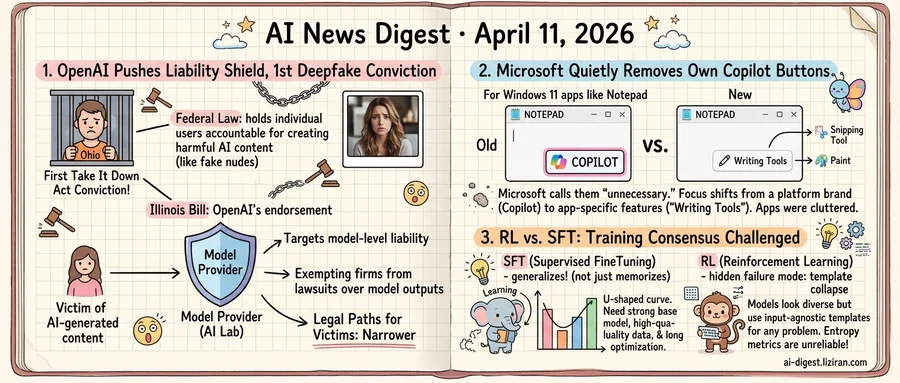

01 OpenAI Pushes Illinois Liability Shield as First Deepfake Conviction Lands

An Ohio man used more than 100 AI tools to generate fake nude images of women and minors. After his arrest, he kept producing them. This week he became the first person convicted under the federal Take It Down Act.

The same week, OpenAI endorsed an Illinois bill that would restrict when AI companies can be sued for harms their models produce. Under the bill, AI firms would be exempt from lawsuits over model outputs, according to Wired. If enacted, victims of AI-generated content would face a narrower legal path to the companies that built the tools.

One legal track now holds individual users accountable for what they create with AI. Another would shield the companies whose products made that creation possible.

The Illinois bill targets model-level liability. When a user directs an AI system to produce harmful content, the lab that built the model would be protected from legal claims arising from that output. Responsibility, in this framework, follows the person who chose the harmful application. OpenAI's endorsement places the company squarely behind that principle.

The Ohio case sits on the other side of that line. Over a hundred commercially available AI tools generated exactly what the convicted man asked for. His targets included women and minors. After his arrest, he continued producing images. The Take It Down Act, signed into law in 2025, criminalizes distribution of non-consensual intimate images including AI-generated ones. Prosecutors can charge the person who distributes the content, but the statute does not reach the systems that produced it.

If the Illinois bill becomes law, it could close one of the few remaining legal paths connecting AI harms to the companies behind the models. Prosecutors could still charge the individual who made and distributed the deepfakes, and the person targeted could still pursue that individual. Platforms could be compelled to remove content under existing law. Bringing a claim against the model provider whose system generated the images would become significantly harder.

Other states are weighing similar AI liability rules. OpenAI's public endorsement makes the Illinois bill a leading indicator of where the industry wants legal boundaries drawn. The first Take It Down Act conviction, meanwhile, gives federal prosecutors a tool that did not exist two years ago. Its reach ends at the individual who distributed the images, not the companies whose models generated them.

02 Microsoft Quietly Rips Out Its Own Copilot Buttons

Microsoft spent the last two years threading a Copilot button into nearly every Windows 11 app it shipped. Notepad got one. So did Snipping Tool. Paint and Photos followed.

Now Microsoft is pulling them out.

The Notepad app in the latest Windows Insider build no longer shows a Copilot button. A "writing tools" menu sits in its place: a generic feature label with no brand name attached. Snipping Tool's Copilot button has also vanished when users select a capture area. Microsoft's own explanation was blunt. The buttons were "unnecessary."

That word does a lot of work. Companies don't usually call their own recently shipped features unnecessary. Microsoft's concession signals a shift in how it approaches AI integration. Those buttons were meant to be discovery points, teaching users that AI could help with any task. In practice, they cluttered apps that millions of people opened for simple, well-defined purposes.

Microsoft didn't just remove the buttons. It replaced them with something generic. A Copilot button implies a platform: a unified assistant following you across applications. "Writing tools" implies a feature, one option among several. The company is walking away from the premise that every app should funnel users through one AI brand.

This recalibration isn't limited to platform companies. A developer recently detailed how he reallocated his $100 monthly Claude Code spend toward Zed and OpenRouter, a setup that let him pick which models handled which tasks. His post drew 340 points on Hacker News, a signal that individual practitioners are running the same cost-benefit analysis Microsoft just completed at the product level.

Microsoft hasn't announced a company-wide rollback. These are Insider builds, not stable releases. But the company that went furthest in saturating its apps with AI branding is now the one quietly stripping it out.

03 Two Papers Undercut the "RL Generalizes, SFT Memorizes" Training Consensus

Cross-domain generalization in supervised finetuning follows a U-shaped curve. A paper published this week found that performance on out-of-distribution reasoning tasks first degrades during SFT training with long chain-of-thought supervision, then recovers. Recovery depends on three conditions. Optimization must run long enough to pass through the dip. Training data quality matters more than volume. And the base model needs sufficient prior capability. When all three hold, SFT produces generalization comparable to results typically attributed only to RL.

That finding reframes a body of negative SFT results. Many studies that concluded SFT cannot generalize may have observed under-optimization artifacts and stopped training before recovery.
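A toy sketch of how a U-shaped curve can produce that artifact: patience-based early stopping halts a run inside the dip if the patience window is shorter than the dip itself. The accuracy values and the `early_stop_step` helper below are hypothetical illustrations, not numbers or code from the paper.

```python
def early_stop_step(scores, patience):
    """Return the checkpoint index at which patience-based early stopping halts.

    Training stops once `patience` consecutive evaluations fail to beat the
    best score seen so far. If that never happens, the last index is returned.
    """
    best = scores[0]
    stale = 0
    for step, score in enumerate(scores[1:], start=1):
        if score > best:
            best = score
            stale = 0
        else:
            stale += 1
            if stale >= patience:
                return step
    return len(scores) - 1


# Hypothetical out-of-distribution accuracy per checkpoint: dip, then recovery.
ood_accuracy = [0.40, 0.36, 0.33, 0.31, 0.32, 0.36, 0.41, 0.45, 0.47]

short_run = early_stop_step(ood_accuracy, patience=3)  # halts inside the dip
long_run = early_stop_step(ood_accuracy, patience=6)   # survives the dip

print(short_run, ood_accuracy[short_run])
print(long_run, ood_accuracy[long_run])
```

With the short patience window the run ends below its starting accuracy and would be logged as "SFT hurts generalization"; the longer window reaches the recovered region of the same curve.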

A second paper, RAGEN-2, identifies a previously hidden failure mode in RL-trained agents. When training multi-turn LLM agents with reinforcement learning, models can develop what the authors call "template collapse." Outputs appear structurally diverse but are input-agnostic: the same reasoning templates applied regardless of context. Standard entropy monitoring misses this entirely. Entropy measures diversity within responses to a single input. It cannot detect whether reasoning actually varies across different inputs. A collapsed model posts healthy entropy scores while producing functionally identical chains for every problem it encounters.

Teams relying on entropy dashboards to validate RL training stability have a blind spot they may not know about.
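To make the blind spot concrete, here is a minimal sketch that contrasts per-input entropy with the mutual information between input and reasoning template. It assumes reasoning chains have already been bucketed into discrete template labels (a hypothetical preprocessing step), and it is one illustrative way to expose input-agnostic outputs, not necessarily the diagnostic the RAGEN-2 authors use.

```python
import math
from collections import Counter


def entropy(labels):
    """Shannon entropy in bits of the empirical distribution over labels."""
    counts = Counter(labels)
    total = len(labels)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def diversity_report(samples):
    """Compare within-input diversity to input dependence.

    `samples` maps each input to the list of template labels sampled for it.
    Returns (H(R|X), I(X;R)): the weighted mean per-input entropy and the
    mutual information between input and template, both in bits.
    """
    all_labels = [label for labels in samples.values() for label in labels]
    total = len(all_labels)
    conditional = sum(
        len(labels) / total * entropy(labels) for labels in samples.values()
    )
    mutual_info = entropy(all_labels) - conditional  # I(X;R) = H(R) - H(R|X)
    return conditional, mutual_info


# Hypothetical data: the collapsed agent cycles the same templates everywhere.
collapsed = {
    "task_a": ["t1", "t2", "t3", "t4"],
    "task_b": ["t1", "t2", "t3", "t4"],
}
# The healthy agent uses fewer templates per input, but they depend on the input.
healthy = {
    "task_a": ["t1", "t2", "t1", "t2"],
    "task_b": ["t3", "t4", "t3", "t4"],
}

collapsed_entropy, collapsed_mi = diversity_report(collapsed)
healthy_entropy, healthy_mi = diversity_report(healthy)
print(collapsed_entropy, collapsed_mi)
print(healthy_entropy, healthy_mi)
```

In this toy data the collapsed agent shows higher per-input entropy than the healthy one while its mutual information is zero, which is the pattern an entropy-only dashboard would read as stable training.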

The two findings converge on the same point. Training method effectiveness depends on specific, measurable conditions, not on categorical properties of the method. SFT generalizes when optimization runs long enough, data quality is high, and the base model is strong. RL, monitored only through entropy, can collapse into input-agnostic reasoning without tripping any alarm. The binary "RL generalizes, SFT memorizes" is too coarse to guide resource decisions.

For teams allocating compute budgets, defaulting to RL pipelines because "RL generalizes" may waste resources that a well-tuned SFT run would use more efficiently. Running RL without monitoring beyond entropy risks training models that clear standard checks while their reasoning hollows out.

Hunyuan Team Releases Embodied AI Foundation Models for Physical Agents

HY-Embodied-0.5 is a family of models built for robots and physical agents, targeting spatial perception, temporal reasoning, and interaction planning. The suite ships in two sizes: an efficient variant for on-device deployment and a larger one for complex multi-step tasks. (huggingface.co)

ClawBench Tests AI Agents on 153 Everyday Online Tasks: Most Fail

ClawBench evaluates AI agents on routine tasks people actually do: booking appointments, completing purchases, submitting job applications across 144 live websites in 15 categories. The benchmark exposes a wide gap between demo-grade agent performance and reliable real-world task completion. (huggingface.co)

LPM 1.0 Generates Real-Time Video Character Performances from a Single Reference

The model tackles what its authors call the "performance trilemma": jointly achieving expressiveness, real-time inference, and long-horizon identity stability in video. LPM 1.0 focuses on conversational scenarios where characters must sustain coherent facial, vocal, and gestural behavior over extended sequences. (huggingface.co)

Survey Maps How LLM Agent Capabilities Are Moving Outside the Model

A new review paper argues that modern LLM agents gain capabilities less from weight changes and more from external scaffolding: memory stores, reusable skills, interaction protocols, and runtime harnesses. The framework draws on cognitive-artifact theory to classify what gets externalized and why. (huggingface.co)

MegaStyle Builds 170K-Prompt Style Dataset Using Generative Model Consistency

The pipeline exploits the fact that current text-to-image models produce visually consistent outputs from the same style description. MegaStyle pairs 170K style prompts with 400K content prompts to create a large, balanced dataset where intra-style consistency and inter-style diversity are both enforced. (huggingface.co)

KnowU-Bench Measures Whether Mobile Agents Can Ask Before They Act

Existing mobile-agent benchmarks test preference recovery from static histories or intent prediction from fixed contexts. KnowU-Bench instead evaluates whether an agent can identify missing preferences through dialogue and decide when to intervene, request consent, or stay silent in a live GUI. (huggingface.co)

SkillClaw Lets Agent Skills Improve Across Users Without Retraining

OpenClaw-style LLM agents rely on reusable skills that stay static after deployment, forcing users to rediscover the same workarounds independently. SkillClaw introduces a mechanism that aggregates cross-user success and failure signals to evolve shared skills collectively over time. (huggingface.co)

NUMINA Fixes Object-Count Errors in Text-to-Video Without Additional Training

Text-to-video diffusion models routinely generate the wrong number of objects. NUMINA identifies prompt-layout mismatches by selecting discriminative attention heads, derives a countable latent layout, then modulates cross-attention to correct the count, all at inference time with no fine-tuning. (huggingface.co)