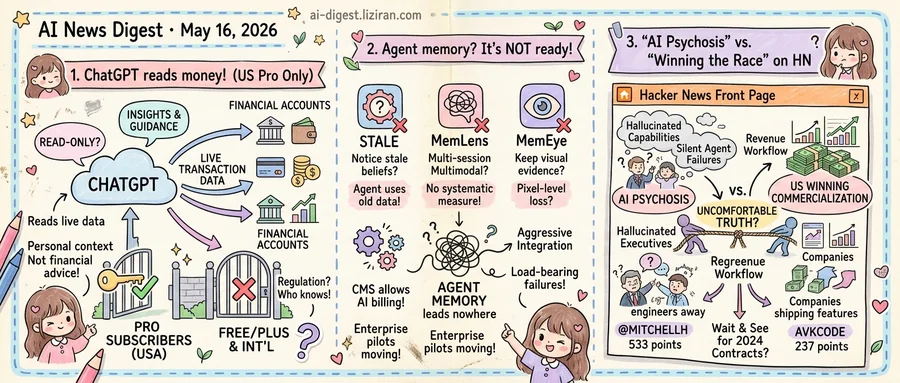

01 ChatGPT can now read your financial accounts. Only US Pro subscribers can turn it on.

OpenAI launched a preview that lets ChatGPT connect directly to a user's personal financial accounts, the company said in a blog post announcing the feature. The model reads live transaction data and returns what OpenAI calls "insights and guidance grounded in your financial context, goals, and priorities." Access is restricted to Pro tier subscribers in the United States.

Until now, ChatGPT could discuss money only in the abstract. It explained index funds, parsed a screenshot pasted into the chat, sketched a debt-payoff plan from numbers typed by hand. The model could not see the account itself, check today's balance, or flag a recurring charge the user had forgotten about. The preview shifts the input side of the conversation: the model reads the ledger directly, and any advice it returns sits on actual numbers rather than what the user thought to share.

OpenAI did not specify which institutions are supported at launch, nor whether the integration is read-only. The company frames the product as "insights and guidance" rather than financial advice. That language keeps it outside the regulated category of an investment adviser. State money-transmitter regimes and fiduciary rules may still attach when a large language model reads live bank data. That is the open compliance question for every bank OpenAI is asking to plug in.

The double gate, a higher-priced tier and a single country, matches a prediction circulating in AI policy writing this month. Anton Leicht argued in an essay that access to frontier AI will soon be cut off by a combination of economic cost and national security policy. The piece scored 207 points on Hacker News. The finance preview is one shape that prediction takes in product form: a higher-priced tier, a single jurisdiction, an opt-in to share regulated personal data.

Free and Plus subscribers can still describe their finances to ChatGPT in text. They cannot ask the model to query the source. Pro subscribers outside the United States face the same restriction. OpenAI has not given a timeline for opening the preview to other tiers or geographies, and has not named the next jurisdiction in line.

02 Three benchmarks landed on Hugging Face the same day. All three say agent memory isn't ready

Three independent papers hit Hugging Face on May 16, each attacking agent memory from a different angle. None of them grade on a curve, and none of the results flatter the systems heading into production.

STALE tests whether an LLM agent notices its own stored beliefs have gone stale. The authors isolate a failure mode they call Implicit Conflict: a later observation quietly invalidates an earlier memory without explicit negation, forcing the agent to infer the contradiction. Current benchmarks, the paper argues, only measure static fact retrieval and miss this entirely. Agents that pass standard memory tests still carry obsolete facts forward as if they were true.
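The failure mode is easier to see in a toy example. The sketch below is not the STALE benchmark's data or method; the memory entries, the cue list, and both function names are hypothetical, chosen only to show how a later observation can invalidate an earlier one without any explicit retraction for a keyword check to catch.

```python
from dataclasses import dataclass


@dataclass
class Memory:
    session: int
    text: str


# Hypothetical memory log for one user, oldest first.
memories = [
    Memory(1, "User commutes to the office by car every day."),
    Memory(4, "User sold their car last week."),
]

# Hypothetical cue phrases that would mark an explicit retraction.
NEGATION_CUES = ("no longer", "not anymore", "stopped", "correction")


def explicit_conflicts(log):
    """Return memories that contain an explicit retraction cue."""
    return [m for m in log if any(cue in m.text.lower() for cue in NEGATION_CUES)]


def naive_answer(log, keyword):
    """Static retrieval: return the first stored memory mentioning the keyword."""
    for m in log:
        if keyword in m.text.lower():
            return m.text
    return None


# No entry says "no longer commutes", so the cue check finds nothing...
print(explicit_conflicts(memories))
# ...and static retrieval still returns the car commute as current fact.
print(naive_answer(memories, "commute"))
```

Nothing in the second entry negates the first in so many words. An agent would have to infer that selling the car makes the daily car commute doubtful, which is the kind of inference the paper says static retrieval tests do not measure.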

MemLens runs the same exercise on vision-language models across 789 questions spanning five memory categories in multi-session multimodal conversations. The authors say no prior benchmark systematically compares long-context LVLMs against memory-augmented agents on questions that genuinely require multimodal evidence. That gap is itself the finding: the two dominant approaches to giving vision models memory have never been measured head to head on tasks that actually need it.

MemEye narrows further to a question MemLens leaves open: do agents preserve the visual evidence itself, or just text summaries of what they saw? The authors report that many visually grounded benchmark questions can be answered from captions or textual traces alone, letting models score well without retaining fine-grained pixels. Cases that require reasoning over changing visual states are largely absent from existing tests. MemEye builds them.

The clustering matters because of where agents are being pushed this quarter. CMS opened reimbursement codes that let AI agents bill against Medicare, and enterprise pilots are moving from chat assistants to multi-session workflows that span days. Both deployments assume an agent's memory of yesterday's instruction, image, or patient note is still load-bearing today. STALE says agents don't know when it isn't. MemLens says nobody has measured whether multimodal memory holds up across sessions. MemEye says the visual half of that memory may have been textualized away.

For engineers picking a stack, the three benchmarks now split cleanly: STALE for belief revision, MemLens for long-horizon multimodal recall, MemEye for visual fidelity. For teams already deploying, the failure modes are no longer hypothetical: they have arXiv numbers.

03 "Entire companies under AI psychosis" hit HN's front page next to an essay arguing that's exactly why America is winning

@mitchellh posted on Twitter that he believes "entire companies right now" are operating under what he called AI psychosis: leadership making consequential bets on hallucinated capabilities, ignoring engineers who flag where the models actually break. The post landed on Hacker News at 533 points and 234 comments, with commenters naming specific patterns: executive mandates to ship features built on agents that fail silently, procurement decisions made off vendor demos that don't survive contact with production data, headcount cuts justified by productivity claims no one has measured.

The same front page carried a blog post by avkcode titled "The US is winning the AI race where it matters most: commercialization." It pulled 237 points and 671 comments, a ratio that signals readers found plenty to argue with. The argument: while other regions debate regulation and safety, US companies are aggressively integrating generative AI into revenue-producing workflows, and that integration velocity, not benchmark scores, is what determines who captures the trillion-dollar productivity surface.

The two pieces sit on opposite sides of the same fact pattern. The psychosis camp's evidence is qualitative and internal: engineers describing leadership chasing capabilities the model doesn't have, sales teams selling agent workflows that break on the second turn, boards approving AI line items with no measurement framework. The commercialization camp's evidence is structural: enterprise contract growth at OpenAI and Anthropic, Microsoft and Google bundling AI into existing seats, US public cloud absorbing the bulk of frontier inference spend.

Neither side disputes the other's data. The commercialization essay does not claim every deployment works; it claims the aggregate is winning. The psychosis thread does not claim no one is making money; it claims many of the buyers don't know what they bought.

If both readings are accurate, the implication is uncomfortable: the United States may be leading AI commercialization precisely because its enterprises are willing to deploy systems they don't fully understand and write off the failures as cost of learning. Whether that converges on durable advantage or on a wave of post-mortems depends on which deployments produce measured returns over the next four to six quarters, when the first cohort of 2024 enterprise AI contracts comes up for renewal.

OpenAI puts Greg Brockman in charge of all product

OpenAI reorganized Friday, consolidating teams and making president Greg Brockman the official head of all product work. A memo viewed by The Verge tied the move to a 2026 strategy built around AI agents. (theverge.com)

PwC expands Anthropic partnership to deploy Claude across client work

PwC will use Claude to build software, execute deals, and rebuild enterprise functions for client engagements under an expanded partnership announced today. The deal pushes Anthropic deeper into one of the largest professional services buyers. (anthropic.com)

Google marks attempts to manipulate AI search results as spam

Google updated its spam policy to classify attempts to manipulate AI Overview and AI Mode in Search as spam. Search Engine Land reported the rules cover techniques that deceive AI systems into featuring specific content. (theverge.com)

ArXiv will reject preprints showing unchecked LLM output

ArXiv said it will block papers and ban authors when there is "incontrovertible evidence" they did not check LLM output, such as hallucinated citations or visible LLM meta-comments. The platform is the default repository for physics and CS preprints. (theverge.com)

YouTube opens deepfake likeness detection to all adult users

YouTube extended its AI likeness detection program to anyone over 18, letting users submit a selfie scan and receive alerts when the platform spots potential lookalike videos. The tool was previously restricted to a smaller creator pilot. (theverge.com)

Runway recasts video generation as a path to world models

AI video startup Runway said it now sees video generation as the route to world models, pitching outsider status as an advantage over Google and OpenAI. The company began with filmmaker tools before raising successive rounds against larger labs. (techcrunch.com)

OpenAI ships new ChatGPT safety logic for sensitive conversations

OpenAI published updates to how ChatGPT tracks context across a conversation to detect escalating risk over time. The post follows the wrongful death lawsuit filed by parents of a teen who died after extended exchanges with ChatGPT. (openai.com)

Chinese short-drama studios mass-produce episodes with generative AI

MIT Technology Review documented how Chinese vertical short-drama studios now use generative video and voice tools to mass-produce romance and fantasy series. The piece details production pipelines built around AI output and Chinese platform demand for daily new episodes. (technologyreview.com)

Lake Tahoe utility hunts replacement supplier as AI demand lifts rates

Liberty Utilities, the Lake Tahoe-area power provider, is searching for a new wholesale supplier as AI data center demand drives regional electricity prices higher. TechCrunch reports residents face above-average rate hikes during the transition. (techcrunch.com)

Osaurus ships Mac app that runs both local and cloud models

Osaurus released a macOS app that lets users mix local and cloud AI models while keeping memory, files, and tool state on the user's own hardware. The launch adds another consumer option for hybrid AI on Apple silicon. (techcrunch.com)