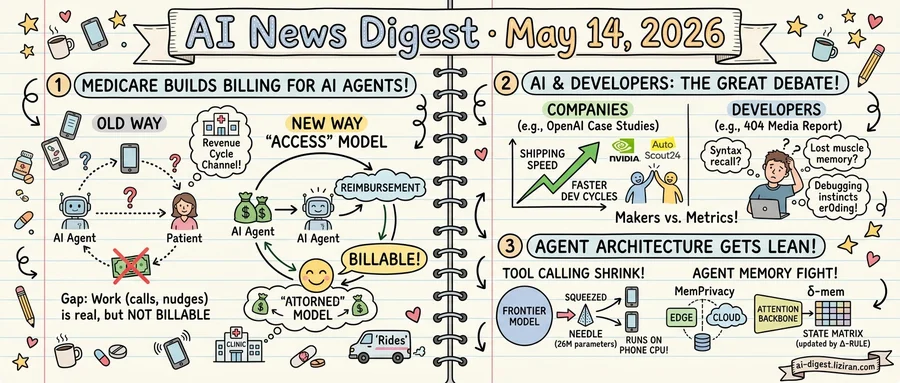

01 Medicare just built the first billing channel for AI agents that work between doctor visits

Until last week, no part of the U.S. government could pay a software agent for the work between doctor visits. That work is real: calling a patient, nudging them to pick up a prescription, coordinating a housing referral. None of it generates a billable encounter inside chronic care. The work existed; the reimbursement did not.

That gap is the one Medicare's new payment model, ACCESS, was built to close. According to TechCrunch, ACCESS is the first federal mechanism that pays for between-visit care delivered by AI agents: monitoring, check-ins, medication follow-up, and social-needs referrals. The model targets the work that sits between appointments, where chronic patients drift and where most health-tech demos have died for lack of a buyer.

The commercial deadlock it removes is older than the current AI cycle. Healthcare AI vendors have spent years pitching agentic products to providers who agreed they were useful but could not bill for them. Without a billable code, even a working agent had no place in a clinic's revenue cycle. ACCESS gives that channel a name.

Medicare is the largest single payer in the United States. ACCESS opens its book of business to software performing care coordination rather than diagnosis or imaging. The agents covered are unglamorous: they make calls, watch vitals, and arrange rides. They look nothing like the foundation-model launches that dominate AI news cycles.

That news cycle is busy elsewhere. The same week ACCESS came online, Anthropic announced Claude for Small Business, pitching its model to a fragmented SMB market. Medicare's annual outlays are many times larger than the SMB software opportunity, and ACCESS routes a slice of those outlays to agentic software for the first time. TechCrunch reports that most of the tech world has not registered that the door opened.

The companies positioned to collect are mostly not the ones in this week's AI headlines. They are clinical-workflow vendors with hospital relationships and CMS familiarity, names rarely tracked by AI investors. The faster a frontier-model lab licenses through one of them, the faster its agents become billable inside U.S. healthcare. That window is open now, before the rest of the industry notices it exists.

02 Developers say AI is rotting their brains, the same week OpenAI showcased Codex case studies

OpenAI this week published two customer stories celebrating how engineering teams ship faster with Codex. NVIDIA researchers, per the writeup, use Codex with GPT-5.5 to turn research ideas into runnable experiments and ship production systems. AutoScout24 Group credits the tool with speeding development cycles and broadening AI adoption across its engineering org.

The same week, 404 Media published interviews with developers using these same tools. Their verdict: "It's making me dumber for sure."

The 404 Media report compiled posts from Hacker News and developer forums where engineers described specific capabilities atrophying. Some said they could no longer recall syntax they had written daily for years. Others described losing the muscle memory for writing code from scratch, reaching for the AI before forming their own mental model. Debugging instincts, several said, were eroding because they no longer sat with broken code long enough to understand why it broke.

The vendor case studies measure a different axis. OpenAI's NVIDIA writeup tracks shipped systems and experiments converted from research. The AutoScout24 piece reports development cycle speed and code quality improvements. Neither piece reports on whether engineers using Codex retain the ability to work without it.

That gap matters for the people deciding whether to roll these tools out. The case studies tell an engineering manager that adoption produces more shipped features per quarter. They do not address whether, after twelve months, the same engineers can still pass the take-home interview that got them hired.

Developers in the 404 Media report were not uniformly negative. Several said AI tools had made them more productive on tasks they already understood. The complaints clustered around long-term skill retention, not short-term throughput. The vendor metrics and the developer self-reports are measuring different variables; only one of them tracks what happens inside the engineer.

03 Tool calling shrinks to 26M parameters as two papers attack agent memory

Cactus Compute released Needle this week, a 26-million-parameter model distilled from Gemini specifically for tool calling. The GitHub repository drew 630 points on Hacker News with 181 comments. At that size, the model runs on a phone CPU.

Tool calling is the part of an agent that turns user intent into structured API calls. Developers typically route this work to frontier models because smaller ones hallucinate function signatures. Needle's claim is that the routing decision does not need a general-purpose model, just one trained narrowly on schema matching. The team distilled Gemini's tool-calling behavior into the smaller network rather than training from scratch.
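To make "hallucinated function signatures" concrete, here is a minimal sketch of the check an agent runtime might run on a model's proposed call before executing it. Everything in it is an assumption for illustration: the `TOOLS` registry, the tool names `get_weather` and `set_timer`, and the `{"name": ..., "arguments": ...}` output shape are hypothetical and are not taken from Needle or Gemini.

```python
import json
from typing import Dict, List

# Hypothetical tool registry: tool name -> {parameter name: expected Python type}.
TOOLS: Dict[str, Dict[str, type]] = {
    "get_weather": {"city": str},
    "set_timer": {"minutes": int, "label": str},
}


def validate_call(raw: str) -> List[str]:
    """Return the problems found in a model-emitted tool call (empty list if valid)."""
    try:
        call = json.loads(raw)
    except json.JSONDecodeError:
        return ["output is not valid JSON"]
    if not isinstance(call, dict):
        return ["output is not a JSON object"]

    name = call.get("name")
    if not isinstance(name, str) or name not in TOOLS:
        return [f"unknown tool: {name!r}"]

    args = call.get("arguments", {})
    if not isinstance(args, dict):
        return ["arguments is not a JSON object"]

    schema = TOOLS[name]
    problems: List[str] = []
    # Every declared parameter must be present with the declared type.
    for param, expected in schema.items():
        if param not in args:
            problems.append(f"missing argument: {param}")
        elif not isinstance(args[param], expected):
            problems.append(f"wrong type for {param}")
    # Parameters the schema never declared are treated as hallucinated.
    for param in args:
        if param not in schema:
            problems.append(f"unexpected argument: {param}")
    return problems


good = '{"name": "get_weather", "arguments": {"city": "Oslo"}}'
bad = '{"name": "set_timer", "arguments": {"minutes": "5", "label": "tea", "repeat": true}}'
print(validate_call(good))  # []
print(validate_call(bad))   # ['wrong type for minutes', 'unexpected argument: repeat']
```

A small model trained narrowly on schema matching only has to produce output that passes a check like this one; it does not need the general knowledge of a frontier model to do so.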

Two papers posted to Hugging Face apply the same compression logic to the other expensive piece of an agent: memory. MemPrivacy proposes splitting personalized memory between edge and cloud, keeping task-sensitive semantics on-device while letting the cloud handle generic retrieval. The authors frame the design as a privacy fix. The architecture also cuts the per-query cloud cost of long-running personal agents.
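The general shape of an edge/cloud memory split can be sketched in a few lines. This is not MemPrivacy's design: the `MemoryItem` and `SplitMemory` names, the caller-supplied `sensitive` flag, and the substring-match retrieval are all hypothetical stand-ins, and a real system would also have to decide what the query itself reveals to the cloud.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class MemoryItem:
    text: str
    sensitive: bool  # assumption: the caller (or an on-device classifier) sets this


@dataclass
class SplitMemory:
    edge: List[str] = field(default_factory=list)   # stays on the device
    cloud: List[str] = field(default_factory=list)  # generic, safe to store remotely

    def write(self, item: MemoryItem) -> None:
        # Route each memory by sensitivity; sensitive text never enters the cloud list.
        target = self.edge if item.sensitive else self.cloud
        target.append(item.text)

    def retrieve(self, query: str) -> List[str]:
        # Toy retrieval: case-insensitive substring match, on-device hits first.
        q = query.lower()
        local_hits = [t for t in self.edge if q in t.lower()]
        cloud_hits = [t for t in self.cloud if q in t.lower()]
        return local_hits + cloud_hits


mem = SplitMemory()
mem.write(MemoryItem("Takes insulin at 8am", sensitive=True))
mem.write(MemoryItem("Prefers metric units", sensitive=False))
mem.write(MemoryItem("Insulin is a hormone", sensitive=False))
print(mem.cloud)                # ['Prefers metric units', 'Insulin is a hormone']
print(mem.retrieve("insulin"))  # ['Takes insulin at 8am', 'Insulin is a hormone']
```

The cost argument follows from the same split: whatever is answered from the `edge` list never becomes a cloud call.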

δ-mem takes a different cut at the same bill. Rather than expanding the context window every turn, it maintains a fixed-size state matrix updated by a delta rule. The matrix attaches to a frozen attention backbone. The paper reports its readout matches longer-context baselines at a fraction of the per-token spend.
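For readers who want the mechanics, here is a minimal sketch of a generic delta-rule memory in plain Python. It is not the paper's implementation: the function names (`new_state`, `read`, `delta_update`), the `beta` step size, and the toy keys are assumptions for illustration, and the frozen attention backbone is left out entirely. The point is only that the state stays the same size no matter how many turns are written into it.

```python
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def new_state(d_v: int, d_k: int) -> Matrix:
    """Fixed-size memory: one row per value dimension, one column per key dimension."""
    return [[0.0] * d_k for _ in range(d_v)]


def read(state: Matrix, key: Vector) -> Vector:
    """Readout S @ k: what the memory currently associates with this key."""
    return [sum(s * k for s, k in zip(row, key)) for row in state]


def delta_update(state: Matrix, key: Vector, value: Vector, beta: float = 1.0) -> None:
    """Generic delta rule, in place: S <- S + beta * (v - S k) k^T.

    Only the prediction error is written, so storing a new value under an
    existing key overwrites the old association instead of stacking on top of it.
    """
    predicted = read(state, key)  # computed before any row is modified
    for i, row in enumerate(state):
        err = value[i] - predicted[i]
        for j in range(len(row)):
            row[j] += beta * err * key[j]


state = new_state(d_v=2, d_k=2)
k1, k2 = [1.0, 0.0], [0.0, 1.0]         # toy orthonormal keys
delta_update(state, k1, [2.0, 3.0])
delta_update(state, k2, [5.0, -1.0])
delta_update(state, k1, [7.0, 7.0])      # overwrite what k1 pointed to
print(read(state, k1))                   # [7.0, 7.0]
print(read(state, k2))                   # [5.0, -1.0]
print(len(state), len(state[0]))         # 2 2
```

With unit-norm keys and `beta=1.0`, each write makes the readout for that key exactly equal to the new value. A growing context window would instead have to keep all three turns around.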

The three projects do not share a research lineage. They converge on the same architectural assumption: that the parts of an agent currently sitting inside a frontier-model API call can be pulled apart and pushed down the stack. Needle attacks function routing, MemPrivacy attacks data residency, δ-mem attacks the context window.

For developers building agents without committing to a single cloud vendor, the option set widened by three. Whether any individual project ships in production matters less than the architectural drift: the assumption that an agent must be a thin client wrapping a frontier API is no longer the only viable path.

Anthropic passes OpenAI in paid business customers. Ramp's expense data from its client base shows 34.4% of surveyed businesses now pay for Anthropic services, against 32.3% for OpenAI. Ramp's sample skews to startups and mid-market firms, so the gap reflects buying patterns outside the Fortune 500. (techcrunch.com)

xAI's Mississippi data center sued over 50 unpermitted gas turbines. A lawsuit targets xAI's Colossus 2 site for running roughly 50 "mobile" gas turbines as a primary power plant without the air permits required for stationary generation. The case tests whether AI operators can use mobile-classification loopholes to bring data centers online ahead of grid hookups. (techcrunch.com)

Anthropic launches a small-business tier aimed at 36 million U.S. firms. Anthropic released an offering pitched at owners of small companies rather than enterprise buyers or developers. It's the first time a major lab has built distribution specifically for the SMB segment, where OpenAI and Google have so far relied on generic consumer or enterprise plans. (techcrunch.com)

Notion opens its workspace to third-party AI agents. A new developer platform lets teams wire external agents, data sources, and custom code directly into Notion pages and databases. The product positions Notion as a host surface for agents rather than a destination app, competing with Slack and Microsoft 365 for that role. (techcrunch.com)

Amazon puts Alexa+ in the search bar. Alexa for Shopping replaces the keyword search box on Amazon mobile, desktop, and Echo Show with a voice and chat agent that can compare items and place orders across Amazon and third-party retailers. The change exposes Alexa+ to every Amazon shopper without a separate download. (techcrunch.com)

Microsoft Edge Copilot reads across every open tab. A new Edge update lets Copilot pull and compare content from all tabs in a session, including product pages and articles, when answering a query. Users grant access at conversation start; Microsoft has not detailed what tab content is sent to its servers or retained. (theverge.com)

Meta ships Incognito Chat for AI without server logs. Zuckerberg announced a Meta AI mode that does not save conversations to servers or user history. Meta frames it as the first major AI product with no stored logs, though the company has not published a technical attestation or third-party audit of the claim. (theverge.com)

Google AI hands out real personal phone numbers in chat answers. Users report that Google's AI surfaces their direct phone numbers and contact info when other users ask unrelated questions, with no documented removal process. One affected person says he has spent a month fielding misdirected calls intended for lawyers and designers. (technologyreview.com)

Origin Lab raises $8M to broker game data for world models. The startup runs a marketplace where video game studios license gameplay data to AI labs building world models. It targets a supply gap as video pretraining hits a ceiling on public web footage and labs look for licensed physics-grounded data. (techcrunch.com)

DeepMind proposes redesigning the mouse pointer for AI use. A DeepMind post argues the cursor should carry intent and context so agents can act with users rather than after them, sketching an interaction model where pointer state becomes a shared signal between human and assistant. The piece is design research, not a shipping product. (deepmind.google)

World Action Models paper proposes pairing VLAs with environment predictors. Researchers outline World Action Models, embodied foundation models that combine vision-language-action policies with explicit predictors of how the environment changes under each action. The framing targets a known weakness in current robotics policies, which map observations to actions reactively without forward simulation. (huggingface.co)