01 A Christian Phone Network Will Block Porn Next Week. The Same Audience Is Buying AI Bible Videos on Fiverr for $5.

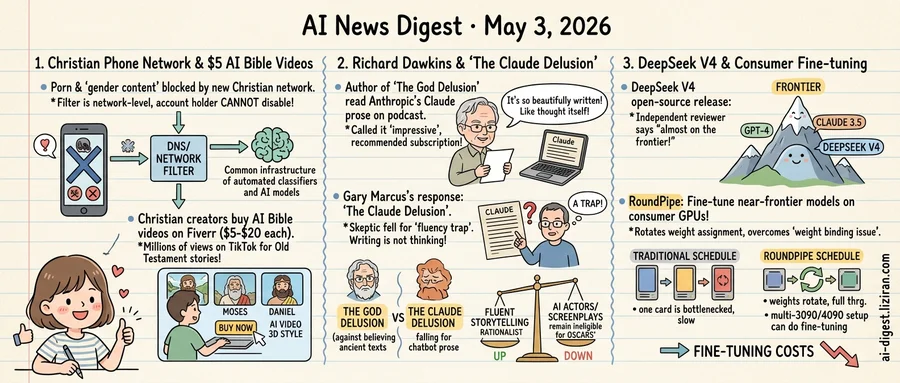

A new US cell phone network marketed to Christians launches next week with porn blocked at the network level. According to MIT Technology Review, it is the first American carrier to apply such a block in a way the account holder cannot turn off. The same filter also catches "gender-related content," a category the operator has not fully defined.

Network-security experts told the publication that this sets a precedent for consumer telecom in the United States. Existing parental-control products on T-Mobile, Verizon, and AT&T require an account owner to enable filtering, and the same owner can disable it. The Christian carrier removes that switch. Filtering happens at the DNS and network layers before traffic reaches the phone.
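To make the difference concrete, here is a minimal sketch of a DNS-layer filter with and without an owner override. Everything in it is an assumption for illustration: the category table, the `.test` domains, the sinkhole address, and the `owner_can_disable` flag are hypothetical, and the carrier has not published how its filter is built.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical category labels; the carrier has not published its list.
BLOCKED_CATEGORIES = {"adult"}

# Hypothetical domain-to-category table standing in for a classifier feed.
DOMAIN_CATEGORIES = {
    "adult-example.test": "adult",
    "news-example.test": "news",
}

# Address returned instead of the real one when a lookup is blocked.
SINKHOLE_IP = "0.0.0.0"


@dataclass(frozen=True)
class Account:
    owner: str
    wants_filtering: bool  # the account owner's stated preference


def categorize(domain: str) -> Optional[str]:
    """Look up a domain, falling back to its parent domains (a.b.c, then b.c, then c)."""
    labels = domain.lower().rstrip(".").split(".")
    for i in range(len(labels)):
        candidate = ".".join(labels[i:])
        if candidate in DOMAIN_CATEGORIES:
            return DOMAIN_CATEGORIES[candidate]
    return None


def resolve(domain: str, upstream_ip: str, account: Account,
            owner_can_disable: bool) -> str:
    """Return the answer the phone would see for a DNS query."""
    blocked = categorize(domain) in BLOCKED_CATEGORIES
    # Opt-in parental controls honor the owner's preference;
    # a mandatory filter ignores it.
    if owner_can_disable and not account.wants_filtering:
        blocked = False
    return SINKHOLE_IP if blocked else upstream_ip
```

With `owner_can_disable=True`, an account that has switched filtering off gets the real address back. With `owner_can_disable=False`, the same query is sinkholed regardless of what the account holder prefers, because the decision is made before the answer ever reaches the phone.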

While one corner of the same demographic is paying to wall content off, another is paying to flood it in. The Verge reported this week that Christian content creators are commissioning AI-generated Bible videos from Fiverr freelancers for $5 to $20 apiece. The freelancers' profiles advertise turnaround in hours, with output measured in clips per day.

The clips run on TikTok under accounts framed as Christian ministry. One format animates Old Testament scenes featuring figures such as Noah, Moses, and Daniel in a glossy 3D style that draws millions of views. Buyers told The Verge they treat the videos as evangelism, not entertainment. Some accounts post several pieces of AI content per day, faster than a human animator could produce a single scene.

The infrastructure on both ends comes from the same generation of tools. Network filtering depends on automated category classification of domains. The Fiverr Bible factories depend on text-to-video and text-to-image models that did not exist in consumer form two years ago. Both pipelines are sold to a religiously conservative audience with the same promise: remove human judgment from the loop, whether to keep impurity out or to keep output up.

Neither side has published the rules its software follows. The Christian carrier has not released the domain list its "gender-related content" filter blocks. Fiverr sellers are not required to disclose which models they use. The carrier launches next week; the Bible accounts are already live.

02 The God Delusion author read Claude's writing on his podcast, then told listeners to subscribe

Richard Dawkins, 84, spent four decades teaching readers that fluent storytelling is not evidence of truth. On a recent episode of his podcast, the evolutionary biologist read aloud several passages Anthropic's Claude had written for him. He called the prose impressive and recommended his audience pay for a subscription.

Gary Marcus, the cognitive scientist who has built a second career criticizing large language model hype, published a response on Substack titled "Richard Dawkins and The Claude Delusion." The title is a deliberate echo of Dawkins's 2006 bestseller "The God Delusion," which argued that emotional resonance and beautiful language are not proof of an underlying intelligence. Marcus's point: the author who codified that warning had just fallen for its software equivalent.

Marcus's argument is narrow. He does not claim Claude is bad at writing. The writing itself, he says, is the trap. A skeptic, of all people, should recognize that producing paragraphs that sound like thought is not the same as thinking. That was the entire premise of the New Atheist case against scripture. Dawkins read passages, found them moving, and treated that response as data about the system that produced them.

Anthropic did not solicit the endorsement, and Dawkins is not a paid spokesman. The episode is a clean test case: a hostile witness, no commercial incentive, decades of training in skeptical method, persuaded by a few hundred words of model output.

The same week Dawkins was reading Claude aloud, the Academy of Motion Picture Arts and Sciences moved in the opposite direction. Its newly announced eligibility rules make AI-generated actors and AI-written screenplays ineligible for Oscar consideration, a categorical exclusion with no quality threshold attached. One of the world's most prominent rationalists was being persuaded by a chatbot's prose. At the same moment, one of entertainment's most prominent institutions decided no chatbot output would count, regardless of how good it was.

03 DeepSeek V4 lands almost at the frontier, and a paper makes the same tier fine-tunable on a 3090

Two open-source releases dropped the same week, and together they compress the cost of running and tuning a near-frontier model at both ends.

DeepSeek released V4. Independent reviewer Simon Willison evaluated it as "almost on the frontier." The remaining gap to GPT-class and Claude-class systems no longer determines outcomes on many production workloads. The model weights are open. A team running it on owned hardware pays compute, not a per-token API margin.

That removes half of the closed-stack lock-in. The other half is fine-tuning, which has historically required rented H100-class hardware. Consumer GPUs hit two walls: limited VRAM, and slow PCIe interconnect between cards. Pipeline parallelism with CPU offload helps with both. But existing schedules run into what the RoundPipe paper calls the "weight binding issue." Uneven model stages get pinned to specific GPUs, and throughput is then capped by the most overloaded one. The LM head, being disproportionately large, is the typical bottleneck.
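The bottleneck effect is easy to see with back-of-envelope arithmetic. The sketch below is a toy model, not anything from the RoundPipe paper: the stage times are made-up numbers, with the last stage standing in for a heavy LM head, and communication and offload costs are ignored.

```python
def steady_state_throughput(stage_times):
    """Micro-batches per second once the pipeline is full.

    With each stage pinned to one GPU, a new micro-batch can only
    finish as fast as the slowest stage processes it.
    """
    return 1.0 / max(stage_times)


def utilization(stage_times):
    """Fraction of time each GPU is busy while waiting on the slowest stage."""
    slowest = max(stage_times)
    return [t / slowest for t in stage_times]


def balanced_throughput(stage_times):
    """Upper bound if the same total work were split evenly across the GPUs."""
    return len(stage_times) / sum(stage_times)


# Hypothetical per-micro-batch stage times in seconds; the last stage
# plays the role of the oversized LM head.
stage_times = [0.10, 0.10, 0.10, 0.25]

if __name__ == "__main__":
    print("pinned throughput:  ", steady_state_throughput(stage_times))
    print("per-GPU utilization:", utilization(stage_times))
    print("balanced bound:     ", balanced_throughput(stage_times))
```

Under these assumed numbers the pinned pipeline tops out at 4 micro-batches per second while three of the four GPUs sit idle more than half the time. An even split of the same work would allow roughly 7.3 per second.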

RoundPipe, posted to Hugging Face this week, proposes a schedule that decouples weight assignment from physical GPU placement and rotates responsibility through the pipeline. The paper claims multi-3090 and multi-4090 setups can hit fine-tuning throughput previously available only on data-center hardware. Combined with CPU offload, the practical claim is that fine-tuning a near-frontier-class open model no longer requires renting an 8xH100 node by the hour.
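Why rotation helps can be shown with a toy accounting exercise. This is not the paper's schedule: the round-robin rule, the stage times, and the function name are all assumptions for illustration, and the sketch ignores the cost of moving weights between GPUs, which a real system has to hide behind compute or offload.

```python
def per_gpu_busy_time(stage_times, num_microbatches, rotate):
    """Total compute seconds each GPU accumulates over a run.

    rotate=False: GPU g always runs stage g (weights bound to the GPU).
    rotate=True:  for micro-batch m, GPU g runs stage (g + m) % n, so
                  the heavy stage visits every GPU in turn (hypothetical rule).
    """
    n = len(stage_times)
    busy = [0.0] * n
    for m in range(num_microbatches):
        for gpu in range(n):
            stage = (gpu + m) % n if rotate else gpu
            busy[gpu] += stage_times[stage]
    return busy


# Hypothetical stage costs in seconds; the last stage is the heavy one.
stage_times = [1.0, 1.0, 1.0, 4.0]

if __name__ == "__main__":
    print("bound:  ", per_gpu_busy_time(stage_times, 4, rotate=False))
    print("rotated:", per_gpu_busy_time(stage_times, 4, rotate=True))
```

With these assumed costs, four micro-batches leave the bound layout with one GPU carrying 16 seconds of work and the others 4 each. The rotated layout gives every GPU 7 seconds. The total work is unchanged; only the busiest GPU's share shrinks, and that share is what caps throughput.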

The two cost curves were previously coupled. A team that wanted a custom fine-tune of a competitive model had to rent training hardware to produce it, then keep renting for inference or pay an API. With V4 weights and a consumer-GPU training path, that same workload can in principle live entirely on owned hardware. Existing 3090 and 4090 inventory becomes capital that runs both stages, not just one. Whether RoundPipe's reported numbers hold up outside the paper's setup will determine if the path is real or theoretical.

Google ships Gemini to cars with Google built-in

Google began rolling out Gemini as a replacement for Google Assistant in vehicles running Google built-in. The update handles natural conversation, vehicle-specific queries, and in-car settings adjustments. (theverge.com)

Apple Mac Mini sells out for months as Cook cites AI demand

Tim Cook told analysts on the earnings call that AI adoption ran ahead of Apple's forecasts, leaving Mac Mini supply gone for several months. The M-series desktop has become a default local-inference box for developers. (wired.com)

VS Code adds Copilot co-author tag to commits not touched by Copilot

A Microsoft pull request inserts "Co-Authored-by Copilot" into Git commits in VS Code regardless of whether the user invoked Copilot. Developers on the thread flagged it as polluting attribution and inflating Copilot usage metrics. (github.com)

Disneyland turns on face recognition at the gate

Disneyland began using face recognition to identify visitors, expanding biometric capture beyond the fingerprint scans the parks have used for years. The same week, NSA started testing Anthropic's Mythos Preview for vulnerability discovery. (wired.com)

Ars Technica maps Gemini's privacy defaults

Ars walked through Gemini's account settings and found defaults that route chats, voice, and uploaded files into Google's training pipeline unless users dig into nested toggles. Turning data sharing off in one product does not propagate to others. (arstechnica.com)

Trial filings detail Shivon Zilis as Musk's OpenAI back-channel

Court exhibits in Musk v. Altman show Shivon Zilis relayed messages between Musk and OpenAI leadership for years, including during the 2018 board exit. Zilis is a Neuralink executive and the mother of four of Musk's children. (wired.com)

California water researchers put AI consumption below public estimates

A California water blog post argues AI training and inference draw far less water than viral figures suggest, and that agricultural and cooling losses dominate the state's water budget. The post recommends regulators target measured datacenter draw, not headline-per-prompt math. (californiawaterblog.com)

Researchers use AI to rebuild the ribosome around 19 amino acids

A team applied protein-design models to redesign ribosomal components so translation can proceed without one of the standard 20 amino acids. The freed codon could be reassigned to non-natural amino acids for industrial protein synthesis. (arstechnica.com)

TechCrunch ranks AI dictation apps for 2026

TechCrunch tested current dictation apps across email, note-taking, and voice-driven coding, scoring accuracy, latency, and integration with editors. The piece is a buyer's guide aimed at developers replacing keyboard input with voice. (techcrunch.com)

BioticsAI founder details FDA path for clinical AI

On TechCrunch's Build Mode, BioticsAI CEO Robhy Bustami walked through how the company secured FDA clearance and structured fundraising around regulatory milestones. Bustami described keeping engineering velocity while clinical evidence work blocked release dates. (techcrunch.com)

Co-Evolving Policy Distillation paper targets multi-skill post-training collapse

The paper analyzes why mixed RLVR loses capabilities across skills and why sequential expert-then-distill pipelines fail to transfer teacher behavior. The authors propose co-evolving the student and expert policies during distillation to close the behavioral gap. (huggingface.co)