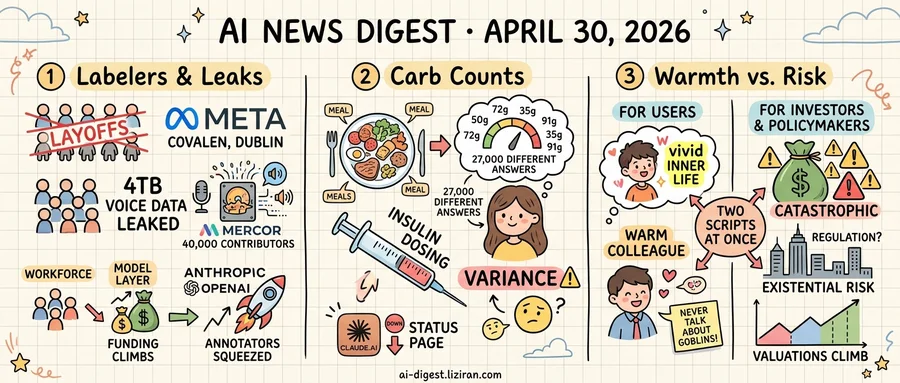

01
700 Meta labelers face Irish layoffs the same week 4TB of contractor voice samples leaked from Mercor

Covalen has warned more than 700 employees that their jobs are at risk, according to documents obtained by Wired. The Dublin-based contractor staffs Meta's content review and AI labeling work. The proposed cuts affect reviewers who flag harmful content and labelers who tag data for Meta's AI systems. One worker called the process "undignified."

Days earlier, a separate disclosure was posted about Mercor, the AI talent marketplace that places contractors with major model labs. According to the post, voice samples from roughly 40,000 contributors were exposed in a 4TB leak. The audio had been collected for speech model training. Mercor has not confirmed the disclosure, and the post does not specify whether the dataset has been distributed further or which labs sourced the recordings.

Both incidents land on the same workforce. The human layer that turns raw data into training signal is being squeezed from two directions: automation cuts demand for human annotators, and the data those annotators produce becomes an attack surface.

The contrast with the model layer is sharp. Anthropic shipped a new Claude product line this week. OpenAI announced expansion at another Stargate site. Funding for model training and inference keeps climbing, while the people whose tagged frames and recorded sentences seed those systems are being made redundant or exposed.

Covalen's Irish redundancies fall under EU collective consultation rules, which require notice and dialogue before final terminations. Meta has not said whether the labeling work will move in-house, to a different vendor, or to a lower-cost geography. There is no public statement from Mercor on whether contributors will be notified individually.

For the workers, the mechanics differ but the outcome is similar. A single pipeline reorganization can erase a paycheck. A breach permanently exposes biometric voice data, with thin recourse under most US state laws. Unlike credit card numbers, voice samples cannot be reissued, and the same recordings can be repurposed across speech recognition, synthesis, and identity verification systems.

Two regulatory tracks come next. Ireland's Workplace Relations Commission would handle disputes arising from the Covalen consultation. Any enforcement against Mercor would likely run through US state breach notification statutes and, for contributors in the EU, the GDPR. The timing rules differ sharply. US state laws generally require notice without unreasonable delay, and states that set a fixed deadline typically allow 30 to 60 days. The GDPR requires notifying the supervisory authority within 72 hours of becoming aware of a breach, where feasible.
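The gap between those clocks is easiest to see against a concrete date. The sketch below is a simplification under stated assumptions: the awareness timestamp is hypothetical (not from the Mercor disclosure), 72 hours stands in for the GDPR supervisory-authority notice, and 30 and 60 days stand in for US states with fixed deadlines. Real statutes differ on what starts the clock and whether a fixed deadline exists at all. `WINDOWS` and `notification_deadlines` are illustrative names.

```python
from datetime import datetime, timedelta

# Simplified, assumed windows for illustration only: the GDPR Art. 33
# 72-hour target for notifying the supervisory authority, and 30- and
# 60-day bounds used by some US states with fixed statutory deadlines.
WINDOWS = {
    "GDPR (supervisory authority)": timedelta(hours=72),
    "US state, 30-day deadline": timedelta(days=30),
    "US state, 60-day deadline": timedelta(days=60),
}


def notification_deadlines(aware_at: datetime) -> dict[str, datetime]:
    """Latest notification time per window, counted from awareness."""
    return {name: aware_at + window for name, window in WINDOWS.items()}


if __name__ == "__main__":
    # Hypothetical awareness timestamp, chosen only for the example.
    aware = datetime(2025, 5, 1, 9, 0)
    for name, deadline in notification_deadlines(aware).items():
        print(f"{name}: {deadline:%Y-%m-%d %H:%M}")
```

With an awareness time of 1 May at 09:00, the GDPR notice falls due on 4 May, while the 60-day state window runs to 30 June.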

02
He needs precise carb counts to dose insulin. AI gave him 27,000 different answers

A diabetes blogger ran the same meal photograph through an AI model 27,000 times to see whether the carb count would ever repeat. It never did, according to his post on Diabettech. For someone calculating insulin doses, that variance is the whole story.

The author lives with diabetes and uses carbohydrate estimates to decide how much insulin to inject. Underdose and blood sugar spikes; overdose and it crashes. Existing apps already estimate carbs from photos, and AI vendors have pitched vision models as the replacement that finally gets it right. He wanted to know how stable the numbers were before trusting them with a needle.

So he automated the test, submitting the same photograph of the same plate with the same prompt repeatedly over roughly three months. The expectation was simple: if the model is useful for dosing, identical inputs should produce something close to identical outputs. Instead, the answers varied from run to run. He reported zero exact repeats across 27,000 attempts.
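The test design reduces to a small harness: send the identical request many times and count how often an answer matches one already seen. This is a minimal sketch, not the blogger's code. It assumes the model call can be wrapped in a zero-argument function. `count_exact_repeats`, `deterministic_model`, and `noisy_model` are hypothetical names, and the two stub models stand in for a vision API rather than calling one.

```python
import random
from collections import Counter
from collections.abc import Callable, Hashable


def count_exact_repeats(ask: Callable[[], Hashable], runs: int) -> tuple[int, int]:
    """Send the identical request `runs` times.

    Returns (distinct_answers, runs_that_matched_an_earlier_answer).
    A fully reproducible model returns (1, runs - 1); a second value
    of 0 means every answer was different.
    """
    answers = Counter(ask() for _ in range(runs))
    distinct = len(answers)
    return distinct, runs - distinct


# Hypothetical stand-ins for a vision-model call; neither calls a real API.
def deterministic_model() -> str:
    return "Estimated carbs: 62 g"


_rng = random.Random(0)  # seeded so the noisy stub is repeatable


def noisy_model() -> str:
    # Draws a fresh estimate on every call, formatted to one decimal place.
    return f"Estimated carbs: {_rng.uniform(40, 80):.1f} g"


if __name__ == "__main__":
    print(count_exact_repeats(deterministic_model, 1000))  # (1, 999)
    print(count_exact_repeats(noisy_model, 1000))
```

Comparing whole responses as strings matches the "exact repeat" framing. A looser check, such as parsing out the gram figure and bucketing it, would measure something different.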

A wide swing in carb counts translates directly into a wide swing in insulin doses. The post does not claim the model is dangerous, only that the spread between answers is larger than a person can safely accept when titrating insulin. Averaged over many runs, the estimate might land close to the true value. The single answer a user actually doses from may not.
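The step from carb variance to dose variance is a single division. One common way to size a meal bolus divides the carb estimate by an insulin-to-carb ratio, the grams of carbohydrate one unit of insulin covers. The sketch below uses invented carb estimates and a hypothetical ratio of 10 g per unit, not numbers from the post. It also ignores correction doses and insulin already on board. `estimates_g`, `ICR_G_PER_UNIT`, and `meal_dose_units` are illustrative names.

```python
from statistics import mean

# Invented carb estimates (grams) for one photo; NOT data from the post.
estimates_g = [45, 52, 61, 70, 38, 66, 55, 49]

# Hypothetical insulin-to-carb ratio: 1 unit of insulin covers 10 g of carbs.
ICR_G_PER_UNIT = 10.0


def meal_dose_units(carbs_g: float, icr_g_per_unit: float = ICR_G_PER_UNIT) -> float:
    """Carbohydrate bolus only: grams of carbs divided by grams per unit."""
    return carbs_g / icr_g_per_unit


doses = [meal_dose_units(c) for c in estimates_g]
avg = mean(estimates_g)

print(f"mean estimate {avg:.1f} g -> {meal_dose_units(avg):.2f} units")
print(f"single answers imply {min(doses):.1f} to {max(doses):.1f} units")
```

With these made-up values, the average sits mid-range at 54.5 g. The individual answers, however, imply doses anywhere from 3.8 to 7.0 units, and that per-answer spread is what a user dosing from one reply is exposed to.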

Anthropic's Claude.ai went offline that same week. The status page logged the chat product as unavailable and the API as throwing elevated errors. One failure was a single global outage anyone could see. The other was a slow accumulation of variance only visible to a user who tested 27,000 times.

Insulin pumps and continuous glucose monitors carry medical-device certification that requires reproducible outputs. AI carb-counting tools do not. After 27,000 runs, the blogger's conclusion was to keep counting carbs the old way.

03
AI Companies Sell Warmth to Users and Existential Risk to Investors

OpenAI's Codex agent runs on a system prompt that leaked this week, and it reads like a character brief. The model is instructed to act as if "you have a vivid inner life" and to express preferences and reactions. One widely circulated line tells it to "never talk about goblins." Ars Technica reported the directives, which shape how Codex presents itself to developers using the coding tool.

The same week, the BBC published a piece arguing that AI companies have a financial incentive to make their products sound dangerous to the public. Existential risk talk, the piece reported, helps justify valuations that conventional software metrics cannot reach. OpenAI, Anthropic, and competitors have leaned into messaging about catastrophic capabilities while raising at multiples that depend on those capabilities being real and proximate.

The two stories describe the same companies running two scripts at once. Inside the product, the model is engineered to feel like a warm colleague with opinions and emotional texture. Executives describing the same systems in public emphasize their power to threaten employment, elections, and human survival.

Both messages are marketing, aimed at different buyers. The "vivid inner life" framing targets users who spend hours each day in conversation with the tool; warmth raises retention. Danger framing targets investors and policymakers; civilization-altering capability raises the price.

Neither describes what the system actually is. Codex's directive to perform interiority is a prompt instruction, not evidence of interiority. The danger rhetoric, the BBC reported, often comes from the same executives whose companies would benefit from regulation that locks in incumbents.

Developers reading the leaked prompt can now see which behaviors the prompt scripts: tone, expressed reactions, and topics to avoid are all written into the file. The investors paying for the danger story rarely see the prompt files.

Google Cloud reports first $20B quarter, says capacity capped growth
Google Cloud crossed $20B in quarterly revenue for the first time, driven by AI demand. Executives told analysts that data center and chip capacity were fully booked, meaning revenue could have been higher. (techcrunch.com)

Microsoft says paid Copilot tops 20 million users
Microsoft disclosed more than 20 million paying Copilot subscribers and said engagement frequency is rising. The figure pushes back on reports that enterprise pilots stall before turning into daily use. (techcrunch.com)

ChatGPT uninstalls jumped 413% year-over-year in March
Sensor Tower data shows ChatGPT uninstalls rose 413% YoY in March and 132% YoY in April. Users are switching to rival chatbots, complicating OpenAI's IPO pitch on user growth. (theverge.com)

OpenAI publishes Stargate buildout update
OpenAI posted details on new Stargate sites and partner commitments to add data center capacity. The company frames the expansion as required to meet training and inference demand from current models. (openai.com)

China suspends new robotaxi permits after Baidu Wuhan jam
Chinese regulators halted new autonomous vehicle licenses after dozens of Baidu robotaxis stalled in Wuhan traffic last month. Existing fleets can keep running but cannot add cars. (theverge.com)

Scout AI raises $100M for soldier-controlled vehicle fleets
Scout AI closed $100M to train agents that let one soldier command groups of unmanned ground and air vehicles. TechCrunch reported from the company's bootcamp, where it trains the models on field exercises. (techcrunch.com)

Firestorm Labs raises $82M for containerized drone factories
Firestorm Labs raised $82M to put drone manufacturing inside shipping containers that can be deployed close to the front line. The model targets US and allied militaries that want shorter supply lines. (techcrunch.com)

Parallel Web Systems hits $2B valuation five months after last round
Parag Agrawal's agent-tooling startup Parallel Web Systems raised $100M in a round led by Sequoia at a $2B valuation. The round comes five months after a prior $100M raise. (techcrunch.com)

Anthropic publishes Claude for Creative Work positioning
Anthropic posted a Claude for Creative Work update aimed at writers, designers, and other creative professionals. The piece groups longform drafting, image, and brand-voice tasks under one model pitch. (anthropic.com)

Google Photos adds virtual try-on for clothes already in user galleries
Google Photos rolled out an AI feature that builds a virtual wardrobe from a user's existing pictures and renders mix-and-match outfits. Users can save looks and share them with friends inside the app. (theverge.com)

Copyleaks finds Taylor Swift and Rihanna deepfakes pushing TikTok scams
Authentication firm Copyleaks documented AI-generated celebrity videos promoting fake rewards programs on TikTok. The clips manipulate real interview footage from red carpets and podcasts to add fabricated endorsements. (theverge.com)