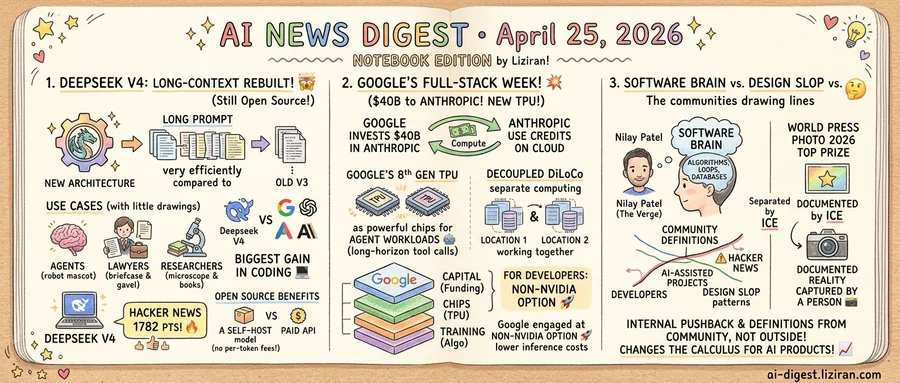

01 DeepSeek previews V4 with a rebuilt long-context architecture, still open source

On Friday, the Chinese lab DeepSeek posted V4 to its API docs without a launch event or marketing blitz. What went live is a preview of a new flagship. The most consequential change sits in the model's foundation: a redesigned architecture that processes much longer prompts more efficiently than V3, according to MIT Technology Review.

Context length has become the pinch point for code and document work. Longer prompts mean agents can keep more of a repository in memory, lawyers can feed longer filings, and researchers can work across papers without chunking. DeepSeek has not published full benchmark details for V4. The company says V4 competes with closed systems from Anthropic, Google, and OpenAI. Coding is the area of biggest gain, according to The Verge.

The preview label is load-bearing. V4 is not generally available as a stable release; developers are testing an early build against the API while DeepSeek iterates. A year ago V3 drew a different kind of attention, rattling US equities because it hit frontier performance on a fraction of the reported training budget. V4 arrives into a market that now expects cheap, capable Chinese releases rather than being surprised by them.

Developer interest is running high. The V4 thread on Hacker News crossed 1,782 points and 1,388 comments within a day, among the busiest discussions on the site this month. Commenters focused on practical questions: how the new attention scheme handles long documents, whether the preview is reproducible, and whether weights follow the same open license as V3.

Like V3, V4 is open source. A lab with GPUs can self-host the model rather than paying per-token rates to Anthropic or OpenAI. For teams running code review, document QA, or agent workloads at high volume, that changes the equation: the build-versus-buy math tilts away from hosted APIs whose frontier pricing has moved up, not down. Enterprise buyers weighing multi-year API contracts now have a counter-offer that did not exist last week. The preview status limits production commitments for now, but developers can still benchmark V4 against their own workloads while DeepSeek iterates. For closed vendors, that creates a pricing reference point for the next contract cycle.
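The build-versus-buy math can be sketched as a simple break-even calculation. This is a minimal illustration, not a pricing model: the function name and every dollar figure below are hypothetical placeholders, not DeepSeek, Anthropic, or OpenAI prices, and real comparisons would also need to account for utilization, staffing, and quality differences.

```python
def breakeven_tokens_per_month(
    fixed_monthly_cost: float,
    api_price_per_million: float,
    selfhost_marginal_per_million: float = 0.0,
) -> float:
    """Monthly token volume above which self-hosting costs less than a hosted API.

    fixed_monthly_cost: hypothetical GPU lease plus operations cost, in dollars.
    api_price_per_million: hypothetical hosted price per million tokens.
    selfhost_marginal_per_million: hypothetical power/overhead per million tokens.
    """
    saving_per_million = api_price_per_million - selfhost_marginal_per_million
    if saving_per_million <= 0:
        # Self-hosting never catches up if each token costs as much as the API.
        raise ValueError("self-hosting never breaks even at these prices")
    return fixed_monthly_cost / saving_per_million * 1_000_000


if __name__ == "__main__":
    # Hypothetical inputs: $18,000/month fixed, $10 per million hosted,
    # $1 per million marginal when self-hosting.
    tokens = breakeven_tokens_per_month(18_000, 10.0, 1.0)
    print(f"Break-even volume: {tokens:,.0f} tokens per month")
```

Under these made-up inputs, a team would need to process more tokens per month than the printed figure before self-hosting pays off; below it, the hosted API stays cheaper.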

02 Google's full-stack week: $40B to Anthropic, agentic-era TPUs, a new training algorithm

Bloomberg and TechCrunch reported within hours of each other that Google will invest up to $40 billion in Anthropic, structured as a mix of cash and compute credits. The arrangement returns a portion of the investment to Google as Cloud revenue when Anthropic spends the credits.

Two days earlier Google described its eighth-generation TPU as two chips built around agent workloads. Long-horizon inference and tool-calling patterns differ from the single-pass serving that earlier TPU generations targeted. Earlier in the same week, DeepMind published Decoupled DiLoCo, a distributed training algorithm focused on resilience across geographically separated clusters.

Each announcement landed at a different layer of the AI stack: the customer relationship, the chip, and the training algorithm. Capital decides which lab runs at scale on whose hardware. Per-token economics depend on silicon choice. The size of a single training run hits a coordination ceiling before distributed algorithms catch up.

Most competitors engage at one layer apiece. Microsoft has Azure and an OpenAI partnership but does not ship frontier-scale training silicon. Nvidia's product is the silicon itself, not lab-formation financing. Meta builds and trains internally and does not extend its compute as a financing instrument. Among rival hyperscalers, only Amazon engages on more than one layer, holding both Anthropic exposure and Trainium silicon.

The cash-and-compute structure also changes what a fundraising round means for a frontier lab. Anthropic's earlier multi-billion-dollar arrangement with AWS used a similar mechanic. Two of the lab's largest backers now denominate part of their investment in their own hardware, locking pricing and roadmap commitments into the deal.

For developers building on Anthropic models, the second-order effect is supply. The agentic-era TPUs add a non-Nvidia option for inference workloads while GPU allocation remains the bottleneck. Tool-heavy agent applications fan out to dozens of model calls per user request, which puts pricing closer to silicon cost than chatbot-style turn-taking does.
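A rough sketch of why fan-out matters for pricing: the per-request cost scales linearly with the number of model calls. The function name, call counts, token counts, and price below are all hypothetical, chosen only to make the arithmetic visible; they are not figures from Google or Anthropic.

```python
def cost_per_request(calls: int, tokens_per_call: int, price_per_million: float) -> float:
    """Dollar cost of one user request that triggers `calls` model calls."""
    total_tokens = calls * tokens_per_call
    return total_tokens * price_per_million / 1_000_000


if __name__ == "__main__":
    # Hypothetical inputs: 2,000 tokens per call at $5 per million tokens.
    chat = cost_per_request(calls=1, tokens_per_call=2_000, price_per_million=5.0)
    agent = cost_per_request(calls=30, tokens_per_call=2_000, price_per_million=5.0)
    print(f"chat turn: ${chat:.2f}, agent request: ${agent:.2f}")
    print(f"fan-out multiplier: {agent / chat:.0f}x")
```

With every other input held fixed, the multiplier equals the number of calls, so any per-token saving from cheaper silicon is amplified by the same factor for agent workloads.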

03 The Verge named it 'software brain.' Hacker News flagged 'design slop.' World Press Photo defined what a photo is.

On The Verge's Decoder podcast this week, host Nilay Patel gave a name to a worldview he's been hearing from AI optimists. He calls it "software brain": a view that fits every problem into algorithms, databases, and loops. The episode brought on developer Simon Willison to argue, per its title, that the people do not yearn for automation.

The same week, two other professional communities drew lines of their own.

A blog post on adriankrebs.ch proposed scoring Show HN submissions for the visual patterns the author calls "design slop." It landed on Hacker News with 330 points and 233 comments, mostly developers comparing the fingerprints they now reflexively associate with AI-assisted projects. For founders launching on Show HN, that creates a new evaluation criterion alongside problem fit and execution: whether the design reads as templated.

World Press Photo took a different route. Its 2026 contest awarded the top prize to "Separated by ICE," a photojournalism entry. The Verge framed the choice as the contest's answer to "what is a photo" in an era of generative tools: documented reality, captured by a person.

Each line came from inside the relevant community, not from regulators or vendors. Decoder gave the worldview a name. On Hacker News, a developer post turned the visual fingerprint into a score. World Press Photo answered the question through what it awarded.

The timing is coincidence, but together the moves tell a story of their own. Two years of AI optimism told these communities they would be reshaped from the outside. The pushback is now coming from inside, in the form of definitions the community itself has set.

That changes the calculus for AI products. Outside opposition can be reframed as misunderstanding the technology. When the line comes from the people AI is pitched to replace or augment, that dismissal stops working.

Meta locks up millions of Amazon's homegrown CPUs for AI agents
Meta signed a multi-year deal for millions of Amazon's in-house CPUs to run agentic AI workloads. The buy targets CPU inference for agent orchestration rather than the GPU compute used to train frontier models.
techcrunch.com

Tim Cook plans September exit; hardware chief Ternus takes Apple CEO role
Cook will step down in September after more than a decade leading Apple, handing the role to John Ternus. Ternus takes over with the App Store's 30% commission under regulatory pressure.
techcrunch.com

Musk-Altman fraud trial opens Monday in Oakland
A jury trial begins April 27 over whether OpenAI defrauded Elon Musk during its for-profit conversion. Musk co-founded OpenAI in 2015, left in 2018, and now runs rival xAI.
theverge.com

US accuses China of "industrial-scale" AI theft, weighs sanctions
The Trump administration alleged China stole US AI weights and training data at industrial scale, with sanctions reportedly on the table ahead of the Trump-Xi summit. Beijing called the charge slander.
arstechnica.com

Samsung's smartphone arm faces first-ever annual loss as AI drains DRAM supply
Internal forecasts show Samsung's mobile division could post its first yearly loss because the AI-driven memory shortage is pulling DRAM toward higher-paying data center buyers. The squeeze is hitting both component costs and finished-phone margins.
arstechnica.com

Data center buildouts on track to emit more CO2 than entire nations
Planned facilities from OpenAI, Meta, xAI, and Microsoft together could emit more than 129 million tons of greenhouse gases per year. The combined figure would exceed annual emissions of multiple mid-sized countries.
arstechnica.com

Anthropic and NEC partner on Japan's largest AI engineering workforce
The two companies announced a joint program to build what they describe as Japan's largest AI engineering team. The collaboration targets Claude deployment across NEC's enterprise customer base.
anthropic.com

Project Maven cut Iran-strike targeting to nearly twice Iraq War pace
US forces hit more than 1,000 targets in the first 24 hours of an assault on Iran, nearly double the volume of 2003's "shock and awe" campaign. The acceleration came from AI targeting systems led by the Maven Smart System.
theverge.com

ComfyUI raises $30M at $500M valuation
ComfyUI, the node-based interface giving creators granular control over AI image, video, and audio generation, closed a $30 million round. The valuation reflects demand for fine-grained control over generative pipelines.
techcrunch.com

Mac minis flip on eBay as Apple stock dries up under local-AI demand
Resellers are posting marked-up Mac mini listings after Apple ran out of stock. Buyers favor the compact desktop as a cheap host for running local LLMs and on-device AI workflows.
techcrunch.com

Man faces 5 years for AI-generated wolf sighting hoax
Authorities arrested a man for posting AI-generated images of a runaway zoo wolf "for fun" during an active search. The wolf had escaped by burrowing out of its enclosure and gripped national attention.
arstechnica.com