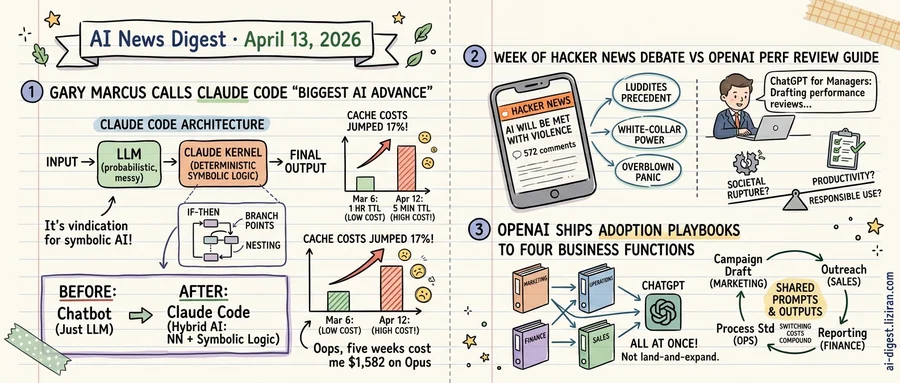

01 Marcus Calls Claude Code the Biggest Advance Since LLMs. An Unannounced Cache Change Raised Bills 17%.

Gary Marcus has spent decades telling the AI industry it's wrong. He debated Yoshua Bengio in 2019. His 2001 book The Algebraic Mind argued pure neural networks would hit a wall. When every lab announced breakthroughs, Marcus published rebuttals. So when he called Claude Code "the single biggest advance in AI since the LLM," the endorsement carried unusual weight.

Marcus's argument is structural, not promotional. He points to leaked source code: a file called print.ts containing 3,167 lines, 486 branch points, and 12 levels of nesting inside a deterministic symbolic loop. Claude Code, he writes, is "NOT a pure LLM. And it's not pure deep learning. Not even close." In his telling, this is the neurosymbolic architecture he has advocated for decades, finally arriving through Anthropic's engineering. He still calls it far from perfect. But the concession from a persistent critic registered as a signal.

The cost side of the equation was documented on GitHub.

A developer analyzed 119,866 API calls across two machines and found that on March 6, Anthropic shifted Claude Code's default cache TTL from one hour to five minutes. Cache entries that previously persisted for an hour now expired twelve times sooner, forcing repeated rewrites. Across the analyzed period, that usage overpaid by $949 on Sonnet and $1,582 on Opus, a 17.1% cost increase for both models. A five-minute cache write costs 12.5 times as much as a cache read, so every expiry that turns a cheap read into a rewrite adds up, and heavy users are hit hardest.
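
To see why idle gaps drive the bill, the sketch below counts cache writes and reads for one conversation prefix under each TTL. It is a minimal model, not the developer's analysis: it assumes cost multipliers of 1.25x (five-minute write), 2x (one-hour write), and 0.1x (read) relative to the base input price, which match Anthropic's published prompt-caching rates at the time of writing but should be checked against current pricing. The request patterns and the 100,000-token prefix are hypothetical.

```python
from dataclasses import dataclass

# Cost multipliers relative to the base input-token price. Assumed values
# (Anthropic's published prompt-caching rates at the time of writing);
# check current pricing before relying on them.
WRITE_MULT = {300: 1.25, 3600: 2.0}  # TTL in seconds -> cache-write multiplier
READ_MULT = 0.1                       # cache-read multiplier


@dataclass
class CacheStats:
    writes: int
    reads: int
    cost_units: float  # cost in "base input tokens" for the cached prefix


def simulate(request_times: list[float], prefix_tokens: int, ttl: int) -> CacheStats:
    """Count cache writes and reads for one conversation prefix.

    Simplifying assumptions: the whole prefix is one cache entry, every
    request re-sends it unchanged, and each hit refreshes the TTL.
    """
    writes = reads = 0
    expires_at = float("-inf")
    for t in sorted(request_times):
        if t < expires_at:
            reads += 1   # entry still alive: cheap read
        else:
            writes += 1  # entry expired (or never written): pay for a write
        expires_at = t + ttl  # a write or a hit restarts the TTL clock
    cost = prefix_tokens * (writes * WRITE_MULT[ttl] + reads * READ_MULT)
    return CacheStats(writes, reads, cost)


# Hypothetical session: six bursts of five requests 60 s apart,
# separated by 10-minute pauses (a new burst starts every 840 s).
bursty = [b * 840 + k * 60 for b in range(6) for k in range(5)]
# Hypothetical session: 30 requests 60 s apart, no pauses.
steady = [k * 60 for k in range(30)]

for name, times in [("bursty", bursty), ("steady", steady)]:
    for ttl in (300, 3600):
        s = simulate(times, prefix_tokens=100_000, ttl=ttl)
        print(f"{name:6} ttl={ttl:>4}s writes={s.writes:>2} reads={s.reads:>2} cost={s.cost_units:,.0f}")
```

In the bursty pattern, each 10-minute pause expires the five-minute entry, so it needs six writes instead of one and costs roughly twice as much as the one-hour TTL. In the steady pattern, no gap exceeds five minutes, and the five-minute TTL comes out cheaper.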

Anthropic's Jarred Sumner responded that the change was intentional. The client picks the TTL per request based on expected reuse, he said, and a blanket one-hour TTL would cost more because one-hour writes carry a higher per-token rate. The issue, which collected 171 thumbs-up reactions, was closed as NOT_PLANNED.
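
Sumner's argument amounts to a break-even calculation. The sketch below assumes the same multipliers (1.25x five-minute write, 2x one-hour write, 0.1x read, taken from Anthropic's published caching rates at the time of writing) and reduces "expected reuse" to a single hypothetical input: how many idle gaps between five minutes and an hour the client expects before it abandons the prefix. `choose_ttl` is an illustrative heuristic, not Claude Code's actual logic, and it ignores gaps longer than an hour, which expire both caches.

```python
# Cost multipliers relative to the base input-token price. Assumed values
# (Anthropic's published prompt-caching rates at the time of writing).
WRITE_5M = 1.25  # five-minute cache write
WRITE_1H = 2.0   # one-hour cache write
READ = 0.1       # cache read


def extra_cost_of_5m(mid_pauses: float) -> float:
    """Cost of a 5-minute TTL minus a 1-hour TTL, per cached prefix token.

    Assumes one initial write, that hits refresh the TTL, and that
    `mid_pauses` counts idle gaps longer than 5 minutes but under an hour.
    Each such gap turns a read into a fresh write under the 5-minute TTL.
    """
    upfront_premium = WRITE_1H - WRITE_5M  # 0.75, paid once for the 1h write
    penalty_per_pause = WRITE_5M - READ    # 1.15, a rewrite instead of a read
    return mid_pauses * penalty_per_pause - upfront_premium


def choose_ttl(expected_mid_pauses: float) -> str:
    """Hypothetical per-request heuristic: pick whichever TTL is cheaper."""
    return "1h" if extra_cost_of_5m(expected_mid_pauses) > 0 else "5m"


# Break-even: the expected pause count at which both TTLs cost the same.
break_even = (WRITE_1H - WRITE_5M) / (WRITE_5M - READ)
print(f"break-even pauses: {break_even:.2f}")
for pauses in (0, 0.5, 1, 3):
    print(pauses, choose_ttl(pauses))
```

Under these assumptions, a session with no mid-length pauses is cheaper on the five-minute TTL, which supports Sumner's point. A single expected pause of 5 to 60 minutes tips the balance toward the one-hour TTL, which is the pattern the developers in the thread were describing.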

Developers pushed back. One reported needing to compact sessions every time they paused coding for five minutes. Another spelled out the arithmetic: "A 1h → 5min cache TTL change means cache_create operations happen 12x more frequently for the same session." The phrase that kept recurring in the thread: "silent downgrades erode trust."

Every agentic coding tool faces the same arithmetic. The capability Marcus describes is real. But agentic workflows consume tokens at rates that make traditional API pricing volatile. A developer running Claude Code through a long debugging session generates billions of cache-read tokens. Whether any usage-based pricing model can sustain that intensity without surprising developers on their invoices is the open question for the entire category.
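
Agentic sessions consume tokens quickly because each turn typically re-sends the conversation so far, so cached-read volume grows roughly with the square of session length. The sketch below uses a deliberately simple, hypothetical model: a fixed starting context, constant growth per turn, and no context-window limit or compaction. The parameter values are illustrative, not measurements.

```python
def cumulative_cache_reads(turns: int, base_context: int, growth_per_turn: int) -> int:
    """Total prefix tokens re-read over one agentic session.

    Hypothetical model: turn i (0-based) re-sends a context of
    base_context + i * growth_per_turn tokens. Summing the arithmetic
    series gives n*s + d*n*(n-1)/2, which grows quadratically with the
    number of turns. Context-window limits and compaction are ignored.
    """
    n, s, d = turns, base_context, growth_per_turn
    return n * s + d * n * (n - 1) // 2


# Hypothetical debugging session: 20k-token starting context,
# each tool call or reply adds about 300 tokens.
short = cumulative_cache_reads(250, 20_000, 300)
long_run = cumulative_cache_reads(500, 20_000, 300)
print(f"{short:,} vs {long_run:,} ({long_run / short:.1f}x for 2x the turns)")
```

Doubling the number of turns here more than triples total cached reads, which is why a long session's token bill is hard to predict from per-call prices alone.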

02 An Essay Predicting Anti-AI Violence Drew 572 Developer Comments, and Most Took It Seriously

Hacker News is a site where compiler optimizations and database benchmarks dominate. This week, one of its most-discussed threads was an essay titled "AI Will Be Met with Violence, and Nothing Good Will Come of It." The post collected 324 upvotes and 572 comments, rare numbers for anything that isn't a product launch or open-source release.

Published on The Algorithmic Bridge, the essay argues that public backlash against AI will eventually turn physical. Not because people are irrational, but because displacement at scale produces predictable social friction. The comment section didn't dismiss the premise. Engineers, founders, and developers engaged seriously, many sharing unease about deployment pace and the distance between what AI companies promise and what workers experience.

The signal isn't the prediction itself. Warnings about AI backlash appear regularly in mainstream outlets. What set this apart was the audience. Hacker News skews toward builders: people who ship AI tools, integrate models into products, fine-tune on weekends. When this cohort treats a prediction of anti-AI violence as worth serious debate, it carries different weight than an op-ed in a general-interest publication.

The same week, OpenAI published "Responsible and Safe Use of AI," a guide covering best practices for accuracy, transparency, and safe prompting. Its framing is individual: how you should use AI tools well. Nothing in it addresses workforce displacement, concentrated economic effects, or the structural anxieties that animated the thread.

That disconnect is what the discussion surfaced. OpenAI's guide isn't wrong. Responsible individual use matters. But the developer conversation wasn't about prompt hygiene or hallucination rates. It was about what happens when automation outpaces job creation, and whether anyone building AI has a plan beyond telling users to be careful.

No consensus emerged. But a thread full of engineers taking the premise of anti-AI violence seriously points to a concern that the industry's public-facing risk frameworks have not yet addressed.

03 OpenAI Organizes Its Academy by Corporate Department, Not AI Capability

Four new courses launched on OpenAI's Academy on the same day: one for marketing, one for operations, one for finance, one for customer success. None teach what ChatGPT can do in general. Each teaches a specific department how to rebuild its existing workflows around ChatGPT.

The organizing principle reveals more than the content. Those four functions are the classic buying centers in enterprise SaaS sales. Salesforce built Trailhead around this exact structure over a decade ago, and HubSpot Academy followed the same model. The playbook: don't sell to IT. Train individual departments until the tool is too embedded to remove.

Each course maps to daily departmental work, not abstract AI concepts. Marketing covers campaign planning and performance analysis; ops targets process standardization; finance focuses on reporting and forecasting; customer success addresses churn reduction and renewals. The framing is "redo your current job in ChatGPT," not "learn what AI can do."

That framing produces a specific kind of lock-in. When a marketing manager builds campaign briefs in ChatGPT and a finance analyst pulls forecasts there, each develops product-specific habits. The switching cost stops being technical and becomes organizational. Retraining four departments on a competitor's interface costs more than any subscription difference. The barrier is behavioral, not contractual.

These four courses launched alongside more than 20 other Academy offerings covering data analysis, software engineering, HR, and other functions. OpenAI is not running a one-off experiment. It is building a scaled education ecosystem to make ChatGPT the default tool inside entire organizations. The department-specific structure creates internal champions: employees whose daily productivity depends on the tool they trained on.

For Anthropic and Google, the competitive surface changes. Matching model capabilities alone is one problem. Dislodging an installed base of department-trained users is a harder one. When four teams inside a company learn to do their jobs in one AI tool, procurement defaults to renewal. The company that trains the workforce defines the workflow.

Stalking Victim Sues OpenAI, Alleges ChatGPT Reinforced Abuser's Behavior
A woman filed suit against OpenAI claiming ChatGPT amplified her stalker's delusional fixation. The lawsuit alleges OpenAI ignored three warnings about the user, including its own internal mass-casualty flag, and took no action to restrict the account. techcrunch.com

Trump Officials Reportedly Pushing Banks to Pilot Anthropic's Mythos
Senior administration figures have encouraged major banks to test Anthropic's Mythos model, per TechCrunch. The Department of Defense recently classified Anthropic as a supply-chain risk. techcrunch.com

Anthropic Temporarily Banned OpenClaw's Creator from Claude Access
Anthropic suspended the developer behind OpenClaw, the open-source AI agent that can run on Claude, after Claude's pricing changed for OpenClaw users last week. Details on the ban's duration and specific trigger remain undisclosed. techcrunch.com

Apple Testing Four Hardware Designs for Consumer Smart Glasses
Apple is evaluating four distinct form factors for smart glasses, per TechCrunch. The project is a step back from earlier plans for a full lineup of mixed and augmented reality wearables beyond the Vision Pro. techcrunch.com

Leaked "SteamGPT" Files Suggest Valve Building AI Moderation System
Internal Valve files reference a system called "SteamGPT" designed to help moderators screen suspicious activity on Steam. The tool targets security review and content moderation rather than user-facing features. arstechnica.com

Anthropic Drew the Most Attention at San Francisco's HumanX Conference
Anthropic was the dominant presence at HumanX, San Francisco's AI-focused conference, per TechCrunch. Claude drew the most discussion across panels and hallway conversations. techcrunch.com

Gallup: Gen Z Growing Skeptical of AI but Still Using It Daily
A Gallup survey of nearly 1,600 Americans aged 14 to 29 found declining enthusiasm for AI among Gen Z. Usage in school and work remains steady despite the attitude shift. theverge.com

AI Models Fail at Premier League Match Predictions, Grok Performs Worst
Ars Technica tested soccer prediction accuracy across models from Google, OpenAI, Anthropic, and xAI. All performed poorly; xAI's Grok ranked last. arstechnica.com