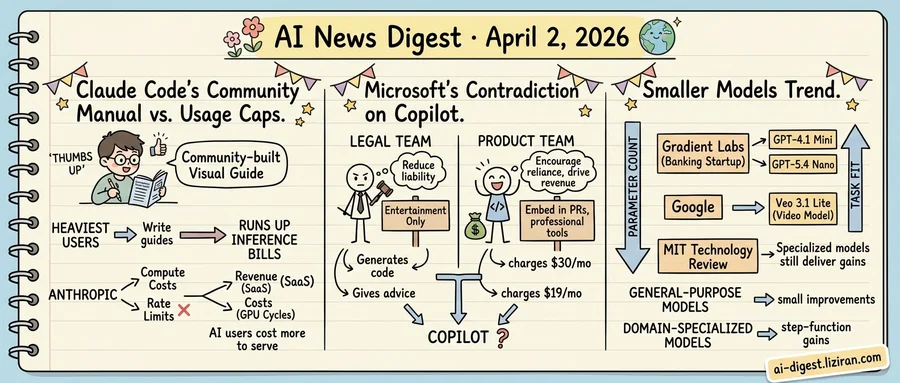

01 Developers Built Claude Code's Missing Manual the Same Week They Hit Its Rate Limits

Someone outside Anthropic published a visual guide to Claude Code, and it resonated instantly. "Claude Code Unpacked," a visual walkthrough of the tool's internals and workflows, collected over 1,000 upvotes on Hacker News last week, one of the platform's top developer posts for March. The discussion thread read less like product feedback and more like a user community organizing around a tool its maker hadn't fully documented.

Developers don't typically build polished educational resources for commercial software they pay to use. That's the vendor's job. The Unpacked guide isn't a casual blog post. It's a structured reference, the kind of resource you'd expect from a vendor's developer relations team. But Claude Code's heaviest users have taken on that role themselves. They share prompts, trade configuration files, and now write each other's onboarding materials.

The same week the guide went viral, a different story landed. The Register reported that Claude Code subscribers were hitting usage caps "way faster than expected." Paying users described mid-session cutoffs during active coding work, according to complaints compiled in the report. For a tool built around extended agentic sessions, where Claude writes, tests, and iterates on code autonomously, an interruption doesn't degrade performance gradually. It kills the session.

The two stories arrived days apart, but they describe the same bind. Users most invested in Claude Code are the ones consuming the most compute. Traditional SaaS companies celebrate that kind of engagement: more usage means more lock-in at near-zero marginal cost. AI tools don't work that way. Every agentic coding session burns GPU cycles Anthropic pays for in real dollars. A user running Claude Code for an hour of autonomous debugging costs far more to serve than one sending occasional chat queries. The users building the community are also running up the largest inference bills.

Anthropic has acknowledged the demand pressure. The company's subscriber base reportedly doubled in recent months, compounding the compute load from longer, more autonomous sessions. Rate limits remain the blunt instrument for managing capacity when supply can't match adoption.

The community hasn't turned hostile yet. Forum tone leaned toward frustration, not abandonment. But users who invest time building a tool's ecosystem expect reliability in return. When sessions cut off mid-task, that expectation erodes.

02 Microsoft Calls Copilot "Entertainment Only" While Pushing It Into Code Review

Microsoft's terms of use for Copilot include a clause that would surprise most paying customers: the AI assistant is provided "for entertainment purposes only." The language sits in the individual terms of service, unqualified and unhidden. Under this framing, nothing Copilot produces should be relied upon for professional work.

The same month, GitHub, which Microsoft acquired for $7.5 billion, pulled back a feature that placed Copilot promotional content directly into pull request pages. Those ads sat in the code review interface, where engineers evaluate changes before they reach production. GitHub removed them after developer backlash. But the impulse revealed the product team's direction: embed Copilot deeper into the workflows where developers make their most consequential decisions.

These moves originate from different divisions solving opposite problems. The legal team needs Copilot's liability surface as small as possible. "Entertainment only" is the sharpest version of that instinct. If the product hallucinates, generates vulnerable code, or provides harmful advice, the disclaimer puts the risk on the user. Courts have generally treated entertainment classifications as strong shields against negligence claims.

The product team operates under a different logic. Copilot's commercial value depends on developers treating it as a real tool in real workflows. Microsoft charges $30 per month for Copilot Pro. GitHub Copilot costs $19 per month for individual developers. Enterprise tiers run higher. Revenue growth requires deeper integration, not less. Placing ads inside pull requests treated Copilot as something developers should want at the moment of highest professional stakes.

One group writes language designed to prevent reliance. The other builds features designed to encourage it. Both report to the same CEO.

The "entertainment" label applies to every output. It draws no distinction between a generated limerick and a generated code refactor. Under the current terms, both carry the same legal classification.

That gap holds as long as no one sues. The first product liability claim will force Microsoft to argue that Copilot is either a professional tool worth its subscription price or a toy its users should never have trusted.

03 AI Vendors Ship Smaller Models as the 10x Scaling Era Ends

A banking startup picked mini models over flagships. Google launched a video model it calls "Lite" without qualifier. MIT Technology Review declared the age of massive capability jumps finished. Three uncoordinated signals, one direction.

Gradient Labs, which builds AI account managers for banks, chose GPT-4.1 and GPT-5.4 mini and nano for its production stack. Not the flagship. The startup optimized for low latency and instruction-following consistency, according to an OpenAI case study. Its agents handle full customer support workflows at scale. For that job, a model that responds fast and follows rules reliably beats one built to solve PhD-level math.

Google released Veo 3.1 Lite through the Gemini API in paid preview, positioning it as the company's "most cost-effective video generation model." The naming tells you where product strategy has moved. "Lite" is the offering, not the compromise.

MIT Technology Review published an analysis framing the shift in structural terms. General-purpose models now improve in increments, the piece argues, while domain-specialized models still deliver step-function gains. It calls customization "an architectural imperative": fusing a model with proprietary organizational data produces the kind of leaps that frontier scaling no longer reliably delivers.

These signals come from different market layers. A startup chose its deployment stack, a platform shaped its product line, and a publication reframed the technical consensus. Yet they trace one structural turn: value in AI is migrating from parameter count to task fit.

Model providers now compete less on raw scale and more on pricing tiers and fine-tuning tooling. Customers gain negotiating power when "good enough" has its own SKU. Builders who spent two years waiting for the next frontier release are shipping production systems on smaller models that already work.

Gig Workers Strap iPhones to Their Heads to Train Humanoid Robots

Robotics companies now recruit remote gig workers, including medical students in Nigeria, to record full-body motion data from home using smartphone cameras and ring lights. The footage feeds training datasets for humanoid robot locomotion and manipulation. (technologyreview.com)

Lingshu-Cell Models Cellular States With Masked Discrete Diffusion

Researchers released Lingshu-Cell, a generative model that learns transcriptomic state distributions and simulates how cells respond to perturbations. The masked discrete diffusion approach goes beyond static single-cell representations to enable conditional generation of cellular states. The work targets virtual cell modeling, a longstanding goal in computational biology. (huggingface.co)

Project Imaging-X Catalogs 1,000+ Open-Access Medical Imaging Datasets

A new survey maps over 1,000 publicly available medical imaging datasets suitable for training foundation models. The catalog addresses a key bottleneck: clinical expertise requirements and privacy constraints have kept medical imaging data fragmented. The resource aims to accelerate development of general-purpose medical AI models. (huggingface.co)

FIPO Replaces Uniform Token Rewards With Fine-Grained Credit Assignment for Reasoning

A new RL algorithm called Future-KL Influenced Policy Optimization targets a known weakness in GRPO-style training: outcome-based rewards treat every token in a reasoning chain equally. FIPO distinguishes critical logical steps from filler tokens, assigning credit at a finer granularity. The authors argue this removes a performance ceiling on reasoning tasks. (huggingface.co)
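To make the "every token treated equally" point concrete, here is a minimal sketch of GRPO-style group-relative advantages broadcast across a response's tokens. It is not FIPO's algorithm: the `reweight_tokens` helper and its weights are hypothetical, included only to show what a finer-grained signal could look like, and the use of the population standard deviation is an assumption.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards):
    """Normalize each sampled response's outcome reward against its group."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # assumption: population std; implementations vary
    if sigma == 0:
        # All responses scored the same, so there is no learning signal.
        return [0.0 for _ in rewards]
    return [(r - mu) / sigma for r in rewards]


def broadcast_to_tokens(advantage, num_tokens):
    """Outcome-based credit: every token inherits the same advantage."""
    return [advantage] * num_tokens


def reweight_tokens(advantage, token_weights):
    """Hypothetical finer-grained variant: scale the advantage per token.

    The weights are illustrative placeholders, not FIPO's actual rule.
    """
    return [advantage * w for w in token_weights]


# Four sampled answers to one prompt: 1.0 = correct, 0.0 = incorrect.
rewards = [1.0, 0.0, 0.0, 1.0]
advantages = group_relative_advantages(rewards)

# Uniform credit for the first response's five tokens.
uniform = broadcast_to_tokens(advantages[0], 5)

# Illustrative weights that emphasize the two "critical step" tokens.
weighted = reweight_tokens(advantages[0], [0.2, 0.2, 2.0, 2.0, 0.6])

print(uniform)
print(weighted)
```

In the uniform case, a filler token and a decisive reasoning step receive identical credit, which is the weakness the brief describes.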

TAPS Shows Draft Model Training Data Matters More Than Architecture for Speculative Decoding

Researchers trained lightweight draft models on domain-specific data (math, chat, mixed) and measured acceptance rates against larger target models. Task-matched training distributions consistently outperformed generic drafters. The finding suggests speculative decoding gains depend heavily on draft-target distribution alignment, not just model size. (huggingface.co)
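Why alignment drives acceptance can be seen in a toy calculation. Under the standard speculative sampling rule, a token x drawn from the draft distribution q is accepted with probability min(1, p(x)/q(x)), where p is the target distribution, so the expected per-token acceptance is the sum over the vocabulary of min(p(x), q(x)). The sketch below applies that formula to made-up three-token distributions; the numbers are illustrative assumptions, not results from the TAPS paper.

```python
def expected_acceptance(target, draft):
    """Expected probability that one draft token is accepted.

    Drawing x from the draft q and accepting with min(1, p(x)/q(x))
    gives an overall acceptance probability of sum_x min(p(x), q(x)).
    """
    vocab = set(target) | set(draft)
    return sum(min(target.get(t, 0.0), draft.get(t, 0.0)) for t in vocab)


# Hypothetical next-token distributions on a math prompt.
target = {"2": 0.7, "4": 0.2, "the": 0.1}

# A draft trained on matching (math) data tracks the target closely.
matched_draft = {"2": 0.6, "4": 0.3, "the": 0.1}

# A generic draft puts most of its mass on an unlikely token.
generic_draft = {"2": 0.2, "4": 0.1, "the": 0.7}

matched_rate = expected_acceptance(target, matched_draft)
generic_rate = expected_acceptance(target, generic_draft)

print(f"matched draft: {matched_rate:.2f}")
print(f"generic draft: {generic_rate:.2f}")
```

Both drafts here have the same vocabulary and could share an architecture; only the overlap with the target distribution differs, and that overlap alone sets the acceptance rate.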

EpochX Proposes a Credits-Based Marketplace for Human-Agent Work Delegation

A new paper introduces EpochX, an infrastructure layer where tasks flow between humans and AI agents through a credits-native marketplace. The system handles delegation, verification, and payment at scale. The design assumes the bottleneck for AI agents has shifted from raw capability to coordination and accountability. (huggingface.co)

CARLA-Air Adds Drone Dynamics to the CARLA Driving Simulator

A new extension unifies aerial and ground agent simulation in a single physics-consistent environment. Existing open-source platforms separate driving and multirotor simulation, forcing brittle co-simulation bridges. CARLA-Air supports joint air-ground scenarios for embodied intelligence research. (huggingface.co)

LongCat-Next Converts All Modalities to Discrete Tokens for Unified Autoregressive Generation

The Discrete Native Autoregressive (DiNA) framework represents text, images, and other modalities within a shared discrete vocabulary. The approach eliminates the adapter-based architectures that treat non-text modalities as bolt-on modules. The model handles multimodal input and output through a single next-token prediction loop. (huggingface.co)

VGGRPO Uses 4D Latent Rewards to Fix Geometric Inconsistency in Generated Video

Video diffusion models produce high-quality frames but frequently break geometric consistency across time. VGGRPO applies reinforcement learning with a 4D latent reward signal, avoiding the cost of repeated VAE decoding in RGB space. The method works on dynamic scenes, where prior geometry-aware alignment was limited to static content. (huggingface.co)

GEditBench v2 Provides 1,200 Real-World Tests for Image Editing Models

A new benchmark addresses gaps in how image editing models are evaluated: narrow task coverage and metrics that miss visual consistency. GEditBench v2 includes 1,200 real-world use cases and measures preservation of identity, structure, and semantic coherence between original and edited images. (huggingface.co)