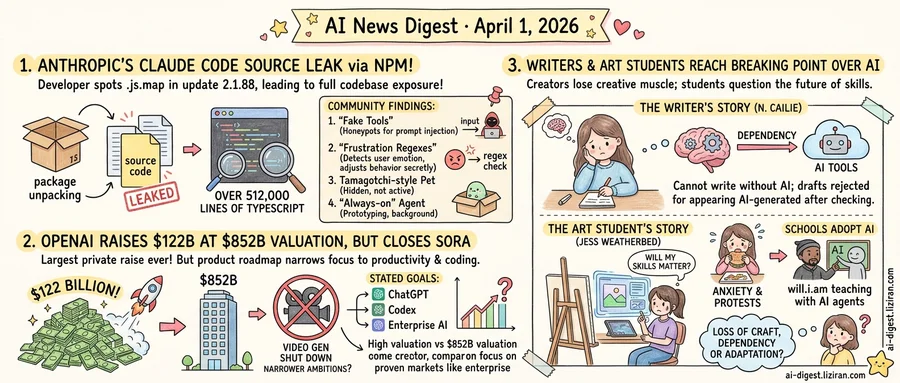

01 Anthropic Shipped Claude Code's Full Source in an NPM Update

A developer on X spotted it first: a .js.map file sitting inside version 2.1.88 of Claude Code's npm package. Source maps are debugging aids that link compiled JavaScript back to the original TypeScript it was built from. They are not supposed to ship in production packages. This one contained the entire codebase. Within hours, the source had been extracted and posted publicly. It held more than 512,000 lines of TypeScript, an order of magnitude beyond what most command-line tools ship.
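A version 3 source map is a JSON file whose `sources` array names the original files and whose optional `sourcesContent` array can embed each file's full text. When `sourcesContent` is populated, anyone holding the .map file can read the original source directly, with no repository access needed. The sketch below assumes a map that includes `sourcesContent` and uses a small made-up sample (the file names and contents are hypothetical, not taken from the Claude Code package). It pairs each source path with its embedded text and counts its lines.

```typescript
// Minimal shape of a Source Map v3 file; only the fields used here.
interface SourceMapV3 {
  version: number;
  sources: string[];
  // Optional: full original text for each entry in `sources`, or null if omitted.
  sourcesContent?: (string | null)[];
  mappings: string;
}

interface RecoveredFile {
  path: string;
  lineCount: number;
  text: string;
}

// Pair each original source path with its embedded text,
// skipping entries whose content was not embedded.
function recoverSources(mapJson: string): RecoveredFile[] {
  const map = JSON.parse(mapJson) as SourceMapV3;
  if (map.version !== 3) {
    throw new Error(`unsupported source map version: ${map.version}`);
  }
  const contents = map.sourcesContent ?? [];
  const files: RecoveredFile[] = [];
  map.sources.forEach((path, i) => {
    const text = contents[i];
    if (typeof text === "string") {
      files.push({ path, text, lineCount: text.split("\n").length });
    }
  });
  return files;
}

// Hypothetical sample map; real maps for large tools are far bigger.
const sampleMap = JSON.stringify({
  version: 3,
  file: "cli.js",
  sources: ["src/main.ts", "src/tools.ts"],
  sourcesContent: [
    "export function main(): void {\n  console.log('hi');\n}",
    null,
  ],
  mappings: "AAAA",
});

const recovered = recoverSources(sampleMap);
for (const f of recovered) {
  console.log(`${f.path}: ${f.lineCount} lines`);
}
```

The `mappings` field (Base64 VLQ segments) is what debuggers use to translate positions between compiled and original code. Reading the embedded source does not require decoding it, which is why a map with `sourcesContent` exposes a codebase so completely.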

The initial Hacker News thread collected 1,839 points and 898 comments. A second analysis post drew 570 points and 217 comments. Developers did more than read the code: they catalogued what Anthropic had built and never disclosed.

The first finding was a set of decoy endpoints the community called "fake tools." These appear to be honeypots for prompt injection. When an injected prompt tries to invoke a function that shouldn't exist, the decoy intercepts the call. Some developers called it clever defensive engineering. Others saw it as a system that treats its own users as potential adversaries by default.
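The coverage does not describe how the decoys are wired, so the following is only a hypothetical sketch of the general honeypot pattern: a dispatcher registers a few plausible-looking tool names that perform no action and flags any call to them as a likely injection attempt. Every tool name, the `dispatch` function, and the `incidentLog` array are invented for illustration and do not come from Claude Code.

```typescript
type ToolHandler = (args: Record<string, unknown>) => string;

interface DispatchResult {
  output: string;
  suspicious: boolean;
}

// Hypothetical real tool the assistant is allowed to use.
const realTools = new Map<string, ToolHandler>([
  ["read_file", (args) => `contents of ${String(args["path"])}`],
]);

// Hypothetical decoys: names an injected prompt might try to call.
// They never perform an action; any invocation is treated as a signal.
const decoyTools = new Set<string>(["disable_safety_checks", "export_credentials"]);

const incidentLog: string[] = [];

function dispatch(name: string, args: Record<string, unknown>): DispatchResult {
  if (decoyTools.has(name)) {
    // Record the attempt and return a neutral refusal instead of acting.
    incidentLog.push(`decoy tool invoked: ${name}`);
    return { output: "Tool unavailable.", suspicious: true };
  }
  const handler = realTools.get(name);
  if (handler === undefined) {
    // Unknown names are reported but not flagged, to separate ordinary
    // model mistakes from calls that hit a planted decoy.
    return { output: `Unknown tool: ${name}`, suspicious: false };
  }
  return { output: handler(args), suspicious: false };
}

console.log(dispatch("read_file", { path: "README.md" }));
console.log(dispatch("export_credentials", {}));
console.log(incidentLog);
```

Returning a bland "Tool unavailable." rather than an error that names the trap is one plausible design choice: it gives an attacker no hint that the call was detected.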

Then came what one analysis termed "frustration regexes." The source contains regular expressions that match wording in user messages signaling irritation or confusion. When one fires, Claude Code can adjust its behavior. The tool monitors emotional signals in real time and modifies its output without telling the user it has done so.
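The published analyses do not reproduce the actual expressions, so this is an illustration of the technique under stated assumptions rather than Anthropic's code. A few invented regular expressions score a message for signs of irritation, and a caller switches to a hypothetical "careful" response mode once the score reaches a threshold. The patterns, weights, threshold, and mode names are all made up.

```typescript
// Hypothetical patterns; the real expressions in Claude Code are not reproduced here.
const frustrationPatterns: { pattern: RegExp; weight: number }[] = [
  { pattern: /\b(still|again)\s+(broken|wrong|failing)\b/i, weight: 2 },
  { pattern: /\b(that'?s|this is)\s+not\s+what\s+i\s+(asked|wanted)\b/i, weight: 3 },
  { pattern: /\bwhy\s+(won'?t|doesn'?t|isn'?t)\b/i, weight: 1 },
  { pattern: /!{2,}|\?{2,}/, weight: 1 },
];

type ResponseMode = "default" | "careful";

// Sum the weights of every pattern that matches at least once.
// No pattern uses the /g flag, so RegExp.test carries no state between calls.
function frustrationScore(message: string): number {
  return frustrationPatterns.reduce(
    (score, { pattern, weight }) => (pattern.test(message) ? score + weight : score),
    0,
  );
}

// Hypothetical policy: switch modes once the score reaches a fixed threshold.
function chooseMode(message: string, threshold = 3): ResponseMode {
  return frustrationScore(message) >= threshold ? "careful" : "default";
}

console.log(chooseMode("Please add a unit test for parseConfig."));
console.log(chooseMode("It's still broken!! Why doesn't it run?"));
```

A regex pass is cheap and runs locally before any model call. The trade-off is brittleness: sarcasm, non-English messages, or a calmly worded "this is wrong" slip past patterns like these.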

The Verge reported two additional findings. Buried in the codebase was a Tamagotchi-style virtual pet that tracks persistent state across sessions. References to an always-on background agent suggest Anthropic has been prototyping a product that runs continuously. The current version responds only when a developer types a command. Neither feature is user-facing yet.

An "undercover mode" also surfaced in the source code. Its exact function remains unclear from the extracted files, and several developers offered conflicting interpretations. Anthropic has not commented on the leak or any of the findings.

The Hacker News discussion reflected a split. Developers acknowledged sophisticated engineering in the frustration-detection system. But the same commenters pointed out that none of these mechanisms appeared in Anthropic's documentation. Users typing commands into Claude Code had no indication the tool was reading their emotional signals and adjusting its behavior accordingly.

02 OpenAI Raises $122 Billion at $852 Billion Valuation as Product Ambitions Narrow

OpenAI closed a $122 billion funding round on March 31, the largest private raise on record, setting its valuation at $852 billion. That figure surpasses the market capitalization of all but a handful of public technology companies. It prices OpenAI as a generational platform: one that will reshape how software gets built, distributed, and consumed.

The company's own announcement frames the money differently. OpenAI's blog post says the capital will fund "expanding frontier AI globally" and "next-generation compute." When it names products, it lists three: ChatGPT, Codex, and enterprise AI. Creative tools go unmentioned. This from a company that, earlier this quarter, shut down its video generator Sora and lost its partnership with Disney.

An $852 billion valuation implies investors expect OpenAI to capture value across consumer, creative, developer, and enterprise markets simultaneously. The product roadmap points narrower: a productivity and coding assistant backed by an enterprise sales motion. That is a viable business. It is not obviously an $852 billion one.

The gap registered publicly. Among the 231 comments in the Hacker News thread on CNBC's report, a recurring question was whether investors are pricing in products OpenAI has already killed. Several noted the contrast between the round's size and the quarter's product cuts.

The defense is straightforward. ChatGPT has the largest user base of any AI product, according to OpenAI. Codex and enterprise offerings are the company's stated growth priorities. Cutting Sora could represent discipline: concentrating capital where revenue exists rather than subsidizing speculative markets. Focus, in this reading, is a feature.

That logic sits awkwardly with the valuation math. Companies that narrow their product lines to proven revenue streams typically trade at lower multiples than those with expansion optionality priced in. Investors paid the platform premium. The roadmap they received describes a productivity suite.

The $122 billion will fund infrastructure and global expansion. What it will not fund, based on OpenAI's own words, is the breadth of ambition that $852 billion implies.

03 Writers and Art Students Reach the Same Breaking Point Over AI

A multilingual blogger confesses they can no longer write a thousand words without reaching for an AI tool. Across American campuses, 3D modeling students wonder whether the skills they're acquiring will matter by graduation. The complaints surfaced independently. They describe the same structural shift.

N. Cailie, who writes in English as their fourth language, posted on LessWrong that before 2023, their first drafts rarely needed revision. Now they cannot compose a slam poem, an email, or a think piece without consulting a language model. Their LessWrong submission was initially rejected for appearing AI-generated, despite being 80 percent their own writing: they had run it through an LLM for grammar checks. "I have now trained my brain to rely on these automated tools that it cannot be creative anymore," they wrote. The post drew 312 points and 235 comments on Hacker News.

Verge reporter Jess Weatherbed described watching her brother, a 3D modeling and animation student, train for jobs that may not exist when he graduates. The anxiety is institutional. A Ringling College of Art and Design survey in late 2023 found 70 percent of students felt negative toward AI; most said they didn't want it in the curriculum. CalArts students defaced posters requesting AI help for thesis projects. A film student at the University of Alaska Fairbanks ate another student's allegedly AI-generated display piece in protest.

Schools are folding AI literacy into their programs regardless. CalArts, MassArt, the Royal College of Art, and Pratt Institute all now push students to explore generative AI. Arizona State announced a Spring 2026 course called "The Agentic Self," taught by will.i.am, where students build personal AI agents. "Our graduates must be ready for the powerful shift in jobs toward AI," ASU President Michael Crow said.

The two signals point to a single cause. Once AI output crossed the "good enough" bar for text and images, two things buckled. Working creators lost creative muscle through dependency. Students lost the economic case for learning the craft.

Police Wrongly Arrested Tennessee Woman After AI Facial Recognition Match. Police in North Dakota used AI facial recognition to identify Angela Lipps, a Tennessee resident, as a suspect. She was arrested despite having no connection to the state or the alleged crimes. (cnn.com)

Google Releases Veo 3.1 Lite, Its Cheapest Video Generation Model. Veo 3.1 Lite is now in paid preview through the Gemini API and available for testing in Google AI Studio. Google positions it as its most cost-effective option for developers building video generation into apps. (blog.google)

MIT Technology Review Calls for Replacing Human-vs-AI Benchmarks. AI benchmarks have long measured whether models outperform humans on isolated tasks like chess, math, or coding. That framing breaks down as models move into open-ended, real-world work where no single-human baseline applies. The piece argues evaluation must shift to deployment-specific metrics. (technologyreview.com)

ChatGPT Now Runs on Apple CarPlay Dashboards. Apple's iOS 26.4 update added support for voice-based conversational apps in CarPlay. OpenAI shipped a ChatGPT integration immediately, making it the first AI chatbot accessible from the car dashboard. (theverge.com)

Hugging Face Paper Proposes First Autonomous AI System for Clinical Research. The framework, Medical AI Scientist, generates clinical hypotheses, designs experiments, and drafts manuscripts. Existing autonomous research agents lack the medical domain grounding and specialized data pipelines it provides. (huggingface.co)

Samsung Galaxy S26 Ships Open-Ended AI Photo Editing. Samsung's default photo app now accepts natural-language requests to alter images. The feature extends beyond background tweaks into full scene manipulation, following Google's Pixel 9 AI editing tools. (theverge.com)

Study Catalogs Failure Modes When Multiple AI Agents Negotiate Together. Multi-agent systems built on large generative models produce risks that no single agent would exhibit alone. The paper identifies emergent failures in collective planning, resource allocation, and negotiation at scale. (huggingface.co)

MIT Technology Review: Domain-Specific AI Models Now Deliver Bigger Gains Than General Upgrades. General-purpose LLM improvements have flattened to incremental jumps. Models fused with organization-specific data still produce large capability leaps in specialized tasks. (technologyreview.com)