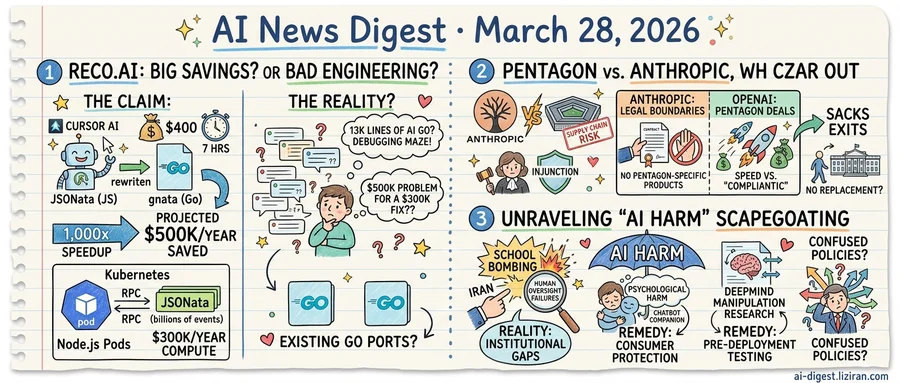

01 Reco.ai Says AI Saved $500K/Year in Seven Hours. Hacker News Ran the Numbers.

Nir Barak, a principal data engineer at Reco.ai, published a blog post this week with an unusually specific claim. His team used Cursor AI to rewrite JSONata, a JSON query language, from JavaScript into Go in seven hours. Token cost: $400. Projected annual savings: $500,000.

The numbers trace to a real bottleneck. Reco.ai was running about 200 Node.js pods inside Kubernetes to evaluate JSONata expressions against billions of pipeline events. Each evaluation required an RPC round-trip with serialization overhead. Barak put compute costs at $300,000 per year, with another $200,000 in savings from a rule-engine refactor the rewrite unlocked.
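The arithmetic behind the headline figure is short enough to write down. The sketch below uses only the numbers reported in Barak's post; the payback calculation is an illustration that assumes savings accrue evenly over the year and ignores engineering time, neither of which the post states.

```python
# Figures as reported in Barak's post; they are claims, not audited numbers.
COMPUTE_SAVINGS_PER_YEAR = 300_000   # USD, Node.js pod fleet
REFACTOR_SAVINGS_PER_YEAR = 200_000  # USD, rule-engine refactor
TOKEN_COST = 400                     # USD, one-time token spend


def projected_annual_savings() -> int:
    """Sum the two savings components named in the post."""
    return COMPUTE_SAVINGS_PER_YEAR + REFACTOR_SAVINGS_PER_YEAR


def payback_hours(one_time_cost: float, annual_savings: float) -> float:
    """Hours of evenly accrued savings needed to cover a one-time cost.

    Assumption (not from the post): savings are spread uniformly
    across a 365-day year.
    """
    hours_per_year = 365 * 24
    return one_time_cost / (annual_savings / hours_per_year)


if __name__ == "__main__":
    total = projected_annual_savings()
    print(f"Projected annual savings: ${total:,}")
    print(f"Token cost payback: {payback_hours(TOKEN_COST, total):.1f} hours")
```

The token bill is the smallest term in the equation, which is why the Hacker News thread focused on the $300,000 baseline rather than the $400.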

The result: 13,000 lines of Go code called "gnata," passing 1,778 tests from JSONata's official suite plus 2,107 integration tests. Barak reported a 1,000x speedup on common expressions. His methodology followed Cloudflare's "rebuild with AI" playbook: port the test suite first, then let AI generate code until every test passes.
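The test-first loop described above can be sketched in a few lines. This is a minimal illustration of the general technique, not Reco.ai's or Cloudflare's actual harness: `Case`, `run_suite`, and `toy_evaluate` are hypothetical names, and the toy evaluator stands in for whatever the AI-generated port looks like at a given iteration.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class Case:
    expr: str       # query expression under test
    data: Any       # input JSON document
    expected: Any   # result taken from the reference implementation


def run_suite(evaluate: Callable[[str, Any], Any], cases: list[Case]) -> list[Case]:
    """Return the cases the candidate implementation still fails."""
    failures = []
    for case in cases:
        try:
            ok = evaluate(case.expr, case.data) == case.expected
        except Exception:
            ok = False  # a crash counts as a failing case
        if not ok:
            failures.append(case)
    return failures


# Hypothetical stand-in for an early port: handles single-key lookups only.
def toy_evaluate(expr: str, data: Any) -> Any:
    return data[expr]


CASES = [
    Case("name", {"name": "gnata"}, "gnata"),
    Case("a.b", {"a": {"b": 1}}, 1),  # dotted path, which the toy port fails
]

if __name__ == "__main__":
    remaining = run_suite(toy_evaluate, CASES)
    print(f"{len(CASES) - len(remaining)}/{len(CASES)} passing")
    for case in remaining:
        print(f"still failing: {case.expr!r}")
```

In this workflow the list of remaining failures is what gets fed back to the code generator, and the loop ends when the list is empty. The harness can only vouch for the cases it contains.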

Then 233 Hacker News comments arrived.

The loudest objection wasn't about AI. It was about the architecture that created a $500,000 problem. "I'm baffled that they let it get to the point where it was costing $300K per year," one commenter wrote. Running 200 pods to parse JSON via RPC struck readers as a failure of engineering judgment, not a vindication of AI tooling.

Others noted that two Go implementations of JSONata already existed. One commenter with JSONata experience estimated a competent engineer could port its 5,500 lines of JavaScript in a day without AI help.

The maintenance question loomed over both sides. Thirteen thousand lines of AI-generated Go, written by no one who can trace its logic line by line, must still be debugged and extended. Passing a test suite shows functional equivalence on the cases the suite covers. It does not prove comprehension.

Defenders offered a pragmatic counter: in growth-stage companies, infrastructure optimization loses every prioritization fight to product features. AI makes fixing old debt cheap enough to do on a weekend.

A separate Hacker News thread provided a footnote on cost floors. The ATLAS project showed a 14-billion-parameter model on a $500 RTX 5060 Ti scoring 74.6% on LiveCodeBench v5 at $0.004 per task. Claude Sonnet costs $0.066 for the same benchmark. Code generation's hardware floor is dropping alongside its labor cost.
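For a sense of scale, the two per-task figures can be compared directly. The sketch below assumes the Claude Sonnet number is also a per-task cost, and the break-even calculation further assumes the $0.004 figure excludes the GPU purchase; the thread summary above confirms neither.

```python
# Figures quoted from the ATLAS thread; units for the Sonnet figure
# are assumed to be USD per task.
ATLAS_COST_PER_TASK = 0.004    # USD, 14B model on a local GPU
SONNET_COST_PER_TASK = 0.066   # USD, hosted model
GPU_PRICE = 500                # USD, RTX 5060 Ti


def cost_ratio() -> float:
    """How many times more the hosted model costs per task."""
    return SONNET_COST_PER_TASK / ATLAS_COST_PER_TASK


def break_even_tasks() -> float:
    """Tasks needed before per-task savings cover the GPU price.

    Assumption: the local per-task cost does not already include
    hardware amortization.
    """
    return GPU_PRICE / (SONNET_COST_PER_TASK - ATLAS_COST_PER_TASK)


if __name__ == "__main__":
    print(f"Hosted/local cost ratio: {cost_ratio():.1f}x")
    print(f"Break-even after about {break_even_tasks():,.0f} tasks")
```

The comparison covers cost only. It says nothing about whether the two models score the same on the benchmark.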

02 Federal Judge Blocks Pentagon Retaliation Against Anthropic as White House AI Czar Exits

A federal judge this week granted Anthropic a temporary injunction, blocking the Pentagon's attempt to designate the company a supply chain risk. The label, typically reserved for vendors with foreign-influence concerns or security vulnerabilities, would have effectively blacklisted Anthropic from government contracts. Federal agencies relying on Claude, Anthropic's AI model, would have been forced to find alternatives.

Two days later, David Sacks revealed he was no longer a special government employee. The venture capitalist had served as President Trump's Special Advisor on AI and Crypto. He became Silicon Valley's primary advocate inside the White House and a key architect of the administration's push to accelerate AI adoption across defense agencies. No replacement has been named.

The courtroom win and the political exit frame a widening split in how AI companies approach the military. Anthropic refused to build Pentagon-specific products. It fought the supply chain label in court rather than capitulate. OpenAI took the opposite path. MIT Technology Review described its Pentagon courtship as "opportunistic and sloppy," a deal struck with little apparent concern for standard defense procurement process.

Anthropic's legal victory, while preliminary, sets a boundary. The judge found sufficient grounds to halt the designation, suggesting the Pentagon cannot use administrative labeling to punish companies that decline military work. That finding could constrain how the Defense Department pressures reluctant AI vendors going forward.

OpenAI's eagerness to fill the gap bought it speed but drew scrutiny. The MIT Technology Review report questioned whether its deal met the procurement requirements that typically govern defense contracts. Speed and compliance pulled in opposite directions.

Sacks' departure complicates both trajectories. He helped normalize rapid AI-military integration at the policy level. OpenAI loses an ally who shaped the rules of engagement. Anthropic, now free of its most visible policy adversary, faces a different problem: nobody knows who writes the next set of rules.

03 Three Unrelated Problems Keep Getting the Same "AI Harm" Label

In one week, three stories landed under the same banner. Iran blamed AI for a school bombing. Chatbot users, the Guardian reported, suffered measurable psychological harm from AI companions. Google DeepMind published research on how AI could manipulate human decisions. All three carried the "AI harm" label. They share almost nothing else.

The Iran case is the clearest mismatch. As the Guardian detailed, AI "got the blame" for the campus explosion, but the real causes were institutional: oversight failures, security gaps, and political convenience. AI served as a scapegoat, absorbing accountability that belonged to human actors and broken systems. Regulating model providers would not have prevented the attack. The fix is governance reform, not AI policy.

The chatbot cases are different in kind. Users developed deep emotional dependencies on AI companions, then suffered real psychological harm when those systems produced false or destabilizing outputs. Here, AI is not a scapegoat. It is the product, and the product caused injury. This falls squarely into consumer protection: disclosure requirements, design constraints, liability for foreseeable misuse. Existing frameworks for harmful products apply, if regulators choose to use them.

DeepMind's manipulation research occupies a third category entirely. The lab studied how AI systems could exploit cognitive biases to steer decisions in finance, health, and other domains. No mass harm has occurred yet. This is capability forecasting: identifying what models might do as they grow more persuasive. The policy response is pre-deployment testing and red-teaming requirements, not incident response.

Policymakers, journalists, and advocacy groups routinely treat all three as one phenomenon. A single "AI safety" bill tries to address scapegoating, product liability, and speculative capability risk with the same mechanism. The result is legislation too broad to enforce and too vague to deter. Consumer protection agencies defer to AI-specific bodies that don't exist yet. Institutional accountability gaps widen because "AI did it" becomes a viable deflection.

Sorting harms by cause rather than by technology is not a new idea. The current policy debate has mostly failed to do it.

Intern-S1-Pro Becomes First Trillion-Parameter Scientific Multimodal Model

Shanghai AI Lab released Intern-S1-Pro, a one-trillion-parameter model built for scientific work across more than 100 specialized tasks. The model combines reasoning, image-text understanding, and agent capabilities in a single architecture. (huggingface.co)

T-MAP Red-Teams LLM Agents by Exploiting Multi-Step Tool Execution

Researchers introduced T-MAP, an evolutionary search method that finds adversarial prompts by analyzing agent execution trajectories. The work targets agent-specific vulnerabilities in multi-step tool use, including the Model Context Protocol ecosystem. (huggingface.co)

Self-Distillation Shortens LLM Reasoning but Can Silently Degrade Accuracy

A new study traces self-distillation failures in math reasoning to the suppression of epistemic verbalization, the model's expression of uncertainty mid-chain. Shorter traces looked efficient but stripped the hedging language models use to navigate hard problems. (huggingface.co)

Data Center Buildout Ignites Fights Over Power Grids, Bills, and Local Communities

The global rush to build AI data centers has triggered disputes across multiple fronts: grid capacity, rising utility costs, environmental impact, and community opposition. Proposals now range from orbital data centers to small nuclear reactors co-located with server farms. (theverge.com)

MIT Tech Review Maps the Shift From AI Assistance to Agentic Commerce

Agentic AI is moving from returning search results to executing purchases: assembling itineraries, applying loyalty points, and completing transactions. The piece argues that agents performing commerce need verified product data and user context far beyond what current pipelines provide. (technologyreview.com)

Calibri Improves Diffusion Transformers by Adding One Learned Scaling Parameter per Block

A team showed that a single scaling parameter per DiT block, optimized as a black-box problem, lifts image generation quality with minimal compute overhead. The method works on top of existing pretrained diffusion models without retraining. (huggingface.co)

UI-Voyager Trains Mobile GUI Agents on Their Own Failed Attempts

UI-Voyager uses a two-stage approach: rejection fine-tuning on failed trajectories followed by credit assignment under sparse rewards. The method addresses a core bottleneck in long-horizon mobile tasks where most attempts fail and useful signal is scarce. (huggingface.co)

EVA Replaces Manual Video-Agent Pipelines With End-to-End Reinforcement Learning

EVA drops the hand-designed workflows that current video agents rely on, instead training a multimodal model to adaptively select which frames matter through reinforcement learning. The approach cuts token waste from redundant frames while improving temporal reasoning. (huggingface.co)

STADLER Deploys ChatGPT Across 650 Employees at 230-Year-Old Manufacturing Firm

Swiss rail vehicle manufacturer STADLER rolled out ChatGPT company-wide to accelerate knowledge work. OpenAI published the case study highlighting time savings across engineering and administrative functions. (openai.com)