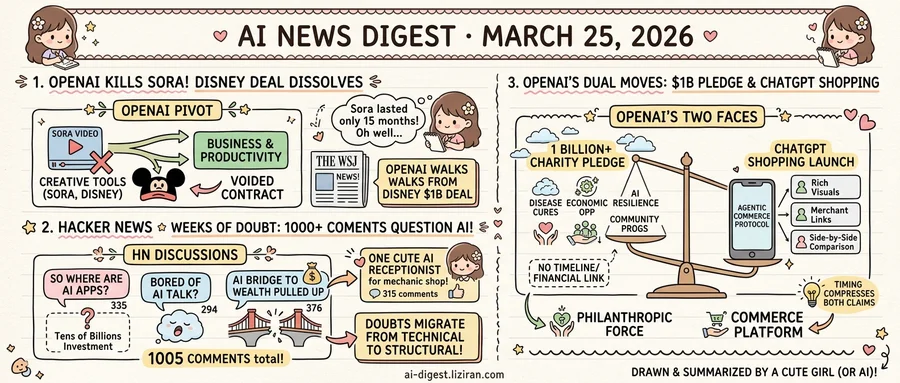

01 OpenAI Kills Sora 15 Months After Launch, Unwinding a Billion-Dollar Disney Deal

Earlier this month, OpenAI published a detailed blog post titled "Creating with Sora Safely," describing how it had built Sora 2 "with safety at the foundation" and outlining concrete protections for long-term operation of a video generation platform. Days later, the company announced it was shutting Sora down entirely.

The video generator launched in late 2024 to widespread attention. OpenAI positioned it as a flagship creative tool, capable of producing high-fidelity video from text prompts. Within months, Disney signed a licensing deal valued at roughly $1 billion, according to The Wall Street Journal. That agreement made Sora one of the largest commercial bets any entertainment company had placed on generative AI.

On Tuesday, OpenAI posted a brief farewell: "We're saying goodbye to Sora." Sam Altman had already told staff that both the product and the Disney partnership were finished. The Wall Street Journal reported the decision earlier, framing it as part of a broader plan to refocus on business and productivity use cases.

Many software products take longer than fifteen months just to reach general availability. Sora completed its entire lifecycle in that window: public debut, enterprise partnership, safety framework publication, and cancellation. The Disney deal alone would have justified years of continued development at most companies. OpenAI chose to walk away from it.

The safety blog post adds an odd coda. Its language assumes a future for Sora that no longer exists. OpenAI's researchers wrote about building trust with creators, iterating on content moderation, and designing for a platform that would grow over time. Either the team writing that post did not know the shutdown was coming, or the decision moved faster than the company's own communications could keep up.

The direction is clear. OpenAI is pulling resources away from creative generation tools and toward products that serve business customers. Sora was the most visible casualty, but the pattern extends beyond a single product. Altman's message to staff, as reported by The Verge, treated the shutdown not as a retreat but as a reallocation. The billion-dollar Disney deal was collateral damage in a pivot that had already been decided.

Disney now holds a voided contract for a product that no longer exists. OpenAI holds the conviction that enterprise productivity will pay better than Hollywood partnerships.

02 Three Hacker News Threads Drew 1,005 Comments Questioning AI in a Single Week

Three posts reached the Hacker News front page in the same March week. Each cleared 250 comments. None announced a product, funding round, or benchmark. All three asked variations of one question: is any of this working?

"So where are all the AI apps?" collected 368 points and 335 comments. "Is anybody else bored of talking about AI?" topped it on points, with 389, and drew 294 comments. "The bridge to wealth is being pulled up with AI" drew the deepest discussion: 376 comments on 254 points. Together the three threads drew 1,005 comments. None of the posts was about what AI can do; all three asked whether the boom is delivering.
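The thread totals quoted above can be checked with a few lines of arithmetic. The sketch below uses only the point and comment counts given in the text. The comments-per-point ratio is an illustrative measure of discussion depth introduced here, not a metric Hacker News reports.

```python
# (title, points, comments) as quoted in the text above
threads = [
    ("So where are all the AI apps?", 368, 335),
    ("Is anybody else bored of talking about AI?", 389, 294),
    ("The bridge to wealth is being pulled up with AI", 254, 376),
]

# Combined comment count across the three threads
total_comments = sum(comments for _, _, comments in threads)

# Comments per point: an illustrative "depth" measure, not an official HN metric
ratios = {title: comments / points for title, points, comments in threads}
deepest = max(ratios, key=ratios.get)

print(total_comments)
for title, ratio in ratios.items():
    print(f"{ratio:.2f}  {title}")
print("deepest:", deepest)
```

Run as written, the total comes to 1,005, matching the headline, and the "bridge to wealth" thread is the only one of the three with more comments than points.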

Each post attacks from a different angle. One questions output: after tens of billions in investment, where are the applications ordinary users rely on daily? Another targets attention: the discourse around AI has grown so repetitive that engaged developers are opting out. The third questions distribution: if AI generates wealth, returns concentrate among those with existing capital and compute access.

These aren't random gripes surfacing in isolation. They track a progression. Developers who spent 2024 debating model quality now ask "who benefits?" and "why are we still talking about this?" Skepticism has migrated from technical capability to structural legitimacy.

The counterpoint arrived the same week. One developer built an AI receptionist for her brother's mechanic shop: answering phones, booking appointments, handling Spanish-speaking customers. Her post earned 306 points and 315 comments. Foundation model breakthroughs played no role. She solved a family member's scheduling problem with available tools.

That post's traction completes the pattern. The AI application that developers can't find may look less like the next platform and more like a phone system for a garage. Community response matched the existential threads in intensity. Practical, small, mundane. Among the four viral posts, only the receptionist project wore those labels as a badge.

03 OpenAI Pairs $1 Billion Charity Pledge with ChatGPT Shopping Launch

OpenAI's foundation committed at least $1 billion to curing diseases, expanding economic opportunity, strengthening AI resilience, and funding community programs. Days later, the company launched a visually immersive shopping experience inside ChatGPT, powered by what it calls the "Agentic Commerce Protocol." One announcement positions OpenAI as a philanthropic force. The other turns its chatbot into a product discovery platform with merchant integrations and side-by-side comparisons.

The billion-dollar figure comes with caveats. OpenAI says "at least" $1 billion but has not disclosed a timeline, specific funding mechanisms, or the foundation's financial relationship to the for-profit entity. The foundation predates OpenAI's corporate restructuring, yet its role has evolved alongside the company's shift toward profit. Four stated priorities span disease treatment, economic mobility, AI safety, and local community grants. Whether the pledge draws from operating revenue, future equity, or some other vehicle remains unstated.

ChatGPT users can now browse products, compare options visually, and connect with merchants inside the chat interface. Product listings appear as rich visual cards with pricing, ratings, and direct purchase links. The Agentic Commerce Protocol lets merchants integrate their inventory into conversations, suggesting infrastructure built for transactions, not just recommendations. That moves ChatGPT from a conversation tool toward a commerce platform. The revenue logic is straightforward: subscription fees are predictable but flat, while commerce commissions would scale with volume.

These two moves aren't contradictory on their face. Companies routinely fund philanthropic arms with commercial profits. But the timing compresses both claims into a single week: OpenAI wants credit for fighting disease while building a storefront inside its most popular product. The foundation supplies social-responsibility credentials. The shopping launch shows where the company expects future revenue to come from.

OpenAI has not clarified whether commerce revenue would fund the foundation's work. Nor has it explained how merchant partnerships will be selected or what commission structures apply. The philanthropic commitment and the commercial expansion share a press cycle but, so far, no visible financial link.

LongCat-Flash-Prover Releases 560B Open-Source Model for Lean4 Formal Proofs

The 560-billion-parameter mixture-of-experts model tackles formal mathematical reasoning in Lean4 by splitting the task into auto-formalization, sketching, and proving. A Hybrid-Experts Iteration Framework generates high-quality training trajectories for each capability. Source: huggingface.co
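For readers unfamiliar with Lean 4, the three stages named above can be illustrated on a toy statement. This is a hand-written sketch, not output from LongCat-Flash-Prover and not a description of its pipeline. The theorem names are made up for illustration, and the example assumes only lemmas from Lean 4's core `Nat` library.

```lean
-- Informal claim: for natural numbers a, b, c, (a + b) + c = (c + b) + a.

-- 1. Auto-formalization: state the claim as a Lean 4 theorem, proof deferred.
theorem swap_sum_statement (a b c : Nat) : a + b + c = c + b + a := by
  sorry

-- 2. Sketching: split the proof into intermediate steps, each still open.
theorem swap_sum_sketch (a b c : Nat) : a + b + c = c + b + a := by
  have h1 : a + b = b + a := by sorry
  have h2 : b + a + c = c + (b + a) := by sorry
  have h3 : c + (b + a) = c + b + a := by sorry
  rw [h1, h2, h3]

-- 3. Proving: discharge each open step with a library lemma.
theorem swap_sum (a b c : Nat) : a + b + c = c + b + a := by
  have h1 : a + b = b + a := Nat.add_comm a b
  have h2 : b + a + c = c + (b + a) := Nat.add_comm (b + a) c
  have h3 : c + (b + a) = c + b + a := (Nat.add_assoc c b a).symm
  rw [h1, h2, h3]
```

The sketch stage already type-checks (with `sorry` warnings), which is what lets the final stage fill each gap independently.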

OpenResearcher Builds Fully Offline Pipeline for Training Deep Research Agents

The open-source pipeline decouples corpus bootstrapping from multi-turn trajectory synthesis, removing the need for proprietary web APIs during training. All search-and-browse loops run locally, making large-scale trajectory generation cheaper and reproducible. Source: huggingface.co

daVinci-MagiHuman Open-Sources Single-Stream Audio-Video Generation Model

The model generates synchronized video and audio through a single Transformer that processes text, video, and audio tokens in one unified sequence. The design drops cross-attention and multi-stream complexity in favor of standard self-attention, simplifying both training and inference. Source: huggingface.co
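The single-stream idea described above can be sketched in a few lines: tokens from every modality are concatenated into one sequence, and ordinary self-attention mixes them, with no separate cross-attention path. The code below is a toy illustration under strong assumptions. It uses random vectors in place of real embeddings, one attention head, and identity projections instead of learned weights. It is not daVinci-MagiHuman's architecture or code, and all names are hypothetical.

```python
import math
import random

def softmax(row):
    """Numerically stable softmax over a list of floats."""
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(tokens):
    """Toy single-head self-attention with identity Q/K/V projections."""
    d = len(tokens[0])
    out = []
    for q in tokens:
        # Scaled dot-product score of this token against every token
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d)
                  for k in tokens]
        weights = softmax(scores)
        # Weighted average of all tokens, regardless of modality
        out.append([sum(w * v[j] for w, v in zip(weights, tokens))
                    for j in range(d)])
    return out

def random_tokens(n, d, rng):
    """Stand-in for real embeddings: n random d-dimensional vectors."""
    return [[rng.gauss(0, 1) for _ in range(d)] for _ in range(n)]

rng = random.Random(0)
D = 8
text = random_tokens(4, D, rng)
video = random_tokens(6, D, rng)
audio = random_tokens(5, D, rng)

# One unified sequence: every token can attend to every other token
sequence = text + video + audio
mixed = self_attention(sequence)

# Slice the mixed sequence back into per-modality outputs
video_out = mixed[len(text):len(text) + len(video)]
audio_out = mixed[len(text) + len(video):]
```

Because video and audio tokens share one attention pass, each audio token's output already depends on the video tokens and vice versa, which is the property a multi-stream design would otherwise need cross-attention to obtain.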

TerraScope Adds Pixel-Level Grounding to Earth Observation Vision-Language Models

The unified VLM handles both optical and synthetic aperture radar inputs and fuses modalities during reasoning. It grounds spatial analysis at pixel resolution, targeting tasks where coarse bounding boxes lose critical geographic detail. Source: huggingface.co

Omni-WorldBench Proposes Interaction-Based Evaluation Standard for 4D World Models

The benchmark argues that world modeling should jointly measure spatial structure and temporal evolution, not just visual fidelity or static 3D metrics. It covers both video generation and 3D reconstruction paradigms under a single evaluation framework. Source: huggingface.co

HopChain Exposes Compounding Errors in Vision-Language Reasoning with Multi-Hop Data

Long chain-of-thought reasoning in VLMs surfaces perception, reasoning, knowledge, and hallucination failures that compound across steps. HopChain synthesizes multi-hop training data where each step depends on visual evidence, forcing models to maintain grounding throughout. Source: huggingface.co

ProactiveBench Tests Whether Multimodal Models Know When to Ask for Help

The benchmark measures whether MLLMs can request simple user interventions, such as removing an obstruction or improving image quality, instead of guessing from insufficient input. It repurposes seven existing datasets to test this "proactive" behavior across recognition, enhancement, and interpretation tasks. Source: huggingface.co

F4Splat Cuts Redundant Gaussians in Feed-Forward 3D Reconstruction

Feed-forward 3D Gaussian Splatting methods typically allocate Gaussians uniformly across views, wasting compute on redundant primitives. F4Splat uses predictive densification to control total Gaussian count while preserving reconstruction quality. Source: huggingface.co