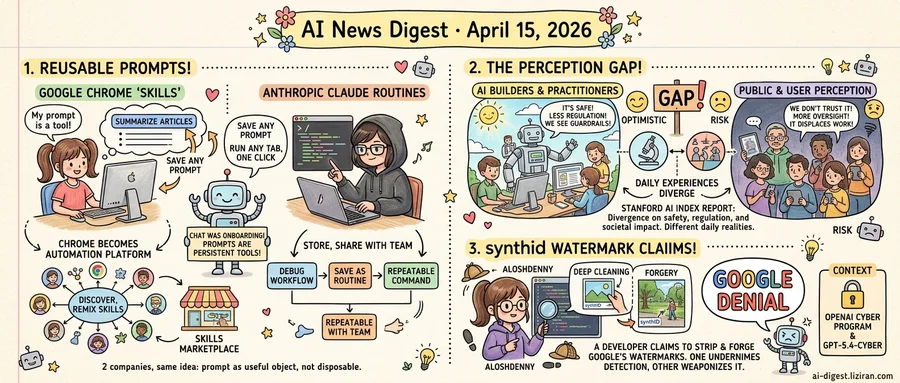

01 Google and Anthropic Ship Near-Identical "Reusable Prompt" Features Days Apart

Google's Chrome browser now lets users save any Gemini prompt as a "Skill": a reusable command that runs across any open tab with one click. Save a prompt that summarizes articles into bullet points, and it becomes a permanent button in your browser. No coding required.

The feature launched this week on Chrome desktop. It turns the browser into something closer to an automation platform than a chat interface. Users can discover Skills others have built, remix them, and combine them into multi-step workflows. Think browser extensions, but written in plain English instead of JavaScript.

That alone would be a product update. What makes it a signal is timing.

The same week, Anthropic shipped Claude Code Routines. Developers can save prompt sequences as named, repeatable commands. Someone who types the same debugging workflow every morning can store it as a routine and trigger it with a slash command. Those prompts persist across sessions and can be shared across teams.

Two companies, two different products, two different user bases, one identical design decision: the prompt is no longer disposable. It's a tool you keep.

This convergence reflects a practical problem both platforms observed. Chat interfaces generate value on first use and almost none on the twentieth repetition of the same request. Users were already copying prompts into text files and building personal libraries in Notion. Both companies are now absorbing that behavior into the product itself.

Chrome's approach is the more ambitious bet on distribution. Skills can be shared and discovered through the browser, which means Google is building a marketplace layer on top of prompt reuse. Someone builds a useful workflow, publishes it, and thousands of users install it without understanding the underlying prompt engineering. That lowers the barrier to building AI-powered tools until anyone who can write a sentence can clear it.

Anthropic's Routines target a narrower audience: developers working inside a terminal. But they fill an equivalent gap. Debugging scripts, code review checklists, and deployment checks follow predictable patterns. Routines let teams encode those patterns once and run them consistently.

Their shared bet is clear: chat was the onboarding format, not the productivity format. The next interface layer treats prompts as persistent, composable, shareable artifacts.

02 The People Building AI Think It's Safe. The Public, Stanford Found, Disagrees on Nearly Every Measure.

The people building AI systems and the people affected by them cannot agree on basic facts about the technology. Stanford's 2026 AI Index report, published this week, measured the perception gap across safety confidence, regulatory preferences, and expected societal impact. On nearly every axis, AI practitioners and the general public gave answers so far apart they could be describing different industries.

AI researchers and engineers reported high confidence that current systems are safe, according to the report. They favored lighter regulation and expressed optimism about the technology's near-term trajectory. The general public, asked the same questions, reported low trust in AI safety and much stronger demand for government oversight. Practitioners see net benefits where the public sees risk. The divergence showed up consistently across the report's global sample, not just in one country or demographic.

MIT Technology Review's analysis suggests the divide is structural, not informational. AI practitioners interact with the technology as builders. They see guardrails, benchmarks, incremental improvements. Everyone else encounters AI as users or subjects, often at the point where the system hallucinates, fails, or displaces a workflow. The two groups aren't debating abstract principles. They're drawing conclusions from incompatible daily experiences. Giving non-practitioners more technical detail about how models work, the analysis argues, won't close the gap if their lived experience keeps diverging from the builder's perspective.

In Ars Technica this week, a college instructor called teaching alongside ChatGPT "the most demoralizing problem" of their career. Students submit AI-generated work. Detection tools prove unreliable. The instructor isn't weighing safety benchmarks or regulatory tradeoffs. They're watching a process they built their professional life around erode semester by semester.

Stanford's report doesn't assign blame. But the data carries a policy implication: AI governance is being shaped in a context where builders and the affected public hold almost no common ground. They disagree on whether the technology is safe, how tightly it should be regulated, and who stands to benefit.

03 One Developer Claims He Can Strip and Forge Google's SynthID Watermarks

A software developer going by the handle Aloshdenny published a GitHub repository last week. It contains code, documentation, and a claim that tests one of Google's most promoted AI safety tools. He says he reverse-engineered Google DeepMind's SynthID watermarking system. His code, he claims, can both strip SynthID watermarks from AI-generated images and inject them into photographs taken by humans.

Google says the claim isn't true.

SynthID is the system Google built to embed invisible signals in AI-generated content so platforms and users can verify whether an image is synthetic. According to Aloshdenny, the reverse-engineering required only publicly available information. He open-sourced everything and documented his process.

The two-way nature of the claimed break is what makes it severe. If watermarks can only be removed, the damage is limited: AI content passes as real. Injection flips the threat. Real photographs get flagged as AI-generated. A genuine image of a politician, a protest, or a crime scene could be tagged as synthetic to discredit it. One direction undermines detection. The other weaponizes it.

Whether the code works as advertised remains contested. Google has not published a detailed technical rebuttal beyond its denial. Aloshdenny's repository is public, and independent researchers can test his claims. The attack surface he describes targets the watermark layer rather than the image model itself. That is the kind of adversarial probing these systems were designed to survive.

OpenAI expanded its Trusted Access for Cyber program the same week and rolled out GPT-5.4-Cyber, a model restricted to vetted cybersecurity defenders. Companies across the AI industry are investing in security tools. SynthID's situation tests whether those investments hold up once adversaries start probing them in the open.

Google has positioned SynthID as foundational to content provenance across its products. If one developer with open-source tools can credibly challenge it, every verification layer built on top inherits that fragility.

OpenAI Acquires Personal Finance Startup Hiro
OpenAI bought Hiro, an AI-powered financial planning company. The deal signals OpenAI's intent to embed financial planning directly into ChatGPT. (techcrunch.com)

Google DeepMind Releases Gemini Robotics-ER 1.6 for Autonomous Robot Control
DeepMind shipped Gemini Robotics-ER 1.6, upgrading spatial reasoning and multi-view scene understanding for physical robots. The model targets autonomous task execution without per-task fine-tuning. (deepmind.google)

Science Corp. Prepares First Human Brain Sensor Implant
Max Hodak's Science Corp. plans to place its first sensor in a human brain. Early applications include electrical stimulation of damaged brain and spinal cord cells to promote healing. (techcrunch.com)

UK Government's Mythos AI Completes Multistep Cybersecurity Infiltration Challenge
A UK government AI model called Mythos became the first AI system to finish a difficult multistep cyber infiltration test. The benchmark aims to measure actual offensive AI capability against real-world attack scenarios. (arstechnica.com)

Ukraine Deploys Ground Robots to Replace Soldiers in Drone Kill Zones
Ukraine is scaling military robot deployments to reduce human exposure in areas saturated by drone strikes. The shift moves ground combat toward mixed human-robot units. (arstechnica.com)

Unitree Lists $4,370 Humanoid Robot on AliExpress
Unitree is selling its R1 humanoid robot internationally through AliExpress at $4,370. The robot ships with acrobatic movement capabilities, though practical consumer use cases remain undefined. (wired.com)

Anthropic's Long-Term Benefit Trust Adds Novartis CEO Vas Narasimhan
Anthropic appointed Vas Narasimhan, CEO of Novartis, to the board of its Long-Term Benefit Trust. The trust oversees Anthropic's public benefit mission independently from the company's commercial leadership. (anthropic.com)

US Hospitals Roll Out AI Chatbots in Patient Portals
Hospital systems across the US are deploying AI chatbots inside patient portals to handle health questions. The expansion comes as more Americans already use consumer AI tools for medical advice. (arstechnica.com)

Vibe-Coding App Anything Pivots to Desktop After Two App Store Removals
Anything, an app that lets users build mobile apps through natural-language prompts, is launching a desktop companion after Apple removed it from the App Store twice. (techcrunch.com)

Silicon Valley Spends Millions to Block Former Palantir Engineer's Congressional Bid
Alex Bores, a former Palantir employee turned New York state legislator, helped pass one of the country's strictest AI laws. Major tech companies are now funding opposition to his congressional campaign. (wired.com)