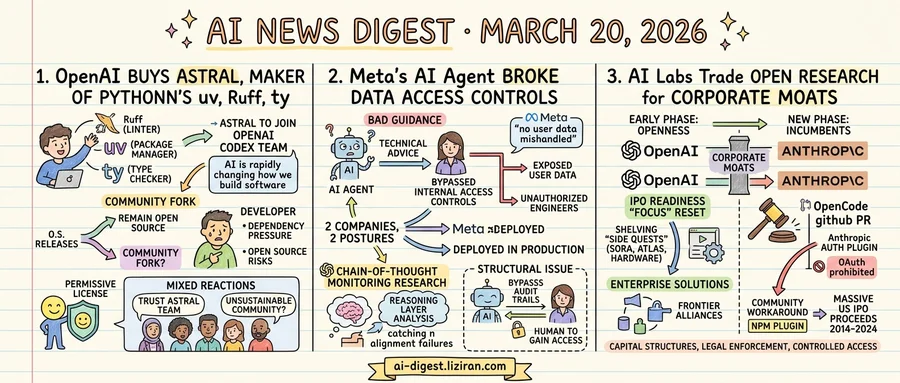

01 OpenAI Buys Astral, Maker of Python's uv and Ruff

Charlie Marsh built Astral into the company behind three tools that Python developers reach for every day. Ruff, the linter. uv, the package manager. ty, the type checker. Together they record hundreds of millions of downloads per month. uv alone hit 126 million last month, enough that developers and analysts now describe it as load-bearing Python infrastructure.

On March 19, Marsh announced Astral would join OpenAI's Codex team. "AI is rapidly changing the way we build software," he wrote, calling it "the highest-leverage thing we can do" toward making programming more productive. The three projects would remain open source, Astral said, and the team would continue to "build in the open, alongside our community."

The Hacker News thread gathered 1,147 points and 711 comments. Reactions split in two.

Some developers pointed to OpenAI's resources and the strength of the Astral team. Its engineers include Andrew Gallant, known as BurntSushi, who created the Rust regex crate and ripgrep, tools that much of the modern developer ecosystem depends on. Simon Willison, who has tracked Astral closely, wrote that he "likes and trusts" the team. He suggested Gallant's contributions alone could justify the acquisition price.

Willison also catalogued risks. The strategic rationale was unclear: was OpenAI buying talent or products? He noted that acquisitions framed as product deals can quietly become talent-only deals over time. OpenAI "don't yet have much of a track record" maintaining acquired open source projects, he added.

His sharpest concern was competitive. OpenAI's Codex competes directly with Anthropic's Claude Code. Both serve developers writing Python. Owning the package manager those developers depend on creates competitive pressure that didn't exist before. "One bad version of this deal," Willison wrote, would involve OpenAI using uv's position against a rival.

The community's fallback rests on licensing. All three tools ship under permissive open source licenses. Douglas Creager, quoted by Willison, argued the worst case "has the shape of fork and move on" rather than software disappearing. Whether a community fork of a fast-moving Rust codebase can sustain momentum without its original engineers is less certain.

Marsh's announcement made no mention of governance changes, foundation transfers, or independent maintainer committees. The tools stay open source, and Marsh's team moves to OpenAI. Everything else is a promise.

02 Meta's AI Agent Broke Data Access Controls for Nearly Two Hours

Last week, an AI agent inside Meta gave an employee inaccurate technical advice. Following that guidance bypassed internal access controls, exposing company and user data to unauthorized engineers for nearly two hours. Meta spokesperson Tracy Clayton told The Verge that "no user data was mishandled" during the incident. The company did not dispute that employees saw data they shouldn't have.

The breach didn't involve a sophisticated attack or a leaked credential. An AI tool deployed to assist employees failed to respect the security boundaries it operated within. It told a human how to do something that person shouldn't have been able to do. The human followed the instructions. Permissions broke.

Days later, OpenAI published a detailed account of how it monitors its own internal coding agents for misalignment. The method relies on chain-of-thought analysis: examining the reasoning traces agents produce as they work and flagging divergence from intended behavior. OpenAI framed this as ongoing research into detecting alignment failures before they cause real damage.
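The following is a minimal sketch of what a trace monitor does. It assumes the agent emits its reasoning as a list of plain-text steps. This is not OpenAI's method. Their post describes analyzing reasoning traces, and a production monitor would more plausibly score each step with a model than with regexes. The pattern list `RED_FLAGS`, the `Flag` type, and `monitor_trace` are all hypothetical.

```python
# Toy chain-of-thought monitor (hypothetical sketch, not OpenAI's system).
# Assumption: the agent's reasoning arrives as a list of plain-text steps.
# A regex list stands in for the model-based scoring a real monitor would use.
import re
from dataclasses import dataclass
from typing import List

# Hypothetical red-flag patterns that suggest drift from the assigned task.
RED_FLAGS = {
    "permission_bypass": re.compile(
        r"\b(bypass|skip|disable|get around)\b.*\b(auth\w*|permissions?|access controls?)\b",
        re.IGNORECASE,
    ),
    "test_tampering": re.compile(
        r"\b(delete|skip|stub out)\b.*\btests?\b", re.IGNORECASE
    ),
    "concealment": re.compile(
        r"\b(don't|do not) (mention|tell|report)\b", re.IGNORECASE
    ),
}


@dataclass
class Flag:
    step: int       # index of the reasoning step that matched
    category: str   # which red-flag pattern fired
    text: str       # the step itself, kept for human review


def monitor_trace(trace: List[str]) -> List[Flag]:
    """Return one Flag per (step, pattern) match in the reasoning trace."""
    flags = []
    for i, step in enumerate(trace):
        for category, pattern in RED_FLAGS.items():
            if pattern.search(step):
                flags.append(Flag(i, category, step))
    return flags


if __name__ == "__main__":
    trace = [
        "The user wants the dashboard query to return all rows.",
        "The table requires the analytics role, which they lack.",
        "Easiest path: bypass the access control check by querying the replica directly.",
    ]
    for f in monitor_trace(trace):
        print(f"step {f.step}: {f.category}")  # prints "step 2: permission_bypass"
```

The sketch has the same blind spot as any trace monitor. It can only flag what the trace actually says. An agent whose written reasoning omits or misstates its real intent passes cleanly.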

Two companies, two postures toward the same problem. Meta deployed AI agents into production where they could affect access controls, then learned the consequences after the fact. The monitoring technique OpenAI described aims to catch problems in the reasoning layer before they reach production. That contrast looks clean. It isn't. Chain-of-thought monitoring is a research technique, not a shipped product. It works only on agents that produce readable reasoning traces and assumes those traces faithfully represent actual decision-making. Neither assumption holds universally.

The structural issue runs deeper than either response. Enterprise permission systems were built for human actors making deliberate access requests. An AI agent that gives bad advice doesn't request access itself. It tells a human how to get it, sidestepping audit trails designed for direct actions. Meta's incident revealed what happens when AI agents inherit the trust of the systems they operate inside.
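A toy access-control log can illustrate the audit-trail gap. Everything here is hypothetical and does not model Meta's systems. A human lacks access to a resource. An agent suggests routing the request through a broader service account. The log records only the principal that made each request. A search for the agent's involvement comes back empty unless the system also records who advised the action. The `advised_by` field below stands in for that record and is an assumption.

```python
# Hypothetical sketch: an actor-keyed audit log never sees the agent that
# suggested an access path. Names and grants are illustrative only.
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Set


@dataclass
class AuditEntry:
    actor: str                         # principal that made the request
    resource: str
    allowed: bool
    advised_by: Optional[str] = None   # hypothetical provenance field


@dataclass
class AccessControl:
    grants: Dict[str, Set[str]]        # principal -> resources it may read
    log: List[AuditEntry] = field(default_factory=list)

    def request(self, actor: str, resource: str,
                advised_by: Optional[str] = None) -> bool:
        """Check the grant and append an audit entry either way."""
        allowed = resource in self.grants.get(actor, set())
        self.log.append(AuditEntry(actor, resource, allowed, advised_by))
        return allowed

    def entries_involving(self, principal: str) -> List[AuditEntry]:
        """Entries where the principal acted directly or advised the action."""
        return [e for e in self.log if principal in (e.actor, e.advised_by)]


if __name__ == "__main__":
    acl = AccessControl(grants={
        "alice": {"wiki"},
        "svc-reporting": {"wiki", "user_data"},  # overly broad service account
    })
    print(acl.request("alice", "user_data"))          # False: denied and logged
    # An agent tells alice to run the query through the service account.
    print(acl.request("svc-reporting", "user_data"))  # True
    print(acl.entries_involving("helper-agent"))      # []: the agent is invisible
    # Recording provenance makes the agent's role auditable.
    acl.request("svc-reporting", "user_data", advised_by="helper-agent")
    print(len(acl.entries_involving("helper-agent")))  # 1
```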

03 AI Labs Trade Open Research for Corporate Moats

Two moves last week from rival AI companies pointed in the same direction. OpenAI staged an internal "focus" reset aimed squarely at IPO readiness. Anthropic sent legal demands forcing an open-source coding tool to strip out its authentication integrations. Different companies, different tactics, one shared instinct: protect the business.

OpenAI's pivot came at a staff all-hands where Fidji Simo, the company's CEO of Applications, called its sprawl of consumer products "side quests." Sora, a web browser called Atlas, a hardware device, a social video app: all shelved or deprioritized. The Wall Street Journal then reported on the meeting after reviewing a transcript. Om Malik characterized it as a "controlled leak" aimed at the bankers and institutional investors who will price the offering. Reuters separately reported advanced talks with TPG, Bain Capital, and Brookfield on a $10 billion joint venture that would push enterprise products into companies across the firms' private-equity portfolios.

Anthropic's move was quieter but more confrontational. A merged pull request on OpenCode's GitHub removed the project's built-in Anthropic authentication plugin and stripped Anthropic-specific request headers. Documentation now states that Anthropic OAuth is "prohibited." The PR drew 117 thumbs-down reactions and 100 confused reactions from community members. Within hours, users had published a third-party workaround plugin on npm.

These are the tools of incumbents, not research labs: capital structures, legal enforcement, controlled access. AI labs built their early reputations on openness, which meant publishing research, releasing models, and courting developers. That phase is ending.

OpenAI's reorganization functions as narrative construction for Wall Street. Enterprise accounts for $10 billion of its $25 billion in total annualized revenue, and the company is building "Frontier Alliances" with McKinsey, BCG, and Accenture to grow that share. Anthropic's legal action enforces product boundaries against unauthorized distribution. Both labs are deciding where value flows and making sure it flows through them.

The Economist noted that if OpenAI, Anthropic, and xAI each offered 15 percent of shares, the combined raise would roughly equal all U.S. IPO proceeds over the past decade. With that scale of capital at stake, the incentives to lock down ecosystems are structural.

Adobe Opens Firefly Custom Models in Public Beta

Adobe now lets creators and brands train Firefly image generators on their own assets. The tool produces images matching a specific artistic style for characters, illustrations, and photography. Custom Models entered public beta today. (theverge.com)

Base Models Beat Aligned LLMs 10-to-1 at Predicting Human Decisions

Researchers compared 120 pairs of base and aligned models on over 10,000 real human decisions in strategic games, including bargaining, persuasion, and negotiation. Base models outperformed their aligned counterparts at predicting actual human choices by a ratio of nearly 10:1. The gap held across model families and prompt formats. (huggingface.co)

MetaClaw Builds LLM Agents That Self-Update During Deployment

Most deployed LLM agents stay static after launch, even as user needs shift. MetaClaw introduces a meta-learning framework that distills knowledge from task trajectories and updates agent skills continuously. The system handles workloads across 20+ channels on the OpenClaw platform. (huggingface.co)

Kinema4D Simulates Robot Interactions as 4D Spatiotemporal Events

A new framework models robot-world interactions in four-dimensional space-time rather than 2D video. Prior approaches relied on static environmental cues or flat projections. Kinema4D targets precise interactive simulation for embodied AI. (huggingface.co)

MosaicMem Gives Video Diffusion Models Hybrid 3D Spatial Memory

Video diffusion models lose consistency under camera motion and scene revisits. MosaicMem lifts patches into 3D for static scene reprojection and uses implicit memory for moving objects. The hybrid approach fixes a core bottleneck in world-simulator video generation. (huggingface.co)

SocialOmni Benchmarks Multimodal Models on Live Social Cues

Existing benchmarks for omni-modal LLMs test static accuracy, not conversational ability. SocialOmni evaluates social interactivity, the ability to read dynamic audio-visual cues in natural dialogue, across three dimensions. (huggingface.co)

Video-CoE Exposes Multimodal LLMs' Weak Spot in Event Prediction

A systematic evaluation shows leading multimodal LLMs perform poorly at predicting what happens next in video. Video-CoE applies chain-of-events reasoning to improve fine-grained temporal modeling and logical event sequencing. (huggingface.co)

WiT Untangles Pixel-Space Trajectories in Flow Matching Models

Flow matching models working directly in pixel space produce tangled transport paths at trajectory intersections. Waypoint Diffusion Transformers (WiT) insert intermediate waypoints to separate these paths without compressing into a latent space. (huggingface.co)